Author: MOKEMI TCHAKOUTE BAUTREL Duval

Date: March 2026

DocuChat-RAG is an intelligent conversational assistant based on retrieval-augmented generation (RAG), designed to answer questions using only your own documents. Unlike general-purpose chatbots, which can produce erroneous or speculative answers, this assistant is constrained to respond only from indexed documents. That makes it well suited to domain-specific applications and to settings subject to compliance requirements.

The system enhances reliability through:

Users can interact with the chatbot via a simple and intuitive command-line interface (CLI).

This publication provides comprehensive instructions on the architecture, operation, and management of the RAG-based chatbot system. It details all the essential components:

In addition to describing the architecture, this document includes instructions for running the application, configuration options, and a test dataset to validate system behavior. It also outlines current limitations and areas for future improvement.

The dataset used for this application is a collection of seven carefully selected text documents covering a range of current topics:

| File | Topic | Main content |
|---|---|---|
| artificial_intelligence.txt | Artificial Intelligence | Principles of AI, Machine Learning, Deep Learning, NLP, AI Ethics |
| biotechnology.txt | Biotechnology | CRISPR, Synthetic biology, Personalized medicine, Regenerative medicine, Bioethics |
| climate_science.txt | Climate Science | Climate change, Greenhouse effect, Ecological impacts, Renewable energy, Adaptation |
| quantum_computing.txt | Quantum Computing | Quantum principles (superposition, entanglement), Quantum algorithms, Quantum hardware, Applications |
| space_exploration.txt | Space Exploration | Solar system, Mars exploration, International Space Station, Future of space travel, Exoplanets |
| sustainable_energy.txt | Sustainable Energy | Solar energy, Wind energy, Energy storage, Smart grids, Green hydrogen |
| sample_documents.txt | Data Science | Data science methodology, Feature engineering, Model evaluation, MLOps, Visualization |

Together, these files form an encyclopedic and multidisciplinary knowledge base, enabling the assistant to answer a variety of questions in cutting-edge scientific and technological fields.

| Component | Technology | Role |
|---|---|---|
| Language | Python 3.9+ | Primary programming language |
| Vector Database | ChromaDB | Semantic storage and retrieval |
| Embeddings | Sentence Transformers (all-MiniLM-L6-v2) | Text-to-vector conversion (384 dimensions) |
| LLM | OpenAI / Groq / Gemini | Response generation |
| Framework | LangChain | RAG orchestration and memory management |
| Interface | CLI (Python) | Command-line interaction |

```text
               ┌─────────────────────────────┐
               │            USER             │
               │       Ask a Question        │
               └──────────────┬──────────────┘
                              │
                              ▼
               ┌─────────────────────────────┐
               │       QUERY EMBEDDING       │
               │    Sentence Transformers    │
               │     (all-MiniLM-L6-v2)      │
               └──────────────┬──────────────┘
                              │
                              ▼
               ┌─────────────────────────────┐
               │        VECTOR SEARCH        │
               │          ChromaDB           │
               │  Top-K Semantic Retrieval   │
               └──────────────┬──────────────┘
                              │
                              ▼
               ┌─────────────────────────────┐
               │     RETRIEVED DOCUMENTS     │
               │       Relevant Chunks       │
               │ (chunk_size=500, overlap=50)│
               └──────────────┬──────────────┘
                              │
             ┌────────────────┴────────────────┐
             ▼                                 ▼
┌─────────────────────────┐       ┌─────────────────────────┐
│   CONVERSATION MEMORY   │       │   PROMPT CONSTRUCTION   │
│   Windowed Memory (5)   │       │   Context + Question    │
│    JSON Chat History    │       │  Memory + Instructions  │
└────────────┬────────────┘       └────────────┬────────────┘
             │                                 │
             └────────────────┬────────────────┘
                              ▼
               ┌─────────────────────────────┐
               │             LLM             │
               │   OpenAI / Groq / Gemini    │
               │     Grounded Generation     │
               └──────────────┬──────────────┘
                              │
                              ▼
               ┌─────────────────────────────┐
               │        FINAL ANSWER         │
               │      Grounded Response      │
               └─────────────────────────────┘
```

| Component | Description |
|---|---|
| RAGAssistant | Main class that orchestrates the end-to-end request flow |
| VectorDB | Handles ingestion, chunking, and semantic search via ChromaDB |
| WindowedFileChatHistory | Custom memory: a window of the last 5 exchanges plus JSON persistence |
| Multi-LLM Support | Support for models from multiple vendors (OpenAI, Groq, Gemini) |
| Prompt Template | Structured template with hallucination-prevention constraints |
| CLI Interface | Command-line interface for interactive testing |
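The following sketch makes the division of labour in this table concrete. It is minimal and framework-free, and shows one way an orchestrator such as RAGAssistant might chain retrieval, windowed memory, and generation. The class name MiniRAGAssistant and its `retrieve`/`generate` callables are hypothetical stand-ins for VectorDB, WindowedFileChatHistory, and the LLM. The real class is built on LangChain, and its method names may differ.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

Exchange = Tuple[str, str]  # (user question, assistant answer)


@dataclass
class MiniRAGAssistant:
    """Hypothetical toy orchestrator mirroring the RAGAssistant flow.

    retrieve: question -> relevant chunks (ChromaDB in the real project)
    generate: (question, chunks, recent exchanges) -> answer (the LLM)
    """
    retrieve: Callable[[str], List[str]]
    generate: Callable[[str, List[str], List[Exchange]], str]
    k: int = 5  # memory window, counted in exchanges
    history: List[Exchange] = field(default_factory=list)

    def ask(self, question: str) -> str:
        chunks = self.retrieve(question)                       # 1. retrieval
        recent = self.history[-self.k:] if self.k > 0 else []  # 2. windowed memory
        answer = self.generate(question, chunks, recent)       # 3. grounded generation
        self.history.append((question, answer))                # 4. keep the full history
        return answer


# Usage with stand-in components:
assistant = MiniRAGAssistant(
    retrieve=lambda q: ["CRISPR-Cas9 is a gene-editing tool."],
    generate=lambda q, chunks, mem: f"{len(chunks)} chunk(s), {len(mem)} past exchange(s)",
)
print(assistant.ask("Tell me about CRISPR"))  # 1 chunk(s), 0 past exchange(s)
print(assistant.ask("Who discovered it?"))    # 1 chunk(s), 1 past exchange(s)
```

Injecting the components as plain callables keeps the orchestration logic testable without an API key or a vector store.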

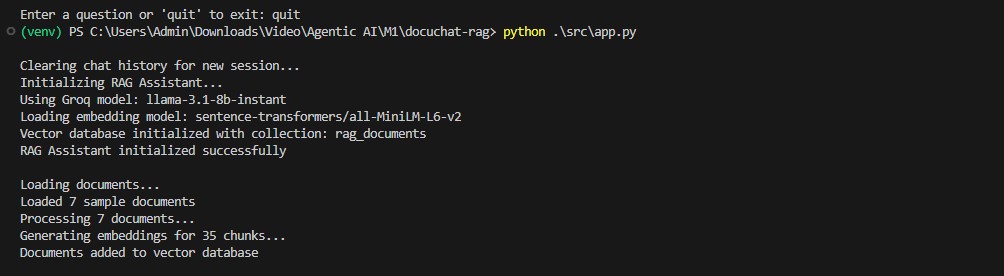

When the application starts, the system automatically loads all .txt documents present in the data/ directory.
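Here is a minimal sketch of that loading step. It assumes a flat data/ directory (no recursion into subfolders) and UTF-8 files. `load_txt_documents` is a hypothetical helper, not necessarily how the project reads its files.

```python
from pathlib import Path
from typing import Dict


def load_txt_documents(data_dir: str = "data") -> Dict[str, str]:
    """Hypothetical loader: read every .txt file in data_dir as UTF-8 into {filename: text}."""
    docs: Dict[str, str] = {}
    # Non-recursive glob: only .txt files directly inside data_dir are picked up.
    # Sorting keeps the load order deterministic across runs.
    for path in sorted(Path(data_dir).glob("*.txt")):
        docs[path.name] = path.read_text(encoding="utf-8")
    return docs
```

The returned dictionary maps each file name to its full text, which is the input the chunking step works on.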

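Ingestion pipeline: load each .txt file → split it into chunks with RecursiveCharacterTextSplitter (chunk_size=500, chunk_overlap=50) → embed each chunk with all-MiniLM-L6-v2 → store the vectors in ChromaDB.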
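The chunk size/overlap trade-off can be illustrated with a simplified splitter. This sketch is not RecursiveCharacterTextSplitter. The LangChain class first tries to split on separators (by default paragraph breaks, then line breaks, then spaces) so that chunks end at natural boundaries. By contrast, `chunk_text` below (a hypothetical helper) simply slides a 500-character window forward by 450 characters, so consecutive chunks share 50 characters.

```python
from typing import List


def chunk_text(text: str, chunk_size: int = 500, overlap: int = 50) -> List[str]:
    """Split text into fixed-size character windows that overlap.

    Simplified stand-in for RecursiveCharacterTextSplitter: it ignores
    paragraph/sentence boundaries and advances by (chunk_size - overlap).
    """
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - overlap
    chunks: List[str] = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):  # this window already reaches the end
            break
    return chunks


chunks = chunk_text("x" * 1200)
print([len(c) for c in chunks])  # [500, 500, 300]
```

The overlap means that text cut at a chunk boundary still appears intact, or nearly intact, in one of the two neighbouring chunks. This helps retrieval at the cost of a slightly larger index.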

Document search uses cosine similarity over an HNSW (Hierarchical Navigable Small World) index, which provides fast approximate nearest-neighbor search.

| Setting | Value | Role |
|---|---|---|
| top_k | 3 | Number of most relevant chunks to return |
| distance_threshold | 0.5 | Maximum distance at which a retrieved chunk is still considered relevant |
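An exact, brute-force search can show how top_k and distance_threshold interact. The illustration rests on assumptions. ChromaDB ranks with its approximate HNSW index rather than scanning every vector. The sketch also assumes that the threshold is applied after the top-k results are returned, keeping only hits whose cosine distance (1 − cosine similarity) is at most 0.5. The `search` and `cosine_distance` helpers and the toy 2-D vectors are hypothetical; real all-MiniLM-L6-v2 embeddings have 384 dimensions.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """1 - cosine similarity: 0 for identical directions, 2 for opposite ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0.0:
        return 1.0  # treat zero vectors as unrelated
    return 1.0 - dot / norm


def search(query_vec: Sequence[float], index: Dict[str, Sequence[float]],
           top_k: int = 3, distance_threshold: float = 0.5) -> List[Tuple[str, float]]:
    """Hypothetical exact top-k search, then drop hits farther than the threshold."""
    scored = sorted((cosine_distance(query_vec, vec), chunk_id) for chunk_id, vec in index.items())
    return [(chunk_id, d) for d, chunk_id in scored[:top_k] if d <= distance_threshold]


index = {"ai-1": [1.0, 0.0], "ai-2": [0.8, 0.6], "bio-1": [0.0, 1.0], "space-1": [-1.0, 0.0]}
hits = search([1.0, 0.0], index)
print([chunk_id for chunk_id, _ in hits])  # ['ai-1', 'ai-2']
```

In this toy run, "bio-1" is among the top three but is filtered out by the threshold. A query on a topic absent from the corpus can therefore return fewer than three chunks, or none, which signals that the documents do not cover the question.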

The application implements custom memory via WindowedFileChatHistory:

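```python
import json

from langchain_community.chat_message_histories import FileChatMessageHistory
from langchain_core.messages import BaseMessage, messages_to_dict


class WindowedFileChatHistory(FileChatMessageHistory):
    """Returns only the last k exchanges to the LLM, but persists the
    entire history in a JSON file."""

    def __init__(self, file_path: str, k: int = 5):
        super().__init__(file_path)
        self.k = k

    @property
    def messages(self) -> list[BaseMessage]:
        all_messages = super().messages  # full history read from the JSON file
        if self.k <= 0:  # guard: all_messages[-0:] would return everything
            return []
        # Keep the last k exchanges (k * 2 messages: one human + one AI each)
        return all_messages[-(self.k * 2):]

    def add_message(self, message: BaseMessage) -> None:
        # The parent add_message() rebuilds the file from self.messages, i.e. the
        # window above; append to the full list instead so older messages are kept.
        full = messages_to_dict(super().messages)
        full.append(messages_to_dict([message])[0])
        self.file_path.write_text(json.dumps(full))
```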

Benefits:

✅ Bounded context: at most k exchanges are sent to the LLM, so the memory's token cost stays roughly constant

✅ Complete persistent history (JSON)

✅ Automatic reset for each test session
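These benefits can be checked without LangChain. The following stdlib-only sketch writes every message to the JSON file but exposes only the last k exchanges, so the history handed to the LLM stays bounded while the file keeps growing. The WindowedJsonHistory class is hypothetical and stores plain role/content dictionaries instead of LangChain messages.

```python
import json
import os
import tempfile
from pathlib import Path
from typing import Dict, List


class WindowedJsonHistory:
    """Hypothetical stdlib illustration: persist every message, expose the last k exchanges."""

    def __init__(self, file_path: str, k: int = 5):
        self.path = Path(file_path)
        self.k = k
        if not self.path.exists():
            self.path.write_text("[]", encoding="utf-8")

    def all_messages(self) -> List[Dict[str, str]]:
        return json.loads(self.path.read_text(encoding="utf-8"))

    def add(self, role: str, content: str) -> None:
        full = self.all_messages()  # append to the full history, not the window
        full.append({"role": role, "content": content})
        self.path.write_text(json.dumps(full), encoding="utf-8")

    @property
    def messages(self) -> List[Dict[str, str]]:
        if self.k <= 0:
            return []
        return self.all_messages()[-2 * self.k:]  # one exchange = 2 messages


with tempfile.TemporaryDirectory() as tmp:
    h = WindowedJsonHistory(os.path.join(tmp, "chat.json"), k=2)
    for i in range(4):
        h.add("human", f"question {i}")
        h.add("ai", f"answer {i}")
    print(len(h.all_messages()), len(h.messages))  # 8 4
```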

The application automatically detects which API key is available and uses the corresponding provider:

OpenAI: GPT-4o, GPT-3.5-Turbo

Groq: Llama-3, Mixtral

Google: Gemini Pro
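Here is a minimal sketch of such detection. It assumes the conventional variable names OPENAI_API_KEY, GROQ_API_KEY and GOOGLE_API_KEY, and a priority order that simply follows the list above. The actual variable names and precedence in DocuChat-RAG may differ, and `detect_provider` is a hypothetical helper.

```python
import os
from typing import Mapping, Optional, Tuple

# Assumed variable names and priority order (first match wins).
PROVIDERS = (
    ("openai", "OPENAI_API_KEY"),
    ("groq", "GROQ_API_KEY"),
    ("google", "GOOGLE_API_KEY"),
)


def detect_provider(env: Optional[Mapping[str, str]] = None) -> Tuple[str, str]:
    """Return (provider, variable) for the first API key that is set and non-blank."""
    env = os.environ if env is None else env
    for provider, var in PROVIDERS:
        if env.get(var, "").strip():
            return provider, var
    raise RuntimeError("No API key found: set OPENAI_API_KEY, GROQ_API_KEY or GOOGLE_API_KEY in .env")


print(detect_provider({"GROQ_API_KEY": "dummy-key"}))  # ('groq', 'GROQ_API_KEY')
```

Passing an explicit mapping instead of always reading os.environ keeps the function easy to test.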

The prompt system includes strict constraints:

Constraints:
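Grounding constraints are typically combined with the four inputs named in the architecture diagram: instructions, conversation memory, retrieved context and question. The minimal sketch below shows one way to do this. The rule text, SYSTEM_RULES and `build_prompt` are hypothetical, not DocuChat-RAG's actual template.

```python
from typing import List, Tuple

# Hypothetical rule wording illustrating hallucination-prevention constraints.
SYSTEM_RULES = (
    "You are DocuChat, an assistant that answers ONLY from the provided context.\n"
    "- If the context does not contain the answer, reply: "
    "\"I could not find this information in the documents.\"\n"
    "- Do not use outside knowledge and do not speculate.\n"
    "- Keep answers concise and factual."
)


def build_prompt(question: str, chunks: List[str], history: List[Tuple[str, str]]) -> str:
    """Assemble Instructions + Memory + Context + Question into a single prompt string."""
    context = "\n\n".join(f"[{i}] {c}" for i, c in enumerate(chunks, start=1)) or "(no relevant context)"
    memory = "\n".join(f"User: {q}\nAssistant: {a}" for q, a in history) or "(no previous exchanges)"
    return (
        f"{SYSTEM_RULES}\n\n"
        f"Conversation so far:\n{memory}\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


print(build_prompt("What is MLOps?", ["MLOps applies DevOps practices to machine learning."], []))
```

Spelling out an explicit fallback answer gives the model a sanctioned way to decline, which is a common technique for reducing hallucinated answers.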

All parameters are configurable via environment variables (.env):

LLM provider selection

Distance threshold and top_k

Memory window size (k)

Logging level
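Here is a minimal sketch of loading these settings. It assumes hypothetical variable names (LLM_PROVIDER, TOP_K, DISTANCE_THRESHOLD, MEMORY_WINDOW_K, LOG_LEVEL) and the default values given earlier in this document. In a real application, the .env file would first be loaded into the process environment, for example with python-dotenv's `load_dotenv()`.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class Settings:
    llm_provider: str = "auto"  # hypothetical values: "auto", "openai", "groq", "google"
    top_k: int = 3
    distance_threshold: float = 0.5
    memory_window_k: int = 5
    log_level: str = "INFO"


def load_settings(env: Optional[Mapping[str, str]] = None) -> Settings:
    """Build Settings from environment variables, falling back to the documented defaults."""
    env = os.environ if env is None else env
    return Settings(
        llm_provider=env.get("LLM_PROVIDER", "auto").lower(),
        top_k=int(env.get("TOP_K", "3")),
        distance_threshold=float(env.get("DISTANCE_THRESHOLD", "0.5")),
        memory_window_k=int(env.get("MEMORY_WINDOW_K", "5")),
        log_level=env.get("LOG_LEVEL", "INFO").upper(),
    )


print(load_settings({"TOP_K": "5"}).top_k)  # 5
```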

Data flow

Document ingestion flow

```text
┌───────────────────────┐
│    data/ directory    │
│  Raw Text Documents   │
│     (.txt files)      │
└───────────┬───────────┘
            │
            ▼
┌───────────────────────┐
│   Document Loading    │
│   UTF-8 File Reader   │
└───────────┬───────────┘
            │
            ▼
┌───────────────────────┐
│     Text Chunking     │
│   chunk_size = 500    │
│     overlap = 50      │
└───────────┬───────────┘
            │
            ▼
┌───────────────────────┐
│ Embedding Generation  │
│ SentenceTransformers  │
│    384-dim vectors    │
└───────────┬───────────┘
            │
            ▼
┌───────────────────────┐
│       ChromaDB        │
│     Vector Store      │
│  Persistent Storage   │
└───────────────────────┘
```

Request processing flow

```text
┌─────────────────────────────┐
│         User Query          │
└──────────────┬──────────────┘
               │
               ▼
┌─────────────────────────────┐
│       Query Embedding       │
└──────────────┬──────────────┘
               │
               ▼
┌─────────────────────────────┐
│        Vector Search        │
│          ChromaDB           │
│          top_k = 3          │
└──────────────┬──────────────┘
               │
               ▼
┌─────────────────────────────┐
│      Retrieved Context      │
└──────────────┬──────────────┘
               │
               ▼
┌─────────────────────────────┐      ┌─────────────────────────────┐
│       Prompt Assembly       │◄─────│     Conversation Memory     │
│  (Context + User Question)  │      │     (last 5 exchanges)      │
└──────────────┬──────────────┘      └─────────────────────────────┘
               │
               ▼
┌─────────────────────────────┐
│       LLM Generation        │
│      (Grounded Answer)      │
└──────────────┬──────────────┘
               │
               ▼
┌─────────────────────────────┐
│          Response           │
└─────────────────────────────┘
```

Example of interaction

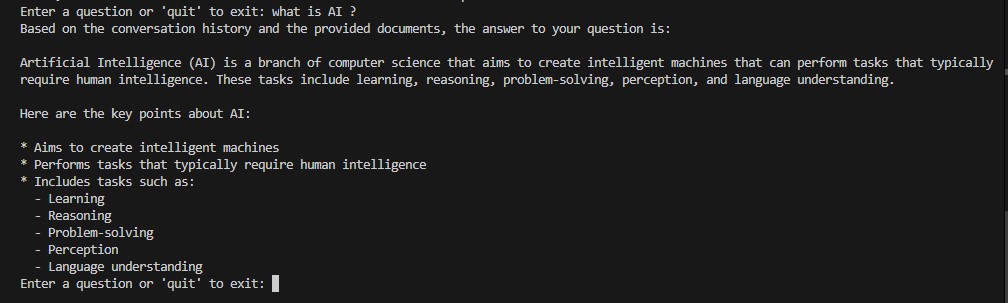

Example 1: Artificial Intelligence

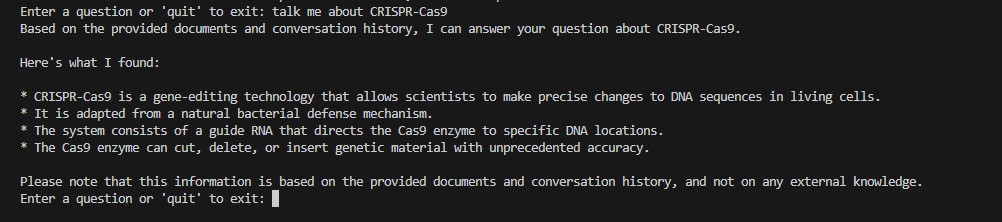

Example 2: Follow-up questions (memory test)

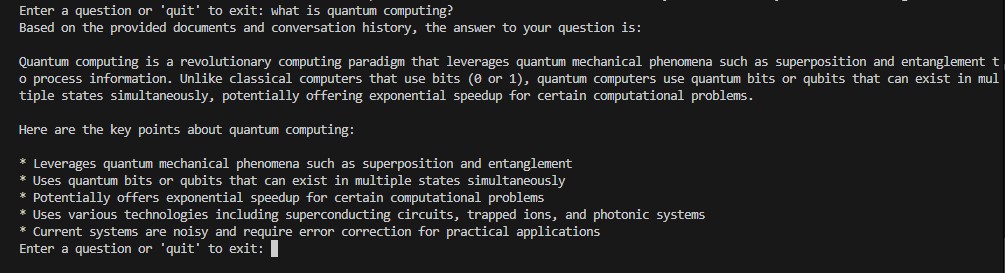

Example 3: Quantum Computing

Follow these steps to install and run DocuChat-RAG on your machine.

```bash
git clone https://github.com/Mcduval/docuchat-rag.git
cd docuchat-rag
```

```bash
python -m venv venv
source venv/bin/activate   # Linux/Mac
venv\Scripts\activate      # Windows
```

```bash
pip install -r requirements.txt
```

```bash
cp .env.example .env
```

Edit .env with your API key (OpenAI, Groq, or Google).

Place your .txt files in the data/ folder.

```bash
python src/app.py
```

Here are some examples of questions to ask the assistant, organized by topic:

| Artificial intelligence questions |
|---|
| "What is Deep Learning?" |
| "Explain Natural Language Processing to me" |
| "What are the ethical challenges of AI?" |
| "What is the difference between supervised and unsupervised learning?" |

| Biotechnology questions |
|---|
| "How does CRISPR-Cas9 work?" |
| "What is personalized medicine?" |
| "Explain synthetic biology" |
| "What are the ethical considerations in biotechnology?" |

| Climate science questions |
|---|
| "What is the greenhouse effect?" |
| "How does climate change affect ecosystems?" |
| "What are the main renewable energy solutions?" |
| "What is the difference between climate adaptation and mitigation?" |

| Quantum computing questions |
|---|
| "What is quantum superposition?" |
| "What is Shor's algorithm used for?" |
| "What are the applications of quantum computing?" |
| "What are qubits and how do they differ from classical bits?" |

| Space exploration questions |
|---|
| "What are the goals of Mars exploration?" |
| "What is the International Space Station?" |
| "How do scientists discover exoplanets?" |
| "What are the terrestrial planets in our solar system?" |

| Sustainable energy questions |
|---|
| "How does solar energy work?" |
| "What is a smart grid?" |
| "Why is energy storage important for renewable energy?" |
| "What is green hydrogen and how is it produced?" |

| Data science questions |
|---|
| "Explain the CRISP-DM methodology" |
| "What is feature engineering?" |
| "How do you evaluate a machine learning model?" |
| "What is MLOps?" |

| Follow-up scenario | Question sequence |
|---|---|
| CRISPR follow-up | "Tell me about CRISPR" → "Who discovered it?" → "What are its medical applications?" |
| Quantum computing follow-up | "Explain quantum computing" → "What makes it different from classical computing?" → "What are the current hardware limitations?" |

| Limitation | Description |
|---|---|
| 📄 Limited format support | Only .txt files are supported |
| 🖥️ No web interface | Command-line interface only (no Streamlit) |
| 👥 No multi-session support | Only one conversation history at a time |
| ☁️ Cloud LLMs only | No support for local models (Ollama, LM Studio) |

| Feature | Status |
|---|---|
| 📄 PDF, Markdown, and DOCX support | 📋 Planned |
| 🖥️ Web interface (Streamlit) | 📋 Planned |
| 👥 Multi-user sessions | 📋 Planned |
| 💻 Local LLMs (Ollama, LM Studio) | 📋 Planned |
| 🔍 Hybrid search (BM25 + vector) | 📋 Planned |
| 📤 Conversation export (PDF, TXT) | 📋 Planned |

Contact information

GitHub: https://github.com/Mcduval

LinkedIn: https://www.linkedin.com/in/mokemi-tchakoute-bautrel-duval

Email: bautrelduval@gmail.com

License

This project is distributed under the MIT License.