Before we build voice clones, ASR systems, or deploy AI that understands Yoruba tones… we must first answer a deceptively simple question: What is sound and how does a machine hear it?

Without an understanding of the physics of sound, our Speech AI intuition will be weaker. Even though modern deep learning can handle raw waveforms end-to-end, knowing why the signal looks the way it does makes debugging and research much easier.

We will look at what sound really is, how we hear it, and how it is digitalized.

What Sound Really Is

Sound is not an MP3 or WAV file; those are just data. Real sound exists in the physical world.

“Sound is movement.” But movement of what? Air.

More precisely: Sound = vibration of air pressure over time

Sound as Air Pressure Waves

Let’s break down each term:

- What is Air? Air is made of tiny particles (molecules) like oxygen and nitrogen. These particles are always moving, bouncing and colliding with everything. Even right now, air particles are hitting your skin.

- What is Pressure? How hard something is pushing on something else. If you press your hand on a table, that’s pressure.

- What is Air Pressure? How hard air molecules are pushing on a surface. Those tiny moving air particles constantly hit your skin, the walls, the ground, everything. You just don’t feel it because the pressure inside your body pushes outward, the air outside pushes inward, and the two balance.

- Why Sound Needs Air (or Another Medium)? Sound is vibrating air molecules, a repeating pattern of compression (molecules crowded) and rarefaction (molecules spread apart). Without molecules to push against each other, this pattern cannot exist. Therefore, sound cannot travel in a vacuum. The atmosphere is crucial: it gives the air molecules their “default balance,” allowing vibrations to propagate.

- What is Vibration? A repeated back-and-forth motion around a resting position. Not just any movement or shaking, but movement that goes one way, then the other way, over and over again. Every object has a position where it naturally sits, for example a guitar string at rest or your vocal cords when you’re silent. That neutral point is called its rest position. So if you pluck a guitar string, it goes forward → comes back → goes forward → comes back.

How Vibration Produces Sound

When something vibrates:

- It disturbs nearby air molecules

- Molecules get crowded → compression

- Molecules spread out → rarefaction

- This pattern repeats, forming a traveling wave of air pressure

So when you hit a drum or clap your hands, you disturb the air pressure nearby: the closest air molecules start to vibrate, and they in turn set the surrounding molecules vibrating. That is how sound travels, like a ripple in water.

Important: The air molecules themselves don’t move far; the pressure wave moves through them, like a ripple in water.

Characteristics of Sound

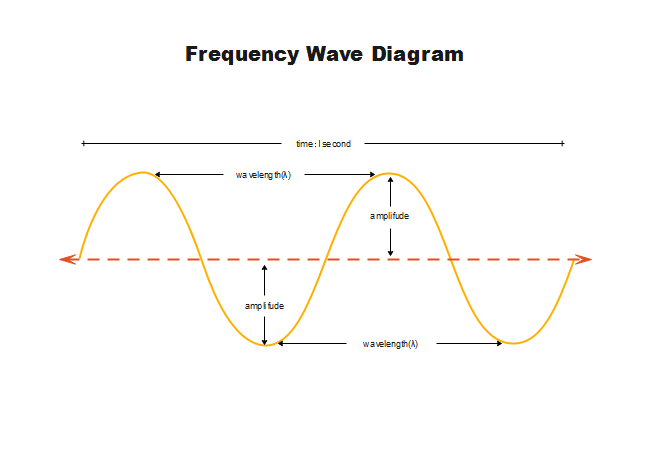

Frequency

- Frequency (Pitch): How fast something vibrates. It determines whether a sound is high or low.

- Faster vibrations → higher pitch (like a whistle or a bird chirping).

- Slower vibrations → lower pitch (like a drum or a bass voice).

Example: When you speak, your vocal cords vibrate about 100–300 times per second (Hz), giving your voice its pitch.

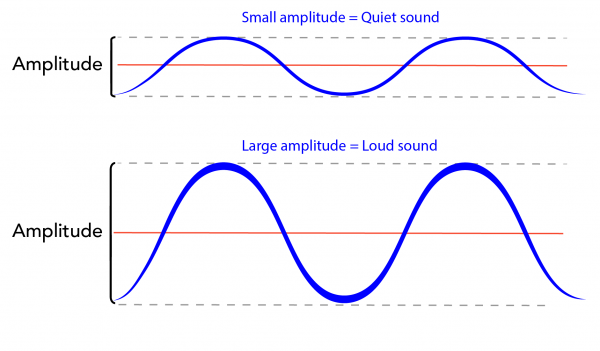

Amplitude

- Amplitude (Loudness): How big the vibrations are, basically, how much the air moves. It determines how loud or soft the sound is.

- Large vibrations → louder sound.

- Small vibrations → quieter sound.

Think of it as: The difference between whispering and shouting.
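To make frequency and amplitude concrete, here is a minimal Python sketch of an idealized pure tone. The function name `pure_tone` and the example values (200 Hz, amplitudes 0.1 and 0.8) are illustrative assumptions, not measurements of a real voice.

```python
import math

def pure_tone(t, frequency_hz, amplitude):
    """Pressure deviation of an idealized pure tone at time t (in seconds)."""
    return amplitude * math.sin(2 * math.pi * frequency_hz * t)

# A 200 Hz tone completes one full cycle every 1/200 s = 5 ms.
period = 1 / 200

# The peak of each cycle occurs a quarter of the way through the period.
whisper_peak = pure_tone(period / 4, frequency_hz=200, amplitude=0.1)
shout_peak = pure_tone(period / 4, frequency_hz=200, amplitude=0.8)

print(whisper_peak, shout_peak)  # same pitch, different loudness
```

Changing `frequency_hz` changes how quickly the cycle repeats (pitch), while changing `amplitude` only scales the height of the wave (loudness).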

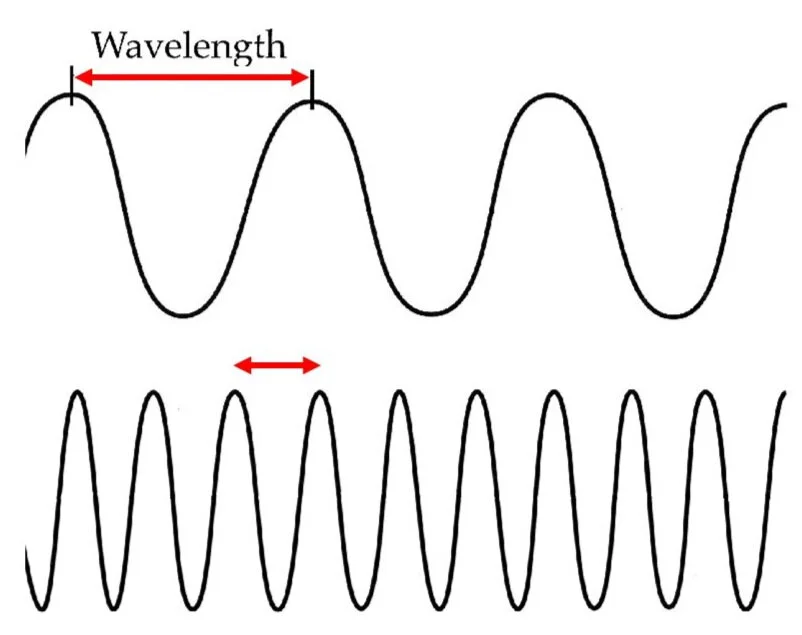

Wavelength

- Wavelength: The distance between one wave crest and the next (or one compression and the next). It is inversely related to frequency:

- Short wavelength → high frequency → high-pitched sound.

- Long wavelength → low frequency → low-pitched sound.

Think of it as: The “spacing” of the sound waves traveling through air.
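The link between wavelength and frequency can be written as wavelength = speed of sound ÷ frequency. The sketch below assumes a speed of sound of roughly 343 m/s (dry air at about 20 °C); the function name is hypothetical.

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # assumption: approximate value for dry air at ~20 °C

def wavelength_m(frequency_hz, speed=SPEED_OF_SOUND_M_PER_S):
    """Wavelength in metres: the distance the wave travels during one cycle."""
    return speed / frequency_hz

for f in (100, 1_000, 8_000):
    print(f"{f} Hz -> {wavelength_m(f):.3f} m")
```

Under that assumption, a 100 Hz tone is a few metres long, while an 8,000 Hz tone is only a few centimetres long.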

Waveform

- Waveform (Timbre or Quality): The unique shape of the sound wave. It determines the character of a sound, even if pitch and loudness are the same.

- Example: Unique waveform shapes explain why your voice sounds different from a friend’s or why a guitar sounds different from a piano.

Think of it as: The “color” or “texture” of the sound, what makes your voice or an instrument recognizable.
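One way to see timbre in numbers is to build two waves with the same pitch but different shapes. The sketch below is a simplification that models a sound as a sum of sine harmonics; the harmonic weights in `bright` are made up for illustration.

```python
import math

def sample_wave(harmonic_amplitudes, fundamental_hz, t):
    """Sum of sine harmonics: entry k scales the (k+1)-th multiple of the fundamental."""
    return sum(
        a * math.sin(2 * math.pi * (k + 1) * fundamental_hz * t)
        for k, a in enumerate(harmonic_amplitudes)
    )

FUNDAMENTAL_HZ = 220          # same pitch for both sounds
pure = [1.0]                  # only the fundamental
bright = [1.0, 0.5, 0.33]     # hypothetical mix with extra harmonics

times = [n / 16_000 for n in range(8)]
pure_shape = [round(sample_wave(pure, FUNDAMENTAL_HZ, t), 3) for t in times]
bright_shape = [round(sample_wave(bright, FUNDAMENTAL_HZ, t), 3) for t in times]

print(pure_shape)
print(bright_shape)
```

Both waves repeat 220 times per second, so they have the same pitch, but the values along the way differ. That difference in shape is what we hear as a different "color".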

Note: A good way to understand this better is trying out this interactive tool https://academo.org/demos/virtual-oscilloscope/

How Humans Hear Sound

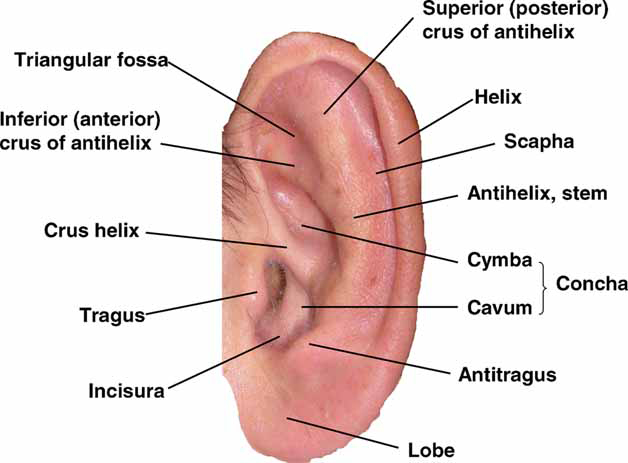

1. Outer Ear: Capturing Sound Waves

- Structure: The outer ear consists of the pinna (the visible part) and the ear canal.

- Function:

- The pinna acts like a funnel, capturing sound waves and directing them into the ear canal.

- Sound waves travel down the canal and reach the eardrum (tympanic membrane).

- Analogy: Like a satellite dish collecting signals.

Key idea: The eardrum moves in step with the air pressure waves that hit it. High pressure pushes the eardrum in; low pressure pulls it out.

2. Middle Ear: Amplifying Vibrations

- Structure: Three tiny bones called ossicles – malleus (hammer), incus (anvil), stapes (stirrup).

- Function:

- They amplify the eardrum’s vibrations, making sure enough energy is transferred into the fluid-filled inner ear.

- The stapes pushes on the oval window, the entrance to the cochlea.

- Analogy: Think of a tiny sound hitting a microphone. The microphone converts it into a small electrical signal. Then, an amplifier boosts the signal so that a speaker can produce loud sound. The ossicles are like this amplifier; tiny vibrations in air become strong enough to move the cochlear fluid.

3. Inner Ear – Converting Vibration to Fluid Waves

- Structure: The cochlea, a spiral-shaped tube filled with fluid.

- Function:

- Vibrations from the stapes create waves in the cochlear fluid.

- The basilar membrane inside the cochlea responds differently depending on frequency:

- High-frequency sounds → vibrate the base of the cochlea.

- Low-frequency sounds → vibrate the apex.

- Analogy: Like a piano keyboard inside your ear, where different keys respond to different pitches.

4. Hair Cells – Turning Motion Into Electricity

- Structure: Tiny sensory cells with hair-like projections called stereocilia.

- Function:

- As the basilar membrane moves, stereocilia bend.

- This opens ion channels, which triggers the release of neurotransmitters.

- Neurotransmitters generate electrical impulses in the auditory nerve.

- Analogy: Like sensors converting water ripples into electrical signals.

5. Auditory Nerve and Brain – Making Sense of Sound

- Pathway: Electrical signals travel through the auditory nerve → brainstem → auditory cortex in the temporal lobe.

- Brain functions:

- Determines pitch (frequency of vibrations).

- Determines loudness (amplitude of vibrations).

- Recognizes timbre (waveform patterns).

- Locates the direction of the sound in space.

- Analogy: Your brain is like a music producer, taking raw waveform data and turning it into a recognizable sound, a word, or a melody. Your brain knows the waveform of your friend’s voice and that is why you are able to recognize their voice.

Digitalization of Sound

Digitalization is the process of turning the continuous vibrations of air into numbers that a machine can process. Think of it as translating the language of air pressure into the language of computers.

But computers do not understand air, pressure, or smooth motion. Computers understand numbers.

So the question becomes: How do we turn smooth air vibrations into numbers? This is what digitalization does. Digitalization translates: Air pressure → Measurements → Numbers → Binary

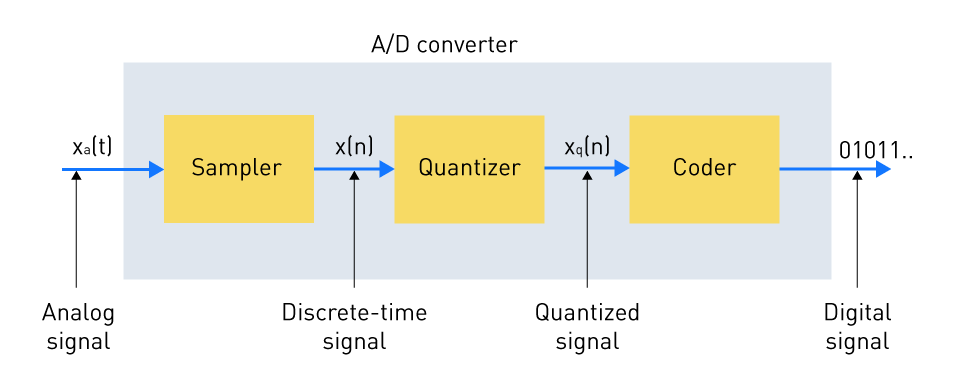

Digitizing sound involves three major transformations:

- Sampling: Capture sound over time

- Quantization: Round loudness values

- Encoding: Store as Binary

Let’s go step by step.

Sampling

Real motion is continuous. If someone waves their hand, the motion flows smoothly.

But a video is NOT continuous motion. A video is many pictures taken quickly. Example: If you take 24 pictures per second and play them fast…

Your brain sees: smooth motion even though it's just snapshots.

So: Real motion → snapshots → video

Sound is recorded the same way. Real sound is continuous air vibration.

Air is moving smoothly all the time, but a computer cannot capture every single moment. So instead, we take snapshots of air pressure, just like a camera takes snapshots of motion.

Sound Sampling = Audio Snapshots

At tiny moments in time we ask: “How much is the air pushed right now?” So instead of storing the whole smooth wave… We store measurements. Like:

| Time | Air Pressure |

|---|---|

| t₁ | 0.3 |

| t₂ | 0.6 |

| t₃ | -0.2 |

| t₄ | -0.5 |

This becomes a list of values which describes the sound.

Suppose we take 16,000 snapshots every second. Just as 24 frames/sec gives us video, 16,000 samples/sec gives us audio.

That is a 16,000 Hz sampling rate: we check the air pressure 16,000 times every second. If we sample too slowly, we miss the shape of the vibration, just as taking 1 photo per second cannot capture fast motion.
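Sampling can be sketched in a few lines of Python. This is a minimal illustration that stands in for a microphone by evaluating an idealized sine wave; the function name `sample_tone` and the 200 Hz test tone are assumptions for the example.

```python
import math

SAMPLE_RATE_HZ = 16_000

def sample_tone(frequency_hz, duration_s, sample_rate_hz=SAMPLE_RATE_HZ):
    """Measure an idealized sine-wave 'air pressure' at evenly spaced instants."""
    n_samples = int(duration_s * sample_rate_hz)
    return [
        math.sin(2 * math.pi * frequency_hz * n / sample_rate_hz)
        for n in range(n_samples)
    ]

samples = sample_tone(frequency_hz=200, duration_s=1.0)

print(len(samples))                         # 16000 snapshots for one second
print([round(s, 3) for s in samples[:4]])   # the first few pressure readings
```

The result is just a list of numbers, one per snapshot, which is the "list of values" described above.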

Nyquist Rule: This is the law that governs whether machines hear correctly… or hallucinate noise. It states that to capture a frequency, you must sample at a rate of at least 2× that frequency (strictly speaking, slightly more than 2×).

Using the video analogy: To capture motion smoothly, you must take at least 2 pictures per movement.

The same holds for sound: to capture a frequency F, you must sample at least 2F times per second.

Most of the information in human speech lies below about 8,000 Hz, meaning the air vibrates up to 8,000 times per second. To capture that properly, we must sample at least 2 × 8,000 = 16,000 times per second. That is why many speech systems use a 16,000 Hz sampling rate.

What if we sample too slowly? Let’s say a sound vibrates 8,000 times per second but we only sample 9,000 times per second. We don’t capture enough detail. It’s like trying to film a fast fan with a slow camera: the motion looks wrong. This error is called aliasing. With aliasing, high-frequency sounds masquerade as lower ones (here, the 8,000 Hz tone shows up as a 1,000 Hz tone), so the sound is distorted and machines may misjudge tone.
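The 8,000 Hz versus 9,000 samples/sec case can be checked numerically. The sketch below uses cosine waves so that the two sampled signals match exactly (with sine waves the alias appears with a flipped sign); the helper names are hypothetical.

```python
import math

def sample_cosine(frequency_hz, sample_rate_hz, n_samples):
    """Sample an idealized cosine tone at evenly spaced instants."""
    return [
        math.cos(2 * math.pi * frequency_hz * n / sample_rate_hz)
        for n in range(n_samples)
    ]

def alias_frequency(frequency_hz, sample_rate_hz):
    """Apparent frequency after sampling, folded into 0..sample_rate/2."""
    folded = frequency_hz % sample_rate_hz
    return min(folded, sample_rate_hz - folded)

SAMPLE_RATE_HZ = 9_000  # too slow for an 8,000 Hz tone

high = sample_cosine(8_000, SAMPLE_RATE_HZ, 18)
low = sample_cosine(1_000, SAMPLE_RATE_HZ, 18)

# The two lists agree to within floating-point error: once sampled,
# the 8,000 Hz tone cannot be told apart from a 1,000 Hz tone.
largest_gap = max(abs(h - l) for h, l in zip(high, low))
print(largest_gap)
print(alias_frequency(8_000, SAMPLE_RATE_HZ))
```

Sampling the same tone at 16,000 samples/sec or faster avoids the fold-over, which is the practical meaning of the Nyquist Rule.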

Quantization

Imagine we sampled sound at one moment and got Air pressure = 0.623748292. This is a very precise real-world value, but computers cannot store infinite precision. They must round it.

So instead of storing 0.623748292 we might store 0.62. That rounding is quantization. So now: Smooth reality → stepped values

In real life, air pressure can take any value. But in digital systems, we only allow certain loudness levels.

Example: Few Loudness Steps (Low Bit Depth)

Let’s imagine our system only allows 4 loudness levels. So instead of storing any value between 0 and 10… We only allow:

| Step | Allowed Value |

|---|---|

| 1 | 0 |

| 2 | 3.3 |

| 3 | 6.6 |

| 4 | 10 |

Now suppose real sound arrives:

| Real Loudness | Stored Value |

|---|---|

| 1.2 | 0 |

| 2.9 | 3.3 |

| 5.0 | 6.6 |

| 7.1 | 6.6 |

| 9.6 | 10 |

Notice: Small differences disappear.

Example: 5.1 and 6.4 both become 6.6, so the smooth waveform becomes jagged. This causes robotic sound, telephone-like quality, and loss of subtle details. This error is called quantization noise.
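The rounding in the 4-level example can be reproduced with a short Python sketch. It assumes "rounding" means snapping to the nearest allowed value; the function name `quantize` is hypothetical.

```python
LEVELS = [0, 3.3, 6.6, 10]  # the four allowed values from the example

def quantize(value, levels=LEVELS):
    """Snap a measurement to the nearest allowed level."""
    return min(levels, key=lambda level: abs(level - value))

real = [1.2, 2.9, 5.0, 7.1, 9.6]
stored = [quantize(v) for v in real]

print(stored)                         # [0, 3.3, 6.6, 6.6, 10]
print(quantize(5.1), quantize(6.4))   # both snap to 6.6
```

The gap between each real value and its stored value is the quantization error that we hear as noise.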

Example in Real Situations

- Whisper: Real loudness: 0.11 → 0.12 → 0.13. With the 4 levels above, all become 0. The whisper disappears.

- Music Fade: Real fade-out: 3.0 → 2.6 → 2.2 → 1.8 → 1.4. Stored with the 4 levels above: 3.3 → 3.3 → 3.3 → 3.3 → 0. Instead of fading smoothly, it suddenly drops. Sounds artificial.

- Subtle Tone Differences: Real tonal variation: 4.2 → 4.4 → 4.6. Stored with the 4 levels above: 3.3 → 3.3 → 3.3. Tone distinctions collapse, and machines may misinterpret meaning.

More Loudness Steps = Better Sound

Now imagine instead of 4 steps… We allow 65,536 steps (16-bit audio). Now:

| Real Loudness | Stored Value |

|---|---|

| 4.21 | 4.209 |

| 4.44 | 4.441 |

| 4.62 | 4.621 |

Almost identical. So the digital sound feels smooth again.

Bit Depth = Number of Loudness Steps

Bit depth controls how many steps exist:

| Bit Depth | Loudness Levels |

|---|---|

| 2-bit | 4 levels |

| 3-bit | 8 levels |

| 8-bit | 256 levels |

| 16-bit | 65,536 levels |

| 24-bit | 16+ million levels |

More levels = smaller rounding = more natural sound
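The relationship between bit depth and rounding size can be sketched as follows. This assumes evenly spaced levels across a normalized range of -1 to 1, which is a simplification; the helper names are hypothetical.

```python
def num_levels(bit_depth):
    """Number of distinct values that bit_depth binary digits can represent."""
    return 2 ** bit_depth

def step_size(bit_depth, full_range=2.0):
    """Spacing between adjacent levels when the range [-1, 1] is split evenly."""
    return full_range / (num_levels(bit_depth) - 1)

for bits in (2, 3, 8, 16, 24):
    print(bits, num_levels(bits), step_size(bits))
```

Each extra bit doubles the number of levels, so the step between neighbouring levels (and therefore the worst-case rounding error) roughly halves.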

Encoding: Turning sound into binary

After quantization, we now have something like this:

| Time | Quantized Loudness |

|---|---|

| t₁ | 2.3 |

| t₂ | 1.8 |

| t₃ | -0.6 |

| t₄ | -2.0 |

But computers still cannot store these directly. Computers only understand 0 and 1

So we must translate each loudness level into binary. This final translation is called Encoding.

- Step 1: Each Loudness Level Gets a Number

Remember: 16-bit gives us 65,536 possible loudness steps. So internally, the system labels them like:

| Loudness Step | Level Number |

|---|---|

| Lowest | 0 |

| Next | 1 |

| Next | 2 |

| ... | ... |

| Highest | 65,535 |

So instead of storing: "Air pressure = 0.62" We store: Level = 42,918

- Step 2: Convert That Number Into Binary

Now the computer translates: 42,918 → into 16-bit binary. Example: 42,918 = 1010011110100110

This is what actually gets stored. So the sound becomes:

| Time | Loudness Level | Binary Stored |

|---|---|---|

| t₁ | 42,918 | 1010011110100110 |

| t₂ | 31,220 | 0111100111110100 |

| t₃ | 12,004 | 0010111011100100 |

Now the sound is fully digital.
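Both encoding steps can be sketched in Python. The linear mapping in `to_level` is an assumption made for illustration (it follows the 0 to 65,535 labelling used here, whereas real 16-bit audio files typically store signed integers), so it will not reproduce the illustrative level numbers in the table. The binary conversion in `to_binary` is standard.

```python
def to_level(value, bit_depth=16):
    """Map a normalized pressure value in [-1, 1] to an integer level number.

    Assumption: levels are spread linearly, with -1 -> 0 and +1 -> the highest level.
    """
    max_level = 2 ** bit_depth - 1
    clipped = max(-1.0, min(1.0, value))
    return round((clipped + 1.0) / 2.0 * max_level)

def to_binary(level, bit_depth=16):
    """Write a level number as a fixed-width string of 0s and 1s."""
    return format(level, f"0{bit_depth}b")

print(to_level(-1.0), to_level(1.0))  # lowest and highest level: 0 65535
print(to_binary(42_918))              # 1010011110100110
```

Decoding is the reverse: `int("1010011110100110", 2)` gives back 42,918, which can then be mapped back to a pressure value.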

Full Pipeline Recap

Real Sound → Smooth vibration

- Sampling: Take snapshots in time, like video frames

- Quantization: Round loudness, like brightness levels

- Encoding: Store using 0s and 1s, like saving pixels

Real Example: 16,000 Hz Audio

If we record speech: Sampling rate = 16,000 samples/sec, Bit depth = 16 bits

Each second becomes: 16,000 numbers. Each number uses: 16 binary digits. So 1 second of audio = 16,000 × 16 bits = 256,000 bits ≈ 32 KB per second. That’s why audio files have size. They are literally binary pressure snapshots.
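The same arithmetic generalizes to any duration. This is a minimal sketch for uncompressed audio that ignores file headers; the function name and the `channels` parameter (1 = mono) are assumptions added for the example.

```python
def audio_size_bytes(duration_s, sample_rate_hz=16_000, bit_depth=16, channels=1):
    """Size of the raw, uncompressed samples, ignoring any file header."""
    total_bits = duration_s * sample_rate_hz * bit_depth * channels
    return total_bits // 8  # 8 bits per byte

print(audio_size_bytes(1))    # 32000 bytes for one second
print(audio_size_bytes(60))   # 1920000 bytes for one minute
```

Compressed formats such as MP3 are smaller than this because they do not store every sample directly.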

Intuition

- Sampling answers: When do we measure sound?

- Quantization answers: How accurately do we measure loudness?

- Low quantization precision: Blocky sound

- High quantization precision: Smooth sound

“Sound begins as vibration, disturbs air pressure, travels as a wave, is interpreted by our ears and brain, and can be captured or reproduced digitally as numbers.”

Summary

- Sound is physical — vibrations cause air pressure waves

- Hearing is biological — ears and brain interpret these waves

- Digital audio is mathematical — computers store numbers representing past vibrations

- Understanding this chain makes working with Speech AI much more intuitive: you can reason about waveforms, frequency, amplitude, and artifacts instead of treating the signal as abstract numbers

Quick Links

- What is Sound: https://www.youtube.com/watch?v=gdGyvGPZ1G0

- Compression and Rarefaction: https://www.youtube.com/watch?v=bYoTRx6gGX0

- Interactive Visualization tool: https://academo.org/demos/virtual-oscilloscope/

- Wavelength, Frequency, Time Period and Amplitude: https://youtu.be/9VSHa1mKcTw?si=rxw7LO4qO7bQ9qCl