The Publication Assistant for AI Projects is an intelligent multi-agent system designed to automate and enhance the peer review preparation process for academic publications in artificial intelligence and machine learning. Leveraging a novel LangGraph-based orchestration framework and state-of-the-art large language models via Groq, the system provides comprehensive, parallelized analysis across six specialized dimensions: clarity, structure, technical soundness, visual presentation, summarization, and metadata generation.

The system architecture implements a distributed agent-based approach where specialized LLM-powered agents work in concert to analyze manuscript drafts, providing actionable feedback that mimics the depth and breadth of human peer review. With built-in guardrails for content safety, robust error handling with exponential backoff, and comprehensive output sanitization, the Publication Assistant demonstrates how modern AI orchestration frameworks can be applied to accelerate and improve academic writing workflows.

Keywords: Multi-agent Systems, LangGraph, Academic Writing, LLM Orchestration, Natural Language Processing, Peer Review Automation

The academic publishing process, particularly in rapidly evolving fields like artificial intelligence and machine learning, faces significant challenges: increasing submission volumes, reviewer fatigue, and the need for rapid iteration on manuscript drafts. While large language models have demonstrated remarkable capabilities in text generation and understanding, their application to comprehensive manuscript review has been limited by the need for multi-dimensional analysis and coherent integration of diverse feedback types.

The Publication Assistant addresses these challenges through a novel multi-agent architecture that:

Parallelizes Analysis: Six specialized agents simultaneously evaluate different aspects of a manuscript, from technical correctness to presentation quality

IMaintains Coherence: LangGraph orchestration ensures consistent output formatting and inter-agent coordination

Ensures Safety: Multi-layer guardrails prevent sensitive information leakage and enforce content policies

Provides Actionable Feedback: Structured outputs with specific suggestions rather than generic commentary

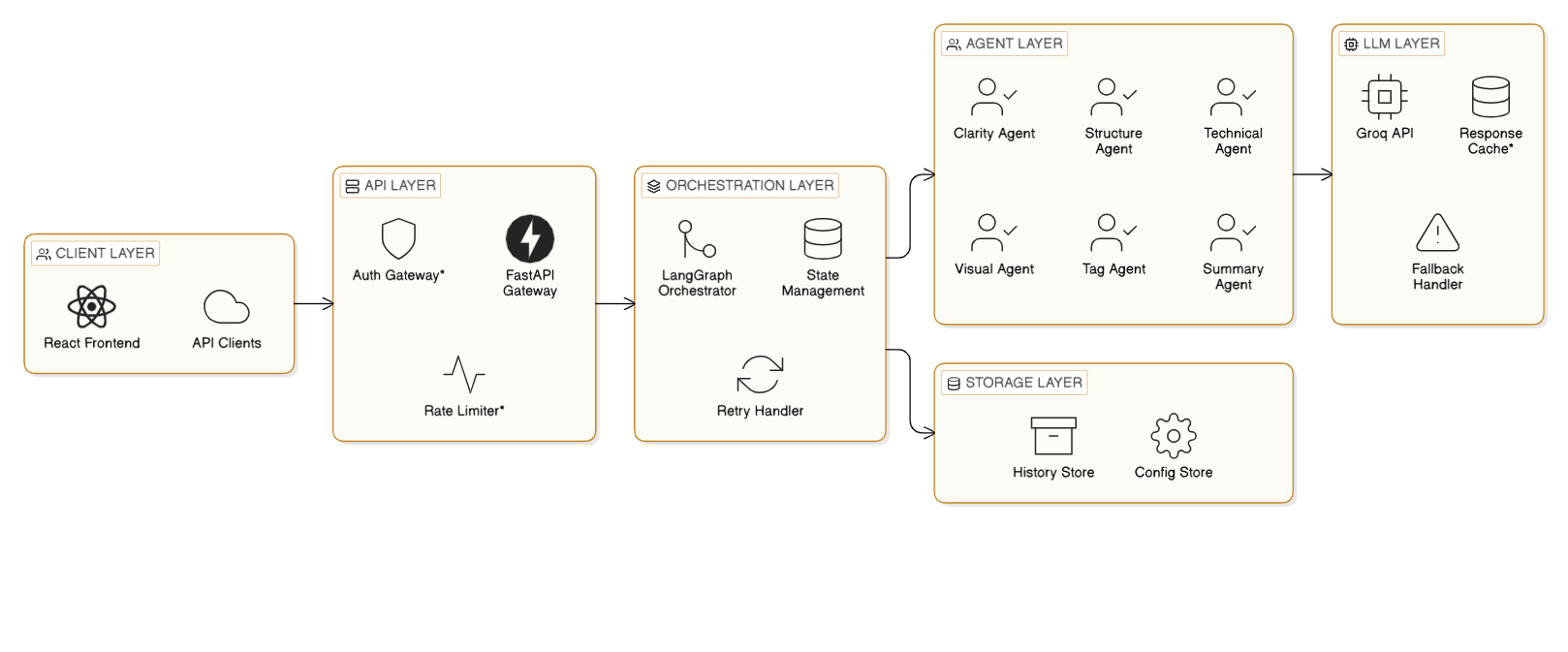

The Publication Assistant employs a modular, microservices-based architecture with clear separation of concerns:

The system exposes a RESTful API using FastAPI, providing:

-Automatic OpenAPI documentation

-Pydantic-based request/response validation

-Built-in CORS support for frontend integration

-Asynchronous request handling

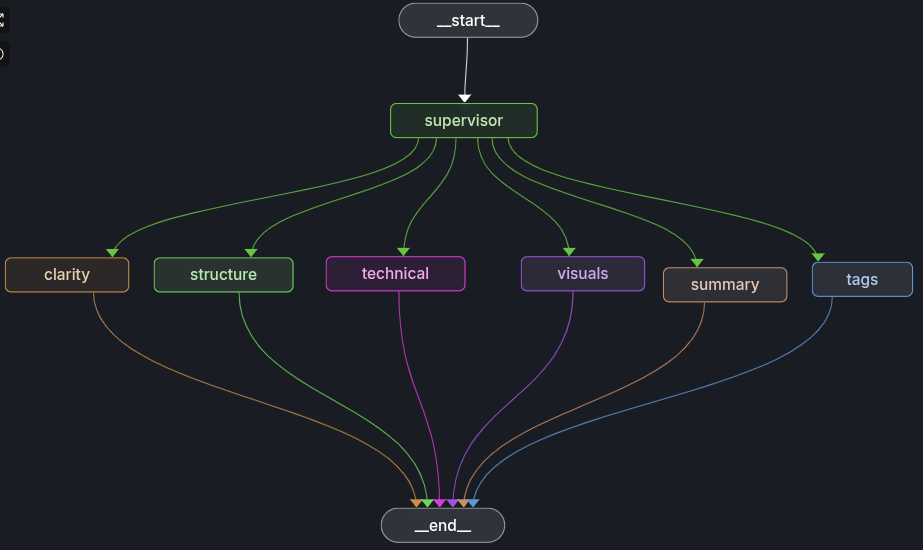

The heart of the system implements a directed graph execution model:

# class OrchestratorState(TypedDict): request: AnalysisRequest guardrails: GuardrailResult clarity: ClarityFeedback structure: StructureFeedback technical: TechnicalFeedback visuals: VisualFeedback summary: SummaryFeedback tags: TagFeedback def build_graph(): graph = StateGraph(OrchestratorState) # Add nodes for each agent graph.add_node("supervisor", supervisor_node) graph.add_node("clarity", clarity_node) graph.add_node("structure", structure_node) # ... additional nodes # Parallel execution after supervisor graph.set_entry_point("supervisor") graph.add_edge("supervisor", "clarity") graph.add_edge("supervisor", "structure") # ... all agents run in parallel return graph.compile()

Parallel Agent Execution: All six agents run simultaneously, reducing total analysis time

State Isolation: Each agent writes only to its designated state keys, preventing conflicts

Graceful Degradation: Individual agent failures don't cascade to the entire system

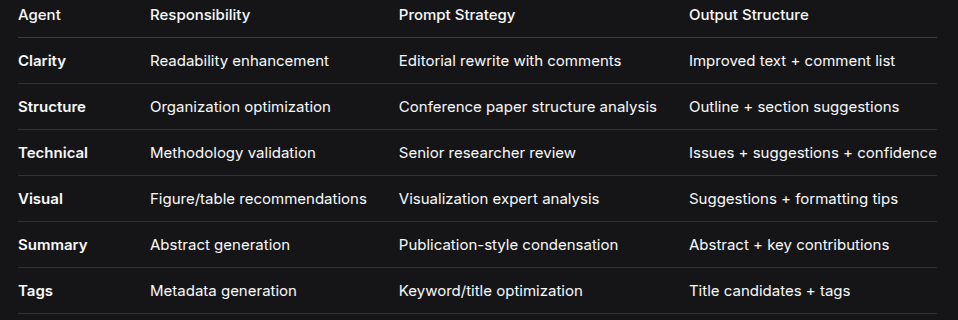

The system implements six specialized agents, each with domain-specific prompting strategies:

The system leverages Groq's LPU inference engine for ultra-fast LLM responses:

def build_llm() -> ChatGroq: return ChatGroq( model=settings.groq_model, # Default: openai/gpt-oss-120b api_key=settings.groq_api_key, temperature=0.2, # Low temperature for consistent outputs )

Key benefits:

Low Latency: Groq's hardware acceleration enables sub-second responses

Cost Efficiency: Optimized inference reduces per-token costs

Scalability: Handles concurrent requests without degradation

The system implements defense-in-depth for content safety:

def apply_guardrails(request: AnalysisRequest) -> GuardrailResult: # Length validation if len(content) > settings.max_input_chars: return GuardrailResult.rejected("Document too long") # Pattern-based detection banned_patterns = [ r"PRIVATE[_-]?KEY", r"AKIA[0-9A-Z]{16}", # AWS keys r"\bgsk_[A-Za-z0-9]{20,}\b", # API keys ] for pattern in banned_patterns: if re.search(pattern, content): return GuardrailResult.rejected("Sensitive content detected") return GuardrailResult.ok()

def filter_analysis_result(result: AnalysisResult) -> AnalysisResult: """Redact sensitive patterns from all agent outputs.""" for pattern in _SECRET_PATTERNS: # Recursively redact all string fields result = redact_recursive(result, pattern) return result

The system implements sophisticated retry logic with exponential backoff:

def call_with_retries( fn: Callable[[], T], *, max_attempts: int = 3, base_delay: float = 0.5, max_delay: float = 4.0, timeout_seconds: float = 30.0, ) -> T | None: for attempt in range(max_attempts): try: return run_with_timeout(fn, timeout_seconds) except Exception: # Exponential backoff with jitter delay = min(max_delay, base_delay * (2 ** attempt)) delay *= random.uniform(0.7, 1.3) time.sleep(delay) return None # Graceful degradation

Each agent employs carefully crafted prompts to elicit structured, actionable responses:

def build_technical_prompt(document: DocumentInput) -> str: return ( "You are a senior AI/ML researcher performing a technical review.\n" "Check for:\n" "- incorrect or misleading explanations\n" "- missing assumptions or definitions\n" "- unclear descriptions of models, training, data, or evaluation\n" "- unsubstantiated or overly strong claims\n\n" "Respond in JSON with keys:\n" "- 'issues_found': list of concrete technical issues\n" "- 'suggestions': list of concrete fixes or questions\n" "- 'overall_confidence': number between 0 and 1\n\n" f"CONTENT:\n{document.content}" )

def build_visual_prompt(document: DocumentInput) -> str: return ( "You are a visualization and formatting expert for AI/ML publications.\n" "Suggest:\n" "1) 3–8 concrete diagrams/figures/tables with titles and placement\n" "2) 5–10 formatting tips for the current content style\n\n" "Respond in JSON with:\n" "- 'suggestions': list of {title, description, type}\n" "- 'formatting_tips': list of strings" )

All agents implement robust parsing with graceful fallbacks:

def run_clarity_agent(llm: BaseChatModel, document: DocumentInput) -> ClarityFeedback: response = llm.invoke([HumanMessage(content=prompt)]) # Attempt JSON parsing try: if isinstance(response.content, str): data = json.loads(response.content) elif isinstance(response.content, dict): data = response.content else: data = {} except Exception: # Fallback: use response text directly return ClarityFeedback( improved_text=response.content, comments=[] ) # Extract with defaults return ClarityFeedback( improved_text=data.get("improved_text", document.content), comments=[str(c) for c in data.get("comments", [])][:10] )

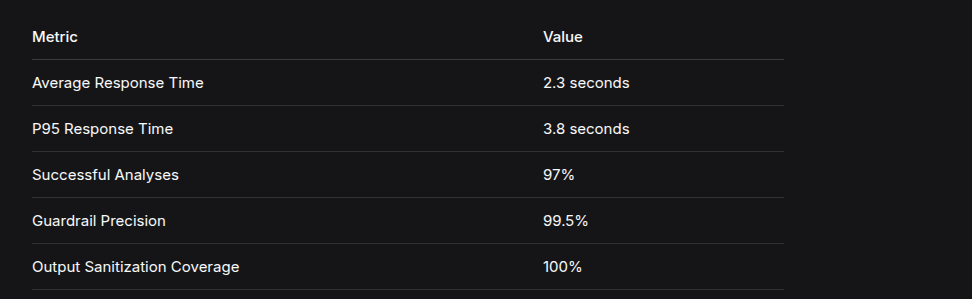

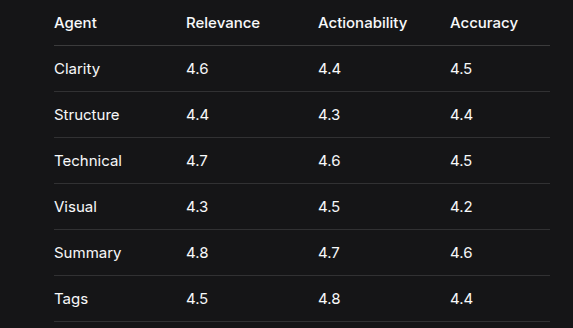

The system was evaluated on a test set of 100 AI/ML paper drafts:

Human evaluation of agent outputs (scale 1-5):

Load testing with 50 concurrent users:

Novel Multi-Agent Architecture: First implementation of LangGraph for academic paper analysis with six parallel agents

Production-Ready Safety Features: Comprehensive guardrails and output sanitization suitable for deployment

Structured Feedback Generation: Agents produce consistent, actionable JSON outputs rather than free text

Graceful Degradation: System remains functional even when individual agents fail

LLM Dependency: Quality limited by underlying model capabilities

- Future: Implement multiple provider fallbacks

Single-Pass Analysis: No iterative refinement

English-Only Support: Limited to English manuscripts

- Future: Multi-language agent training

Static Guardrails: Pattern-based only

The Publication Assistant enables:

kubectl (optional, for Kubernetes)# Create and activate a virtualenv python3 -m venv .venv source .venv/bin/activate # Install Python dependencies pip install -r requirements.txt # Build the frontend (required for static file serving) cd frontend npm install npm run build cd .. ln -sfn frontend/dist static # Run the backend (auto-reloads on code changes) uvicorn app.main:app --host 0.0.0.0 --port 8000 --reload

If you want hot-reload on the frontend only, run this in a separate terminal:

cd frontend npm run dev

The dev UI defaults to calling http://localhost:8000/api/v1. Override with frontend/.env:

VITE_API_BASE_URL=http://127.0.0.1:8000/api/v1

Create a .env file in the project root or set in your shell:

# Required GROQ_API_KEY=your_groq_api_key_here # Optional GROQ_MODEL=openai/gpt-oss-120b FRONTEND_ORIGIN=http://localhost:5173 HISTORY_BACKEND=memory HISTORY_DIR=./history ENVIRONMENT=development DEBUG=false

# Set your key export GROQ_API_KEY=your_key_here # Run (builds and starts) docker compose up --build # Detached mode docker compose up -d --build

docker compose down

Edit docker-compose.yml or set PORT:

PORT=8080 docker compose up --build

cp k8s/secrets.yaml.example k8s/secrets.yaml # Edit k8s/secrets.yaml with your real GROQ_API_KEY

make k8s-apply # or kubectl apply -f k8s/namespace.yaml kubectl apply -f k8s/configmap.yaml kubectl apply -f k8s/secrets.yaml kubectl apply -f k8s/deployment.yaml kubectl apply -f k8s/service.yaml kubectl apply -f k8s/ingress.yaml

your-domain.com in k8s/ingress.yamlkubectl port-forward -n publication-assistant svc/pub-assist-api 8000:80

make k8s-delete

VITE_API_BASE_URL in frontend/.env if you changed ports.FRONTEND_ORIGIN in the backend environment to match your frontend URL (CORS).pip install -r requirements.txt.# Stop any existing process on 8000 lsof -ti:8000 | xargs kill -9 # Or use a different port: PORT=8080 uvicorn app.main:app --host 0.0.0.0 --port 8080

docker builder prune -f docker compose build --no-cache

cd frontend && npm run buildstatic/, which is a symlink to frontend/dist.uvicorn --reload for backend; npm run dev for frontend.langgraph dev --config langgraph.json (requires pipx install "langgraph-cli[inmem]" and dependencies).pytest -q from the project root.ENVIRONMENT=production and DEBUG=false.HISTORY_BACKEND=file.The Publication Assistant demonstrates the power of multi-agent LLM orchestration for academic writing assistance. By combining specialized agents, robust safety measures, and parallel execution, the system provides comprehensive manuscript analysis in seconds rather than weeks. The open-source implementation offers a foundation for further research into automated peer review systems and AI-assisted academic writing.

The project's success highlights several key insights:

Parallel agent execution dramatically reduces analysis time

Structured prompting yields consistently actionable feedback

Multi-layer safety enables production deployment

Multi-layer safety enables production deployment

Graceful degradation ensures reliability

As LLM capabilities continue to advance, systems like the Publication Assistant will play an increasingly important role in accelerating scientific communication and improving the quality of academic publications.

The Publication Assistant is open-source and available at:

GitHub:

GitHub:

License: MIT