"The important thing is not what they think of me, but what I can do for them. Ask me anything about my life, my work, my failures — and I will answer only from what is true."

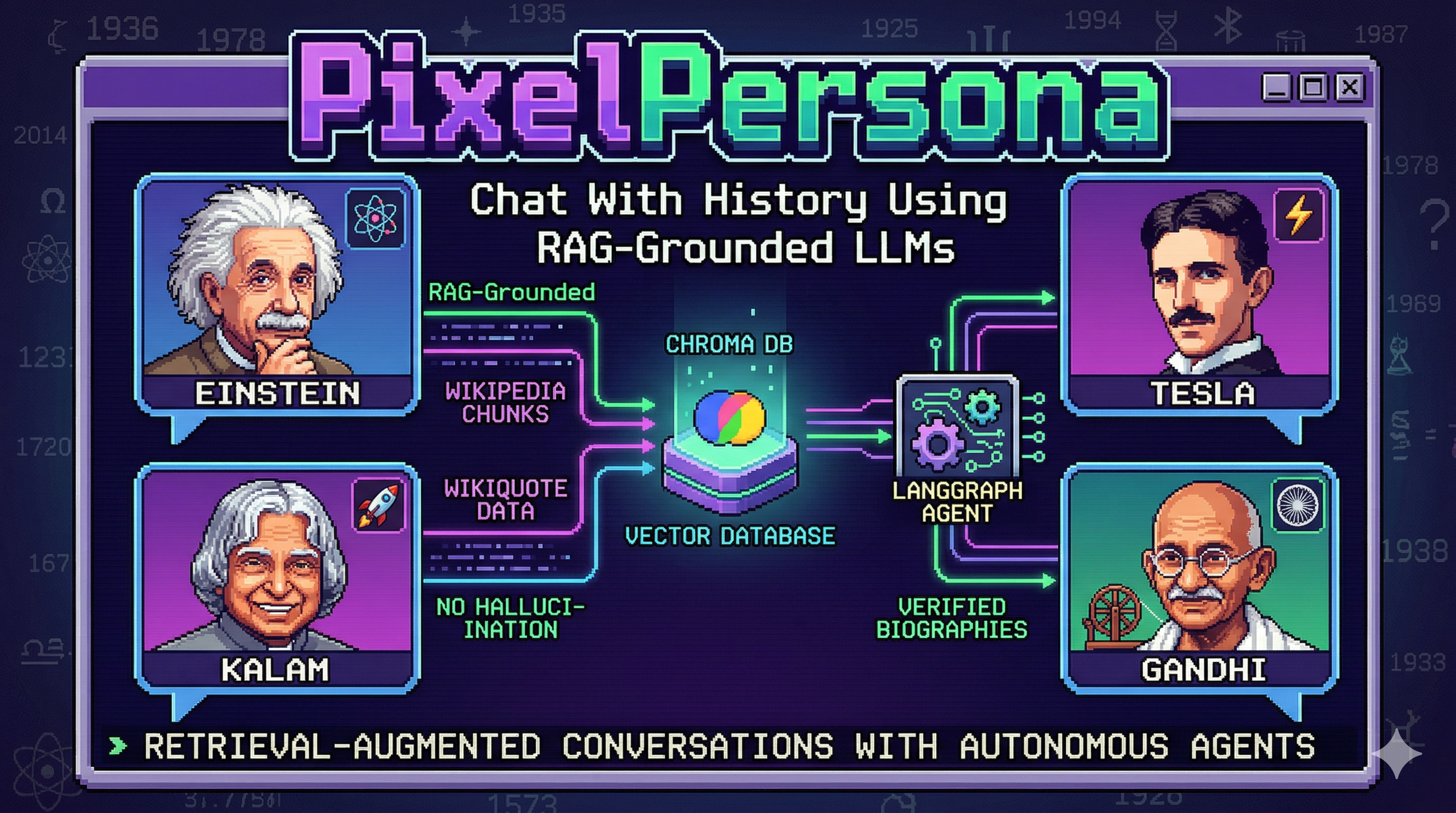

What if an AI could answer like Einstein, but draw every fact about his life from verified biographical sources: Wikipedia articles and characteristic quotes? No invented biography. No improvisation. Just a retrieval-augmented conversation grounded in real data.

PixelPersona is a RAG-powered AI chat system where each persona — Einstein, Nikola Tesla, APJ Abdul Kalam, Mahatma Gandhi — is an autonomous LangGraph agent wired to a dedicated vector database. When you ask Gandhi about his philosophy of nonviolent resistance, the system doesn't guess. It retrieves chunks from Wikipedia articles and Wikiquote quotes, then generates a response grounded in that verified biographical context.


The frontend is a browser-based, pixel-art styled chat interface that connects to the FastAPI backend over HTTP. Users select a persona from a visual grid, type a message, and receive a typewriter-animated response. It is intentionally lightweight: it handles presentation and animation only, delegating all intelligence to the backend. Built with AI assistance, it serves as the playable layer over a serious backend system.

The core challenge is architectural: how do you take publicly scraped data from Wikipedia and Wikiquote, chunk it intelligently, embed it locally, store it in a vector database, and then retrieve only the most relevant context — at query time — before passing it to an LLM that generates a persona-authentic response?

The pipeline runs in five stages:

```mermaid
graph LR
    %% Stage grouping
    subgraph S1["Stage 1: Scraping"]
        A["Wikipedia Scraper"]
        B["Wikiquote Scraper"]
    end
    subgraph S2["Stage 2: Validation"]
        C["Data Validator"]
    end
    subgraph S3["Stage 3: Chunking & Embedding"]
        D["Chunker"]
        E["Embedder"]
    end
    subgraph S4["Stage 4: Storage"]
        F[("Chroma DB\nPer-Persona Collections")]
    end
    subgraph S5["Stage 5: Retrieval"]
        G["User Query"]
        H["Query Rephraser"]
        I["Retriever"]
    end

    %% Ingestion flow
    A --> C
    B --> C
    C --> D
    D --> E
    E --> F

    %% Retrieval flow
    G --> H
    H --> I
    I -->|search| F
    F -->|top-k chunks| I

    %% Styling
    classDef stage1 fill:#e3f2fd,stroke:#1e88e5,color:#0d47a1
    classDef stage2 fill:#fce4ec,stroke:#d81b60,color:#880e4f
    classDef stage3 fill:#ede7f6,stroke:#5e35b1,color:#311b92
    classDef stage4 fill:#fff3e0,stroke:#ef6c00,color:#e65100
    classDef stage5 fill:#e8f5e9,stroke:#43a047,color:#1b5e20
    class A,B stage1
    class C stage2
    class D,E stage3
    class F stage4
    class G,H,I stage5
```

Each stage has independent logic and its own failure modes. Here's how every piece fits together.

Two scrapers, one per data source:

Wikipedia scraper (wikipedia_scraper.py). It uses the wikipediaapi library to fetch full article content with the section hierarchy preserved. Each section is tagged with its source type and URL.

```
WikipediaScraper.scrape_persona(persona_name: str) -> List[Document]
```

The scraper walks the article section by section. Why? Because chunks later need to know not just what the content is, but where it came from. The source_url and section metadata fields are set at scrape time and travel through the whole pipeline to the retrieval result.
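The section walk can be sketched as a recursive traversal that records where each piece of text came from. This is a minimal sketch, not the project's scraper: it assumes section objects expose title, text, and nested sections (as wikipediaapi's section objects do), uses a stand-in Document dataclass instead of LangChain's so it runs offline, and walk_sections plus the "Parent > Child" section path format are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Iterable, List


@dataclass
class Document:
    """Stand-in for LangChain's Document (page_content + metadata)."""
    page_content: str
    metadata: Dict[str, Any] = field(default_factory=dict)


def walk_sections(sections: Iterable[Any], source_url: str, parent: str = "") -> List[Document]:
    """Recursively turn article sections into Documents, keeping the section path as metadata."""
    docs: List[Document] = []
    for section in sections:
        # Breadcrumb such as "Early life > Education" keeps nested sections traceable.
        path = f"{parent} > {section.title}" if parent else section.title
        if section.text.strip():  # skip headings that carry no text of their own
            docs.append(Document(
                page_content=section.text,
                metadata={"source_type": "wikipedia", "source_url": source_url, "section": path},
            ))
        # wikipediaapi exposes subsections as section.sections
        docs.extend(walk_sections(section.sections, source_url, path))
    return docs


# With the real library (needs network access), the entry point might look like:
#   import wikipediaapi
#   wiki = wikipediaapi.Wikipedia(user_agent="your-app (your-contact)", language="en")  # placeholder UA
#   page = wiki.page("Albert_Einstein")
#   docs = walk_sections(page.sections, page.fullurl)
```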

Wikiquote scraper (wikiquote_scraper.py). It uses the MediaWiki API on Wikiquote (action=parse&section=1) to extract only the Quotes section, avoiding overlap with Wikipedia content. A person can have thousands of quotes on Wikiquote, so this scraper also handles pagination.
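Two details are easy to get wrong here: building the action=parse&section=1 request, and keeping only the quotes themselves rather than the sourcing notes Wikiquote nests under each quote. A minimal stdlib-only sketch under those assumptions: build_quotes_url, TopLevelQuoteParser, extract_quotes, and the "outermost list items are quotes" heuristic are hypothetical, and pagination and the HTTP call are left out. Section numbers can differ between pages, so looking up the Quotes heading first (action=parse with prop=sections) is safer than hardcoding 1.

```python
from html.parser import HTMLParser
from typing import List
from urllib.parse import urlencode

API_URL = "https://en.wikiquote.org/w/api.php"


def build_quotes_url(page_title: str, section: int = 1) -> str:
    """Build a MediaWiki action=parse request for one section of a Wikiquote page."""
    params = {
        "action": "parse",
        "page": page_title,
        "section": section,   # assumption: section 1 is the "Quotes" section
        "prop": "text",
        "format": "json",
        "formatversion": 2,   # makes response["parse"]["text"] a plain HTML string
    }
    return f"{API_URL}?{urlencode(params)}"


class TopLevelQuoteParser(HTMLParser):
    """Collect text from <li> items of the outermost list; nested lists (sourcing notes) are skipped."""

    def __init__(self) -> None:
        super().__init__()
        self.ul_depth = 0
        self.in_quote = False
        self.parts: List[str] = []
        self.quotes: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "ul":
            self.ul_depth += 1
        elif tag == "li" and self.ul_depth == 1:
            self.in_quote, self.parts = True, []

    def handle_endtag(self, tag):
        if tag == "ul":
            self.ul_depth -= 1
        elif tag == "li" and self.ul_depth == 1 and self.in_quote:
            text = " ".join("".join(self.parts).split())  # collapse whitespace
            if text:
                self.quotes.append(text)
            self.in_quote = False

    def handle_data(self, data):
        # Only text at the top list level belongs to the quote itself.
        if self.in_quote and self.ul_depth == 1:
            self.parts.append(data)


def extract_quotes(html: str) -> List[str]:
    parser = TopLevelQuoteParser()
    parser.feed(html)
    parser.close()
    return parser.quotes
```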

```
WikiquoteScraper.scrape_quotes(persona_name: str) -> List[Document]
```

A deliberate design decision: Wikiquote content is kept as a separate set of chunks from Wikipedia content. At retrieval time both sources are available, so responses can draw on biographical facts (Wikipedia) and characteristic phrasing (Wikiquote quotes).

Before any content enters the vector database, it passes through DataValidator (processing/validator.py):

```
DataValidator.validate_content(content: str) -> None
```

It raises ValidationError if:

- the content has fewer than 10 words, or
- more than 50% of its characters are non-printable.

This is intentionally lightweight. We're not validating factual accuracy (we trust Wikipedia and Wikiquote as sources); we're filtering scrape artifacts: HTML entities that survived decoding, empty pages, binary noise. The ValidationError propagates up to the ingestion script, which logs and skips the problematic document.
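The two rules and the log-and-skip behaviour fit in a few lines of standard-library Python. This is a minimal sketch, assuming "non-printable" means str.isprintable() is False and the character is not whitespace (otherwise every newline would count against a document); ValidationError here is a local class, and MIN_WORDS, MAX_NON_PRINTABLE_RATIO, and filter_valid are hypothetical names. The project works on Document objects, while this sketch validates plain strings.

```python
import logging
from typing import Iterable, List

logger = logging.getLogger("ingest")

MIN_WORDS = 10
MAX_NON_PRINTABLE_RATIO = 0.5


class ValidationError(ValueError):
    """Raised when scraped text looks like a scrape artifact rather than prose."""


def validate_content(content: str) -> None:
    """Raise ValidationError for too-short or mostly non-printable content."""
    word_count = len(content.split())
    if word_count < MIN_WORDS:
        raise ValidationError(f"only {word_count} words (minimum {MIN_WORDS})")
    # str.isprintable() is False for "\n" and "\t", so whitespace is excluded from the count.
    non_printable = sum(1 for ch in content if not ch.isprintable() and not ch.isspace())
    ratio = non_printable / len(content)  # content is non-empty here (it has >= 10 words)
    if ratio > MAX_NON_PRINTABLE_RATIO:
        raise ValidationError(f"{ratio:.0%} non-printable characters")


def filter_valid(contents: Iterable[str]) -> List[str]:
    """Mirror the ingestion script's behaviour: log and skip invalid documents."""
    kept: List[str] = []
    for content in contents:
        try:
            validate_content(content)
        except ValidationError as exc:
            logger.warning("Skipping document: %s", exc)
            continue
        kept.append(content)
    return kept
```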

Chunker (processing/chunker.py). PersonaChunker wraps LangChain's RecursiveCharacterTextSplitter with a custom separator list:

```python
separators = ["\n\n", "\n", ". ", " ", ""]
```

This means: try to split on double newlines first (paragraph boundaries), then single newlines, then sentence boundaries, then words, and finally characters if nothing else worked. The goal is chunks that represent coherent ideas — not arbitrary 3000-character cuts.
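The fallback order is easier to see in code. The following is a simplified, dependency-free sketch of recursive character splitting, not LangChain's implementation: recursive_split is a hypothetical helper, it ignores chunk overlap (the config's CHUNK_OVERLAP of 300 characters), and it drops the separator wherever a chunk boundary falls.

```python
from typing import List, Sequence

SEPARATORS = ["\n\n", "\n", ". ", " ", ""]


def recursive_split(text: str, chunk_size: int, separators: Sequence[str] = SEPARATORS) -> List[str]:
    """Split text into pieces of at most chunk_size characters, preferring coarse separators."""
    if len(text) <= chunk_size:
        return [text] if text else []
    # Last resort (empty separator or none left): hard cut every chunk_size characters.
    if not separators or separators[0] == "":
        return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]
    sep, finer = separators[0], separators[1:]
    if sep not in text:
        return recursive_split(text, chunk_size, finer)

    chunks: List[str] = []
    current = ""
    for piece in text.split(sep):
        candidate = f"{current}{sep}{piece}" if current else piece
        if len(candidate) <= chunk_size:
            current = candidate  # keep merging neighbouring pieces while they fit
            continue
        if current:
            chunks.append(current)
        if len(piece) <= chunk_size:
            current = piece
        else:
            # This piece alone is too big: retry it with the next, finer separator.
            chunks.extend(recursive_split(piece, chunk_size, finer))
            current = ""
    if current:
        chunks.append(current)
    return chunks
```

Paragraphs that fit are kept whole; only an oversized paragraph is broken at sentences, then words, then characters.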

The chunk size is 3000 characters (configured in config.py as CHUNK_SIZE). An average English word is about 5 characters plus a trailing space, so a full chunk holds roughly 3000 / 6 ≈ 500 words; chunks that end early at a paragraph or sentence boundary hold fewer. This keeps chunks within the project specification.

```
PersonaChunker.chunk_documents(documents, metadata_updates) -> List[Document]
```

Each chunk inherits the parent document's metadata and is tagged with the persona name. This is critical: it ensures that at retrieval time, we can unambiguously attribute every chunk to a specific persona.
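Metadata inheritance amounts to a copy-and-merge per chunk. A minimal sketch, assuming a stand-in Document dataclass (LangChain's has the same page_content/metadata fields) and any text-splitting callable: the extra split parameter and the chunk_index field are hypothetical additions, not part of the signature quoted above.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class Document:
    """Stand-in for LangChain's Document."""
    page_content: str
    metadata: Dict[str, Any] = field(default_factory=dict)


def chunk_documents(
    documents: List[Document],
    metadata_updates: Dict[str, Any],
    split: Callable[[str], List[str]],
) -> List[Document]:
    """Split each document and copy its metadata (plus updates such as the persona) onto every chunk."""
    chunks: List[Document] = []
    for doc in documents:
        for i, text in enumerate(split(doc.page_content)):
            # A fresh dict per chunk, so chunks never share (and accidentally mutate) one metadata object.
            metadata = {**doc.metadata, **metadata_updates, "chunk_index": i}
            chunks.append(Document(page_content=text, metadata=metadata))
    return chunks
```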

Embedder (processing/embedder.py). LocalEmbedder loads the BAAI/bge-small-en-v1.5 model, a sentence transformer of roughly 33M parameters that produces 384-dimensional embeddings. The key configuration:

```python
encode_kwargs = {"normalize_embeddings": True}
```

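This L2-normalizes every embedding, so the dot product of two embeddings equals their cosine similarity. Chroma's default distance metric is actually squared L2, not cosine, but for unit vectors the two are interchangeable: squared L2 distance = 2 - 2 × cosine similarity, so ranking by either returns the same nearest neighbours. No custom distance function is needed.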
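The identities behind this choice are easy to check numerically. The sketch below uses only the standard library and two arbitrary example vectors (chosen purely for illustration): after normalization the dot product equals cosine similarity, and squared L2 distance equals 2 - 2 × cosine, so a cosine index and an L2 index return the same neighbours in the same order.

```python
import math
from typing import List


def normalize(v: List[float]) -> List[float]:
    """Scale a vector to unit L2 norm (what normalize_embeddings=True does to each embedding)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def dot(a: List[float], b: List[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def cosine(a: List[float], b: List[float]) -> float:
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


def squared_l2(a: List[float], b: List[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


a, b = [3.0, 4.0, 0.0], [1.0, 2.0, 2.0]
ua, ub = normalize(a), normalize(b)

# For unit vectors the dot product is the cosine similarity...
print(round(dot(ua, ub), 6), round(cosine(a, b), 6))
# ...and squared L2 distance is a monotone function of it: 2 - 2 * cosine.
print(round(squared_l2(ua, ub), 6), round(2 - 2 * cosine(a, b), 6))
```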

The embedder is consumed by Chroma's from_documents() factory:

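```python
from langchain_chroma import Chroma

# One persisted Chroma store per persona; LocalEmbedder is the project's embedding wrapper.
vector_store = Chroma.from_documents(
    documents=chunks,
    embedding=LocalEmbedder(),
    persist_directory=f"./chroma_data/{collection_name}",
)
```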

ChromaCollectionManager (storage/chroma_client.py) implements a lazy-loading factory pattern. When you first request a persona's store:

```python
manager.get_store("Albert_Einstein")
```

...it checks the _stores cache. If empty, it creates a new langchain_chroma.Chroma instance persisted at chroma_data/Albert_Einstein/. Subsequent requests for the same persona return the cached instance — no redundant disk reads.
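The lazy-loading pattern reduces to a dictionary keyed by persona. This is a minimal sketch, not ChromaCollectionManager itself: LazyStoreManager and its injected factory are hypothetical, the factory stands in for opening a persisted Chroma store, and the cache is not guarded by a lock, so two concurrent first requests could open the same store twice.

```python
from typing import Any, Callable, Dict


class LazyStoreManager:
    """Create one vector store per persona on first request, then serve it from a cache."""

    def __init__(self, factory: Callable[[str], Any]) -> None:
        self._factory = factory            # builds a store for a persona name
        self._stores: Dict[str, Any] = {}  # persona name -> opened store

    def get_store(self, persona: str) -> Any:
        if persona not in self._stores:    # cache miss: open the persona's store once
            self._stores[persona] = self._factory(persona)
        return self._stores[persona]


# With langchain_chroma, the factory might be (not executed here):
#   lambda p: Chroma(persist_directory=f"./chroma_data/{p}", embedding_function=LocalEmbedder())
```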

Design decision: separate Chroma collections per persona (not metadata filtering on a single collection). The rationale:

The trade-off is operational complexity at scale (hundreds of personas), but for V1 with four personas, this is the right call.

Query rephraser (retrieval/rephraser.py). Before a user query hits the vector store, it passes through QueryRephraser.rephrase():

Original: "What did Einstein do?"

Rephrased: "What did Einstein contribute to physics?"

The rephraser uses llama-3.1-8b-instant via GroqCloud, a lightweight, fast model. Temperature is set low (0.3) for fairly consistent rewrites, with a 100-token cap. The system prompt is explicit: return only the rephrased query, nothing else. This matters because the rephraser's output must never leak into the persona's reply; it silently transforms the query before embedding.
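A thin wrapper around the model call is where the "return only the rephrased query" rule can be enforced defensively. This is a sketch under stated assumptions: the prompt wording, the rephrase helper, the quote-stripping, and the fall-back-to-original rule are all hypothetical, and llm is any callable taking (system_prompt, user_query) and returning text, so the example runs without a Groq key.

```python
from typing import Callable

# Hypothetical prompt; the article only says it demands the rephrased query and nothing else.
SYSTEM_PROMPT = (
    "Rewrite the user's question about {persona} so it is specific and searchable "
    "against biographical text. Return only the rewritten question, nothing else."
)

LLM = Callable[[str, str], str]  # (system_prompt, user_query) -> completion text


def rephrase(query: str, persona: str, llm: LLM, max_chars: int = 300) -> str:
    """Rephrase a query for retrieval, falling back to the original on empty or runaway output."""
    raw = llm(SYSTEM_PROMPT.format(persona=persona), query)
    cleaned = raw.strip().strip('"').strip()  # models sometimes wrap the answer in quotes
    if not cleaned or len(cleaned) > max_chars:
        return query  # a bad rephrase should never block retrieval
    return cleaned


# Wiring it to GroqCloud via langchain_groq might look like (not executed here):
#   from langchain_groq import ChatGroq
#   model = ChatGroq(model="llama-3.1-8b-instant", temperature=0.3, max_tokens=100)
#   llm = lambda system, user: model.invoke([("system", system), ("human", user)]).content
```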

Why rephrase? A user asking "What was Einstein's biggest mistake?" uses different words from the source text, which might describe the same thing as "Einstein's conceptual error" or "Einstein wrong about cosmology." Rephrasing the query bridges this vocabulary gap.

Retriever (retrieval/retriever.py). The retrieve() method orchestrates the full retrieval pipeline:

```python
from typing import Any, Dict, List


async def retrieve(persona_name: str, query: str, top_k: int = 5) -> List[Dict[str, Any]]:
    ...  # returns [{"content": ..., "metadata": ...}]
```

The steps:

1. Rephrase the query (QueryRephraser).
2. Get the persona's vector store (ChromaCollectionManager).
3. Run similarity_search with the rephrased query.

The method is async, but the underlying Chroma similarity_search call is synchronous. This is a deliberate design constraint: the embedded Chroma client used here has no native async interface, so the search is called synchronously inside the async method. The retrieval pipeline still sits behind an async interface, so the async retrieve_context tool can await it directly and a truly async vector store can later drop in without changes.
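Calling a synchronous similarity_search directly inside an async method blocks the event loop for the duration of the search. One common mitigation, not something the article says it uses, is to offload the call with asyncio.to_thread; LangChain's base VectorStore.asimilarity_search does something similar by default. The sketch assumes a store object with a similarity_search(query, k=...) method; BlockingStore and Doc are hypothetical stand-ins for Chroma and LangChain's Document, and the rephrasing step is omitted.

```python
import asyncio
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class Doc:
    """Minimal stand-in for a LangChain Document returned by similarity_search."""
    page_content: str
    metadata: Dict[str, Any] = field(default_factory=dict)


class BlockingStore:
    """Stand-in for a synchronous vector store such as an embedded Chroma collection."""

    def similarity_search(self, query: str, k: int = 4) -> List[Doc]:
        return [Doc(f"chunk {i} about {query}", {"rank": i}) for i in range(k)]


async def retrieve(store: Any, query: str, top_k: int = 5) -> List[Dict[str, Any]]:
    """Run the blocking search on a worker thread so the event loop can serve other requests."""
    docs = await asyncio.to_thread(store.similarity_search, query, k=top_k)
    # Same shape as the article's return value: [{"content": ..., "metadata": ...}]
    return [{"content": d.page_content, "metadata": d.metadata} for d in docs]
```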

PersonaAgent (agents/persona_agent.py) is the core orchestration layer. Each persona gets its own LangGraph ReAct agent:

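```python
from langchain.agents import create_agent
from langchain_groq import ChatGroq
from langgraph.checkpoint.memory import InMemorySaver

agent = create_agent(
    model=ChatGroq(
        model="openai/gpt-oss-20b",  # served via GroqCloud
        temperature=0.7,
        max_tokens=500,
    ),
    tools=[retrieve_context],
    checkpointer=InMemorySaver(),
)
```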

The checkpointer is InMemorySaver — no persistence of conversation state per V1 scope. The agent is lazy-initialized: it doesn't load into memory until the persona's first chat request.

The retrieve_context tool. This is the single tool the agent has access to:

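```python
from langchain_core.tools import tool


@tool(response_format="content")
async def retrieve_context(query: str) -> str:
    """Retrieves biographical context about the current persona."""
    # Body not shown in the original; it awaits self.retriever.retrieve(...) directly.
```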

The tool is async. This was a deliberate fix: an earlier implementation used a synchronous wrapper that called asyncio.run() on every tool invocation, creating a new event loop on each RAG call — expensive and wrong. The tool is now async def and called with await self.retriever.retrieve(...) directly, with no event loop overhead.
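The difference between the two tool styles can be shown with a stand-in coroutine. In this sketch (fetch_context, sync_tool_wrapper, async_tool, and demo are hypothetical names), the old-style wrapper builds and tears down a fresh event loop on every call, and asyncio.run refuses outright when invoked on a thread whose loop is already running; the async version simply awaits on the caller's loop.

```python
import asyncio
from typing import Tuple


async def fetch_context(query: str) -> str:
    """Stand-in for an awaitable retriever call."""
    await asyncio.sleep(0)
    return f"context for {query}"


def sync_tool_wrapper(query: str) -> str:
    """Earlier pattern: create and close a brand-new event loop on every tool call."""
    return asyncio.run(fetch_context(query))


async def async_tool(query: str) -> str:
    """Current pattern: await directly on the caller's event loop."""
    return await fetch_context(query)


async def demo() -> Tuple[str, str]:
    """Run the async tool, then try the sync wrapper from inside the running loop."""
    good = await async_tool("relativity")
    try:
        sync_tool_wrapper("relativity")
        error = ""
    except RuntimeError as exc:  # asyncio.run() will not start a loop inside a running one
        error = str(exc)
    return good, error
```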

A critical commit in the project's history: "fix: only call RAG for personal questions, answer generic questions directly."

The system prompt explicitly instructs the agent:

CRITICAL - When to use the retrieval tool:

- Use retrieve_context ONLY for personal/biographical questions

- For ALL OTHER questions - respond directly without retrieval

Ask Einstein "Explain quantum entanglement" → direct LLM answer (general physics knowledge, no retrieval needed).

Ask Einstein "What was your relationship with your son like?" → retrieval call → context from biographical sources → grounded response.

This is a meaningful design decision. RAG is expensive (latency + API calls). Using it only for biographical queries is both technically correct (we want grounded responses about a persona's life, not general knowledge) and architecturally sound (avoids polluting general answers with possibly irrelevant context).

LangChain's SummarizationMiddleware (from langchain.agents.middleware) is attached to the agent:

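```python
from langchain.agents.middleware import SummarizationMiddleware
from langchain_groq import ChatGroq

summarizer = SummarizationMiddleware(
    model=ChatGroq(model="llama-3.1-8b-instant"),  # remaining settings elided in the original
    trigger=("tokens", 2500),  # summarize once the context exceeds 2500 tokens
    keep=("messages", 10),     # keep the 10 most recent messages verbatim
)
```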

When a conversation grows beyond ~2500 tokens in context, LangGraph automatically summarizes the oldest messages into a compact digest. This prevents the context window from exploding during long conversations — a real problem for V1's in-memory checkpointer. The agent's response is also cleaned: a regex strips any "PersonaName:" or "PersonaName " prefix that the LLM sometimes prepends to its output.
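The prefix cleanup can be a single anchored regex. This is a minimal sketch, assuming the persona's display name is known at call time; strip_persona_prefix is a hypothetical helper, and the word boundary keeps it from cutting into words that merely start with the persona's name. One caveat: a reply that legitimately opens with the persona's full name followed by a space would also be trimmed.

```python
import re


def strip_persona_prefix(text: str, persona_name: str) -> str:
    """Remove a leading "Name:" or "Name " that the LLM sometimes prepends to its reply."""
    # ^ anchors to the start; \b stops "Albert Einstein" from matching "Albert Einsteinium".
    pattern = rf"^\s*{re.escape(persona_name)}\b\s*:?\s*"
    return re.sub(pattern, "", text, count=1)
```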

routes.py exposes three endpoints:

| Endpoint | Method | Description |
|---|---|---|
| /health | GET | {"status": "ok"} |
| /personas | GET | {"personas": {...}} |
| /chat | POST | ChatRequest → ChatResponse |

No streaming endpoint. The non-streaming /chat endpoint was a deliberate architectural choice. SSE streaming was attempted in earlier iterations but produced buffered, chunked HTTP responses rather than true token-by-token streaming — defeating the purpose. The final design uses a non-streaming POST, and the frontend handles its own character-by-character typewriter animation on the complete response.

CORS is handled by a single CORSMiddleware instance (a prior duplicate custom middleware was removed — the built-in middleware handles everything including OPTIONS preflight correctly).

```python
from pydantic import BaseModel


class ChatResponse(BaseModel):
    persona_name: str
    response: str
```

No streaming, no tokens, no metadata. Minimal surface area.

models/persona.py defines the core domain model:

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class SourceType(Enum):
    WIKIPEDIA = "wikipedia"
    WIKIQUOTE = "wikiquote"


@dataclass
class Persona:
    name: str
    description: str


@dataclass
class PersonaChunk:
    content: str
    source_type: SourceType
    source_url: str
    section: str
    persona: str
    metadata: Optional[dict] = None
```

The V1 personas are defined in a registry:

```python
AVAILABLE_PERSONAS = {
    "Albert Einstein": "German-born theoretical physicist and philosopher of science",
    "Nikola Tesla": "Inventor and electrical engineer known for AC power systems",
    "APJ Abdul Kalam": "Aerospace scientist and 11th President of India",
    "Mahatma Gandhi": "Leader of Indian independence movement and philosopher",
}
```

Adding a new persona means: (1) add them to the registry, (2) run the ingestion script, (3) done. No code changes outside the data layer.

config.py uses python-dotenv to pull settings from a .env file:

| Setting | Value |
|---|---|
| EMBEDDING_MODEL | BAAI/bge-small-en-v1.5 |
| CHUNK_SIZE | 3000 (characters) |
| CHUNK_OVERLAP | 300 (characters) |
| TOP_K_CHUNKS | 5 |
| GPT_OSS_MODEL | openai/gpt-oss-20b |
| REPHRASER_MODEL | llama-3.1-8b-instant |
| CHROMA_PERSIST_DIR | ./chroma_data |

No hardcoded values. API keys come from environment variables. The one remaining gap: GROQ_API_KEY is not validated at startup — a missing key surfaces as a cryptic LangChain error at request time rather than during application boot.
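Closing the GROQ_API_KEY gap mostly means reading configuration once at startup and failing loudly. A minimal sketch, assuming settings are read from environment variables (which python-dotenv's load_dotenv() populates from .env) under the same names as the table; Settings, ConfigError, and load_settings are hypothetical names, and only a few settings are shown.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


class ConfigError(RuntimeError):
    """Raised at startup when required configuration is missing."""


@dataclass(frozen=True)
class Settings:
    groq_api_key: str
    chunk_size: int = 3000
    chunk_overlap: int = 300
    top_k_chunks: int = 5


def load_settings(env: Optional[Mapping[str, str]] = None) -> Settings:
    """Read settings once (after load_dotenv()) and fail fast if the API key is missing."""
    env = os.environ if env is None else env
    key = env.get("GROQ_API_KEY", "").strip()
    if not key:
        raise ConfigError("GROQ_API_KEY is not set; add it to .env or the environment")
    return Settings(
        groq_api_key=key,
        chunk_size=int(env.get("CHUNK_SIZE", 3000)),
        chunk_overlap=int(env.get("CHUNK_OVERLAP", 300)),
        top_k_chunks=int(env.get("TOP_K_CHUNKS", 5)),
    )
```

Calling load_settings() while the FastAPI app starts turns a missing key into an immediate, readable error instead of a LangChain failure on the first /chat request.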

scripts/ingest_persona.py is the CLI entry point for the full pipeline:

```bash
python scripts/ingest_persona.py --persona "Albert Einstein"
```

The script loads raw scraped data from data/raw/{persona}/, runs it through the full pipeline (validate → chunk → embed → store), and exits. No daemon, no service: a stateless script that populates Chroma.
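The CLI shell of such a script can be sketched with argparse and pathlib. This sketch only covers argument parsing and loading the raw JSON files; the --raw-dir flag, the one-folder-per-persona-name layout, the JSON contents, and the function names are assumptions, and the validate, chunk, embed, and store stages are left as a comment.

```python
import argparse
import json
from pathlib import Path
from typing import Any, List, Optional


def parse_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    parser = argparse.ArgumentParser(description="Ingest one persona's scraped data into Chroma.")
    parser.add_argument("--persona", required=True, help='e.g. "Albert Einstein"')
    parser.add_argument("--raw-dir", default="data/raw", help="root folder of scraped JSON files")
    return parser.parse_args(argv)


def load_raw_records(raw_root: Path, persona: str) -> List[Any]:
    """Read every JSON file under <raw_root>/<persona>/ in a stable (sorted) order."""
    persona_dir = raw_root / persona
    if not persona_dir.is_dir():
        raise FileNotFoundError(f"no scraped data at {persona_dir}")
    return [json.loads(p.read_text(encoding="utf-8")) for p in sorted(persona_dir.glob("*.json"))]


def main(argv: Optional[List[str]] = None) -> int:
    args = parse_args(argv)
    records = load_raw_records(Path(args.raw_dir), args.persona)
    # validate -> chunk -> embed -> store would run here; omitted in this sketch.
    print(f"Loaded {len(records)} raw files for {args.persona}")
    return 0

# Script entry point: raise SystemExit(main())
```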

The data/raw/ directory contains JSON files from both the Wikipedia scraper and Wikiquote scraper. For V1, this is the complete dataset.

```mermaid
graph LR
    %% Layout groups
    subgraph CLIENT["Client Layer"]
        A["Browser"]
    end
    subgraph BACKEND["Application Layer"]
        B["FastAPI API"]
        C["Persona Agent\nLangGraph + LLM"]
    end
    subgraph RAG["RAG Pipeline"]
        D["Retriever"]
        E[("Chroma DB")]
    end

    %% Flow
    A -->|POST /chat| B
    B --> C
    C -->|fetch context| D
    D -->|search| E
    E -->|top-k results| D
    D --> C
    C --> B
    B --> A

    %% Styling
    classDef client fill:#e3f2fd,stroke:#1e88e5,color:#0d47a1
    classDef backend fill:#f3e5f5,stroke:#8e24aa,color:#4a148c
    classDef rag fill:#e8f5e9,stroke:#43a047,color:#1b5e20
    class A client
    class B,C backend
    class D,E rag
```

| Operation | Latency |
|---|---|
| Embedding (per chunk) | ~0.3s (CPU, BAAI/bge-small-en-v1.5) |
| Retrieval (top-5) | ~50–150ms (Chroma, cosine similarity) |
| LLM call | ~800ms–1.5s via GroqCloud (openai/gpt-oss-20b) |
| Total E2E | ~1.2–2s from query to first character |

The system is not optimized for latency; it's optimized for correctness. The rephraser and the summarization middleware each trade some latency for better context quality, while selective RAG wins some latency back by skipping retrieval for general questions.

Most AI chat projects connect an LLM directly to a frontend. PixelPersona is different because:

Retrieval is a first-class citizen. Most projects bolt on a vector DB as an afterthought. Here, the retrieval pipeline — rephraser, top-k tuning, persona-specific collections — gets as much architectural attention as the agent itself.

The agent knows when NOT to retrieve. Selective RAG (only for biographical questions) is a design pattern most projects skip. It's the difference between a smart system and one that blindly stuffs context into every prompt.

Local embeddings, no API dependency for embedding or search. BAAI/bge-small-en-v1.5 runs entirely on CPU, so embedding the query and searching Chroma cost nothing per query in API credits; only the LLM calls (the rephraser and the persona agent) do.

Challenge: Chroma SDK is synchronous by default. The async wrapper around Chroma is a thin shim; it doesn't actually make Chroma async. A future migration to a truly async vector store (Qdrant, Milvus with async clients) would eliminate this abstraction leak.

Challenge: In-memory checkpointer doesn't survive restarts. Conversation history is lost on server restart. V2 should add a proper persistence layer (SQLite or Redis) for conversation threads.

Trade-off: Local embedding model quality vs. speed. BAAI/bge-small-en-v1.5 is small (about 33M params) and fast, but semantic retrieval quality is bounded by its capacity. For niche historical queries (a specific quote from a lesser-known Tesla letter), a larger embedding model may significantly improve retrieval accuracy at the cost of embedding latency.

Conversation memory vectors — Store prior exchange embeddings so the agent can reference what was discussed earlier in a session.

Multi-persona interactions — What happens when you put Einstein and Tesla in the same conversation? A multi-agent orchestration layer with persona-to-persona retrieval.

Larger embedding model — Upgrade to bge-base or bge-large for improved retrieval accuracy on specialized queries.

Hybrid retrieval — Combine dense embeddings (semantic similarity) with BM25 sparse retrieval (keyword matching) for queries that rely on specific terminology.
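The article does not specify how dense and BM25 results would be merged. Reciprocal rank fusion (RRF) is one common choice because it needs only ranks, not comparable scores: each chunk scores the sum of 1 / (k + rank) over the lists it appears in, with k = 60 as the conventional constant. A minimal sketch, assuming each retriever returns chunk IDs in rank order; reciprocal_rank_fusion is a hypothetical helper.

```python
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(rankings: Sequence[Sequence[str]], k: int = 60) -> List[str]:
    """Merge ranked lists of chunk IDs: score(d) = sum over lists of 1 / (k + rank of d)."""
    scores: Dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so the output is deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))
```

A chunk that appears near the top of both lists outranks one that tops only a single list, which is the behaviour wanted when a query mixes semantic intent with exact terminology.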

PixelPersona is a portfolio project demonstrating real-world GenAI engineering: RAG pipeline design, LangGraph agent orchestration, local embedding models, and FastAPI-backed AI product development. Every component is built to be understood, not just to work.