PedalBot v2: From RAG Assistant to Agentic AI for Guitarists

Tags: agentic-ai · langgraph · RAG · guitar-effect-pedals · deployment · chatbot · multi-agent

Project Description

In v1, PedalBot was a Retrieval-Augmented Generation (RAG) assistant that could answer questions about guitar pedal manuals with cited sources. It did one thing well: retrieve and answer.

PedalBot v2 is a fully agentic upgrade. The system now decides how to answer a question — not just what to say. A LangGraph-powered multi-agent graph classifies the user's intent, dispatches to specialist agents (or runs them in parallel), validates the answer for hallucinations, and synthesises a final response. It also supports multi-turn conversations, real-time market pricing from Reverb.com, typo-tolerant query preprocessing, and a production-grade asynchronous ingestion pipeline backed by MongoDB GridFS, Celery, and Redis.

The result is a guitar gear assistant that can simultaneously tell you how a pedal works and what it costs on the used market — in a single conversational exchange.

What's New in v2 (vs. v1)

| Feature | v1 | v2 |
|---|---|---|
| Intelligence model | Linear RAG pipeline | LangGraph multi-agent graph |
| Intent awareness | None — every query hit the vector store | Router agent classifies into 5 intents |
| Pricing | None | Live Reverb.com data via PricingAgent |
| Hybrid queries | Not supported | Manual + Pricing agents run in parallel |
| Multi-turn conversation | Not supported | Conversation history persisted in MongoDB |
| Quality control | Prompt guardrails only | Dedicated QualityCheckAgent with confidence scoring |
| Storage | Disk-based PDFs | MongoDB GridFS (shared across services) |
| Background processing | Synchronous | Celery + Redis async workers |
| Deployment | Render + Streamlit Cloud | Railway (multi-service: API · Worker · Frontend) |
| Observability | None | LangSmith tracing |

Core Architecture

    ┌─────────────────────────────────────────────┐
    │             Streamlit Frontend              │
    │  Home · Chat · Upload · Library · Settings  │
    └──────────────────┬──────────────────────────┘
                       │ HTTP (REST)
    ┌──────────────────▼──────────────────────────┐
    │               FastAPI Backend               │
    │                                             │
    │  ┌─────────────────────────────────────┐    │
    │  │        LangGraph Agent Graph        │    │
    │  │                                     │    │
    │  │  Router → Manual Agent ──────────────────▶ Pinecone (RAG)
    │  │         → Pricing Agent ─────────────────▶ Reverb API
    │  │         → Hybrid Agent (parallel)   │    │
    │  │         → Quality Check             │    │
    │  │         → Synthesizer               │    │
    │  └─────────────────────────────────────┘    │
    │                                             │
    │  ┌──────────┐   ┌──────────────────────┐    │
    │  │  Ingest  │──▶│    Celery Worker     │    │
    │  │  Router  │   │  (PDF → chunks →     │    │
    │  └──────────┘   │  embeddings → index) │    │
    │                 └──────────────────────┘    │
    └────────────┬────────────────────────────────┘
                 │
       ┌─────────┼─────────┐
       ▼         ▼         ▼
    MongoDB   Pinecone   Redis
    (manuals) (vectors) (broker/cache)

Intent Routing

Every query is classified into one of five intents before any agent runs:

| Intent | Example Query | Agents Dispatched |
|---|---|---|
| MANUAL_QUESTION | "How do I save a preset?" | ManualAgent → Pinecone RAG |
| PRICING | "What does a Boss GT-1000 sell for?" | PricingAgent → Reverb API |
| EXPLANATION | "What is a compressor pedal?" | ManualAgent (fallback) |
| HYBRID | "What reverb modes does it have and what's it worth?" | ManualAgent + PricingAgent in parallel |
| CASUAL | "Hey, thanks!" | Synthesizer directly (no retrieval) |
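The intent table above can be read as a small dispatch map. The following is a minimal sketch in plain Python, not the project's actual router: the node names (`manual_agent`, `pricing_agent`) and the choice to treat unknown labels as MANUAL_QUESTION are assumptions made for illustration.

```python
from enum import Enum


class Intent(str, Enum):
    MANUAL_QUESTION = "manual_question"
    PRICING = "pricing"
    EXPLANATION = "explanation"
    HYBRID = "hybrid"
    CASUAL = "casual"


# Which agent nodes run for each intent, mirroring the intent table.
# Node names are hypothetical.
DISPATCH: dict[Intent, tuple[str, ...]] = {
    Intent.MANUAL_QUESTION: ("manual_agent",),
    Intent.PRICING: ("pricing_agent",),
    Intent.EXPLANATION: ("manual_agent",),
    Intent.HYBRID: ("manual_agent", "pricing_agent"),
    Intent.CASUAL: (),  # no retrieval; goes straight to the synthesizer
}


def parse_intent(label: str) -> Intent:
    """Map a raw classifier label to an Intent.

    Assumption: unrecognised labels fall back to MANUAL_QUESTION.
    """
    try:
        return Intent[label.strip().upper()]
    except KeyError:
        return Intent.MANUAL_QUESTION


def agents_for(label: str) -> tuple[str, ...]:
    """Return the agent nodes to run for a classifier label."""
    return DISPATCH[parse_intent(label)]
```

Keeping the mapping in one table means a new intent only needs a new enum member and one dictionary entry.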

The LangGraph Agent Graph

The agent graph is the heart of PedalBot v2. It was designed with one principle: every query should take exactly the path it deserves — no more, no less.

Nodes

RouterAgent — Uses llama-3.1-8b-instant (Groq) to classify intent. It also handles casual chitchat directly, avoiding unnecessary retrieval for greetings or off-topic messages.

ManualAgent — Runs semantic search against Pinecone using VoyageAI embeddings (voyage-3.5-lite). Retrieves the top-k most relevant chunks from the indexed manual and passes them to llama-3.3-70b-versatile to generate a grounded, cited answer.

PricingAgent — Fetches live listing data from the Reverb.com API, computes average, minimum, and maximum prices across active listings, and formats a market summary.

HybridAgent — Runs ManualAgent and PricingAgent concurrently using asyncio.gather(). If one agent fails, the other's result is still returned (partial success), and the confidence score reflects whether both, one, or neither agent succeeded.

QualityCheckAgent — Validates the draft answer using a separate LLM call. Flags hallucinations, low-relevance responses, ambiguous queries, and data-missing scenarios. Uses a typed FallbackReason enum to route to an appropriate fallback message rather than a generic error.

Synthesizer — Formats the final response from the validated raw_answer.

Fallback — Generates a context-aware fallback message based on the FallbackReason (e.g., "This concept may exist but isn't explicitly documented in the manual").
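The partial-success behaviour described for HybridAgent, together with the PricingAgent's average/min/max summary, might look roughly like the sketch below. The two agent coroutines are stand-ins that make no network calls, and the confidence values (0.9, 0.5, 0.0) are hypothetical; only the use of `asyncio.gather()` comes from the description above.

```python
import asyncio
from statistics import mean


def summarize_prices(prices: list[float]) -> dict[str, float]:
    """Average, minimum, and maximum over active listing prices."""
    if not prices:
        raise ValueError("no active listings")
    return {"avg": round(mean(prices), 2), "min": min(prices), "max": max(prices)}


async def manual_agent(query: str) -> str:
    # Stand-in for retrieval plus answer generation.
    return f"manual answer for: {query}"


async def pricing_agent(prices: list[float]) -> dict[str, float]:
    # Stand-in for the Reverb lookup; raises when there are no listings.
    return summarize_prices(prices)


async def hybrid_agent(query: str, prices: list[float]) -> dict:
    # return_exceptions=True hands failures back as values instead of raising,
    # so a successful result from the other agent is still available.
    manual, pricing = await asyncio.gather(
        manual_agent(query), pricing_agent(prices), return_exceptions=True
    )
    manual_ok = not isinstance(manual, Exception)
    pricing_ok = not isinstance(pricing, Exception)
    # Hypothetical confidence values for both / one / neither succeeding.
    confidence = {2: 0.9, 1: 0.5, 0: 0.0}[manual_ok + pricing_ok]
    return {
        "manual": manual if manual_ok else None,
        "pricing": pricing if pricing_ok else None,
        "confidence": confidence,
    }
```

Calling `asyncio.run(hybrid_agent("reverb modes?", []))` exercises the partial-success path: the pricing side fails, the manual answer is still returned, and the confidence drops.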

Conditional Routing

After the router, a conditional edge dispatches to the correct node. After quality check, a second conditional edge either approves the answer (→ synthesizer) or triggers fallback. This keeps the graph readable and easy to extend.
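As an illustration of the second conditional edge, here is a minimal sketch of a routing function and a typed fallback reason. The enum member names, the `QualityVerdict` shape, and all message texts except the "data missing" one are assumptions; a function of this shape is the kind of callable that LangGraph's `add_conditional_edges` accepts, but the project's real wiring may differ.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class FallbackReason(str, Enum):
    # Member names are hypothetical; the four categories come from the
    # QualityCheckAgent description.
    HALLUCINATION = "hallucination"
    LOW_RELEVANCE = "low_relevance"
    AMBIGUOUS_QUERY = "ambiguous_query"
    DATA_MISSING = "data_missing"


FALLBACK_MESSAGES = {
    FallbackReason.HALLUCINATION: "I couldn't verify that answer against the manual.",
    FallbackReason.LOW_RELEVANCE: "I couldn't find a relevant passage in the manual.",
    FallbackReason.AMBIGUOUS_QUERY: "Could you tell me which pedal or feature you mean?",
    FallbackReason.DATA_MISSING: (
        "This concept may exist but isn't explicitly documented in the manual."
    ),
}


@dataclass
class QualityVerdict:
    approved: bool
    reason: Optional[FallbackReason] = None


def route_after_quality_check(verdict: QualityVerdict) -> str:
    """Return the name of the next node: 'synthesizer' or 'fallback'."""
    return "synthesizer" if verdict.approved else "fallback"


def fallback_message(verdict: QualityVerdict) -> str:
    """Pick a reason-specific message; default to DATA_MISSING (assumption)."""
    return FALLBACK_MESSAGES[verdict.reason or FallbackReason.DATA_MISSING]
```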

PDF Ingestion Pipeline

When a manual is uploaded:

- Upload — PDF is accepted by the FastAPI ingest router and stored in MongoDB GridFS (ensuring it's accessible to both the API and the Celery worker, even across separate containers).

- Queue — A Celery task is dispatched to Redis. The API returns immediately with a task ID. No user-facing wait.

- Extract — The Celery worker retrieves the PDF from GridFS. Text is extracted with pdfplumber. If extraction quality is below a 0.3 confidence threshold (common in scanned PDFs), Google Cloud Vision OCR is automatically triggered.

- Chunk — Text is split into ~300-token chunks with 100-token overlap to preserve context across boundaries.

- Embed — Each chunk is embedded with VoyageAI voyage-3.5-lite.

- Index — Vectors are upserted to Pinecone under a unique namespace per manual, keeping each pedal's knowledge isolated.

- Register — The pedal name and namespace are cached in PedalRegistry for fast, typo-tolerant lookups at query time.

Progress is queryable at /api/ingest/status/{manual_id}.
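The Chunk step can be sketched as a sliding window over tokens. This is an illustrative version only: it treats whitespace-separated words as tokens, which is an assumption, since the tokenizer used by the real pipeline is not stated.

```python
def chunk_tokens(
    tokens: list[str], size: int = 300, overlap: int = 100
) -> list[list[str]]:
    """Split tokens into windows of `size`, each sharing `overlap` tokens
    with the previous window."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    step = size - overlap
    chunks: list[list[str]] = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # the last window already reaches the end of the text
    return chunks


def chunk_text(text: str, size: int = 300, overlap: int = 100) -> list[str]:
    """Whitespace tokenisation is a stand-in for the real tokenizer."""
    return [" ".join(chunk) for chunk in chunk_tokens(text.split(), size, overlap)]
```

With the default settings each window advances by 200 tokens, so a sentence that straddles a boundary appears whole in at least one chunk as long as it is shorter than the overlap.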

Query Preprocessing

Before any agent receives a query, the QueryPreprocessor normalises it:

- Typo correction — Catches and corrects misspelled pedal names (e.g. "Boss DD-5OO" → "Boss DD-500").

- Multi-question detection — Splits compound queries into sub-questions for structured handling.

- Query normalisation — Standardises casing and formatting before embedding, improving retrieval precision.

The preprocessor was added after production failures in which pedal names with minor typos returned zero results from the registry lookup.
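One simple way to get typo-tolerant registry lookups is fuzzy string matching. The sketch below uses the standard library's `difflib.get_close_matches`. It is a stand-in technique rather than the QueryPreprocessor's actual method, and the registry contents and the 0.75 cutoff are assumptions.

```python
import difflib
from typing import Optional

# Hypothetical registry: normalised name -> canonical pedal name.
REGISTRY = {
    "boss dd-500": "Boss DD-500",
    "boss gt-1000": "Boss GT-1000",
    "strymon bigsky": "Strymon BigSky",
}


def normalise(name: str) -> str:
    """Lower-case and collapse runs of whitespace."""
    return " ".join(name.lower().split())


def resolve_pedal(name: str, cutoff: float = 0.75) -> Optional[str]:
    """Return the canonical pedal name, tolerating minor typos."""
    key = normalise(name)
    if key in REGISTRY:
        return REGISTRY[key]
    # Fall back to the closest registry key above the similarity cutoff.
    matches = difflib.get_close_matches(key, list(REGISTRY), n=1, cutoff=cutoff)
    return REGISTRY[matches[0]] if matches else None
```

Here `resolve_pedal("Boss DD-5OO")` (letter O instead of zero) resolves to the DD-500 entry, while a name with no close match returns `None` so the caller can ask the user to clarify.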

Multi-Turn Conversation

PedalBot v2 maintains conversation history within a session. Previous messages are passed as context to the LLM, enabling follow-up questions like "What about its reverb types?" without the user re-specifying the pedal. Conversations are stored in MongoDB and keyed by a conversation_id.
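A rough sketch of how history keyed by conversation_id can be turned into LLM context is shown below. The in-memory dictionary stands in for the MongoDB collection, and the `max_messages` window and the role/content message shape are assumptions.

```python
from collections import defaultdict

# In-memory stand-in for the MongoDB collection, keyed by conversation_id.
_HISTORY: dict[str, list[dict[str, str]]] = defaultdict(list)


def append_message(conversation_id: str, role: str, content: str) -> None:
    """Record one message in a conversation."""
    _HISTORY[conversation_id].append({"role": role, "content": content})


def build_messages(
    conversation_id: str,
    user_query: str,
    system_prompt: str,
    max_messages: int = 6,  # hypothetical window of recent messages
) -> list[dict[str, str]]:
    """System prompt, then the most recent history, then the new question."""
    recent = _HISTORY[conversation_id][-max_messages:]
    return [
        {"role": "system", "content": system_prompt},
        *recent,
        {"role": "user", "content": user_query},
    ]
```

Because the earlier exchange about a specific pedal is included in the prompt, a follow-up such as "What about its reverb types?" can be answered without naming the pedal again.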

Technical Stack

| Layer | Technology | Why |
|---|---|---|
| Frontend | Streamlit | Rapid prototyping; built-in state management for chat |
| Backend API | FastAPI | Async-native; ideal for I/O-heavy agent calls |
| Agent Orchestration | LangGraph | Graph-based control flow; conditional routing; easy to extend |
| LLM (routing + quality check) | Groq — llama-3.1-8b-instant | Fast inference for classification and validation tasks |
| LLM (answers) | Groq — llama-3.3-70b-versatile | Stronger reasoning for complex manual Q&A |
| Embeddings | VoyageAI — voyage-3.5-lite | Strong semantic retrieval; cost-effective at scale |
| Vector DB | Pinecone | Managed, low-latency cosine similarity search |
| Database | MongoDB Atlas (Motor async) | Stores manual metadata, job records, conversation history |
| PDF Storage | MongoDB GridFS | Enables cross-service file access without shared volumes or S3 |
| Background Workers | Celery + Redis | Non-blocking ingestion; scales independently from API |
| OCR | Google Cloud Vision API | Reliable extraction from scanned PDFs |
| Pricing Data | Reverb.com API | Real-time used-gear market data |
| Observability | LangSmith | Full trace visibility into agent decisions and LLM calls |
| Deployment | Railway | Multi-service deployment (API · Worker · Frontend) with managed add-ons |

Model Selection Rationale

LLM — Groq (llama-3.1-8b-instant for routing and quality checks, llama-3.3-70b-versatile for answers):

Two models are used intentionally. The 8b model is fast and cheap — perfect for the router (intent classification) and quality check (binary pass/fail judgement). The 70b model is reserved for the manual agent where reasoning quality directly affects answer accuracy. This split keeps costs manageable while delivering quality where it matters.

Embeddings — VoyageAI (voyage-3.5-lite):

Selected for its strong balance of retrieval quality and cost. The voyage-3.5-lite model produces 1024-dimensional embeddings with excellent semantic understanding of technical documentation, making it well-suited for dense pedal manuals with niche terminology.

Vector Database — Pinecone:

Pinecone's namespace feature is central to PedalBot's design — each manual is isolated in its own namespace, so queries are always scoped to the correct pedal without interference from other manuals. It also provides managed infrastructure with no index tuning required.

OCR — Google Cloud Vision API:

Scanned pedal manuals are common, especially for vintage gear. Google Vision handles varied layouts, small fonts, and diagram-heavy pages reliably. The 0.3 confidence threshold for OCR fallback was determined empirically from testing against a range of PDF types.
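The OCR fallback decision can be pictured as a simple threshold check. How the project actually scores extraction quality is not described, so the heuristic below (the share of non-whitespace characters that are alphanumeric) is purely hypothetical; only the 0.3 threshold comes from the text.

```python
OCR_CONFIDENCE_THRESHOLD = 0.3  # threshold stated in the text


def extraction_confidence(text: str) -> float:
    """Hypothetical quality score in [0, 1].

    Empty or symbol-heavy output, which is typical of scanned pages,
    scores low; ordinary prose scores high.
    """
    stripped = "".join(text.split())
    if not stripped:
        return 0.0
    return sum(ch.isalnum() for ch in stripped) / len(stripped)


def needs_ocr(text: str, threshold: float = OCR_CONFIDENCE_THRESHOLD) -> bool:
    """True when the extracted text looks too poor to index as-is."""
    return extraction_confidence(text) < threshold
```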

Background Jobs — Celery + Redis:

PDF ingestion involves multiple slow steps (OCR, embedding, vector upsert). Running these synchronously would block the API. Celery decouples ingestion from the API, enabling the system to handle concurrent uploads without degradation. Redis serves as both the message broker and a caching layer for the PedalRegistry.

PDF Storage — MongoDB GridFS:

When deploying across multiple Railway services (API + Celery worker), shared disk volumes add operational complexity. GridFS stores PDFs in MongoDB, making them accessible to any service with a database connection — no infrastructure-level file sharing required.

Deployment

PedalBot v2 is deployed on Railway with three services:

| Service | Dockerfile | Purpose |
|---|---|---|
| api | Dockerfile | FastAPI backend |
| worker | Dockerfile.celery | Celery ingestion worker |
| frontend | (Streamlit) | Streamlit UI |

MongoDB and Redis are provisioned as Railway add-ons. Environment variables are validated on boot using Pydantic Settings — the app exits immediately if required variables are missing, preventing silent misconfiguration in production.
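The fail-fast behaviour can be illustrated without any dependencies. The project uses Pydantic Settings for this; the sketch below is a standard-library stand-in that shows the same idea, using the variable names listed under Setup. The function names are hypothetical.

```python
import os
from typing import Mapping, Optional

REQUIRED_VARS = (
    "MONGODB_URI",
    "PINECONE_API_KEY",
    "GROQ_API_KEY",
    "VOYAGEAI_API_KEY",
    "JWT_SECRET_KEY",
    "REDIS_URL",
    "REVERB_API_KEY",
    "GOOGLE_VISION_CREDENTIALS",
    "LANGSMITH_API_KEY",
)


def missing_vars(env: Mapping[str, str]) -> list[str]:
    """Names of required variables that are unset or empty."""
    return [name for name in REQUIRED_VARS if not env.get(name)]


def validate_env(env: Optional[Mapping[str, str]] = None) -> None:
    """Exit immediately if any required variable is missing."""
    env = os.environ if env is None else env
    missing = missing_vars(env)
    if missing:
        raise SystemExit(
            "Missing required environment variables: " + ", ".join(missing)
        )
```

Calling `validate_env()` once at startup, before the web server or worker begins accepting work, turns a misconfiguration into an immediate, readable error instead of a failure on the first request.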

Deployment Links:

- Backend (FastAPI): pedalbot-api-production.up.railway.app

- Frontend (Streamlit): pedalbot-v2.streamlit.app

Repository: github.com/EbenTheGreat/pedalbot-v2

Setup (Local Development)

    git clone https://github.com/EbenTheGreat/pedalbot-v2.git
    cd pedalbot-v2
    python -m venv .venv && source .venv/bin/activate
    pip install -r requirements.txt

    # Terminal 1 — FastAPI backend
    uvicorn backend.main:app --reload --port 8000

    # Terminal 2 — Celery worker
    celery -A backend.workers.celery_app worker --loglevel=info

    # Terminal 3 — Streamlit frontend
    cd frontend && streamlit run Home.py

Open http://localhost:8501 in your browser.

Note: If Redis is unavailable, ingestion falls back to inline processing — no Celery needed for local testing.

Required environment variables:

    MONGODB_URI
    PINECONE_API_KEY
    GROQ_API_KEY
    VOYAGEAI_API_KEY
    JWT_SECRET_KEY
    REDIS_URL
    REVERB_API_KEY
    GOOGLE_VISION_CREDENTIALS   # Base64-encoded service account JSON
    LANGSMITH_API_KEY

Lessons Learned

1. Graph-based orchestration beats if-else routing.

LangGraph's conditional edges made it straightforward to handle the casual intent (skip all retrieval), hybrid intent (parallelise two agents), and fallback paths within a single readable graph rather than a tangle of conditionals.

2. GridFS solved the cross-service file access problem cleanly.

Shared volumes between Railway services are fragile. Storing PDFs in GridFS meant any service — API, Celery worker, or future microservice — could access uploaded files with nothing more than a MongoDB connection string.

3. Two-model LLM design is worth the added complexity.

Using a fast 8b model for routing and validation, and a 70b model only for answer generation, reduced per-query costs significantly without any measurable drop in user-facing quality.

4. Typo tolerance is non-negotiable at the registry lookup stage.

Before adding QueryPreprocessor, minor pedal name typos caused complete retrieval failures — the registry lookup returned nothing, so the agents had no namespace to search. Normalising queries before lookup eliminated this class of bugs entirely.

5. LangSmith changed how I debug.

Seeing the full agent trace (routing decision, retrieved chunks, quality check verdict) in a single timeline made debugging agent behaviour far faster than relying on print statements.

Future Improvements

- Tone Architect — "I want to sound like David Gilmour on Comfortably Numb. Here are my pedals. What are the settings?" This would combine RAG-extracted settings suggestions with tone descriptors.

- Rig Doctor — Analyse a guitarist's signal chain for common ordering mistakes (e.g., fuzz after a buffered bypass pedal) and power supply issues.

- Visual Manuals — Serve cropped diagram images alongside text answers, using bounding boxes stored during OCR to locate and display the relevant figure.

- Price Alerts — "Notify me when a Boss DM-2 drops below $150." The Celery Beat scheduler and Resend email worker are already in place; this is a UI + worker task.

- HTML / TXT document support — Extend ingestion beyond PDFs.

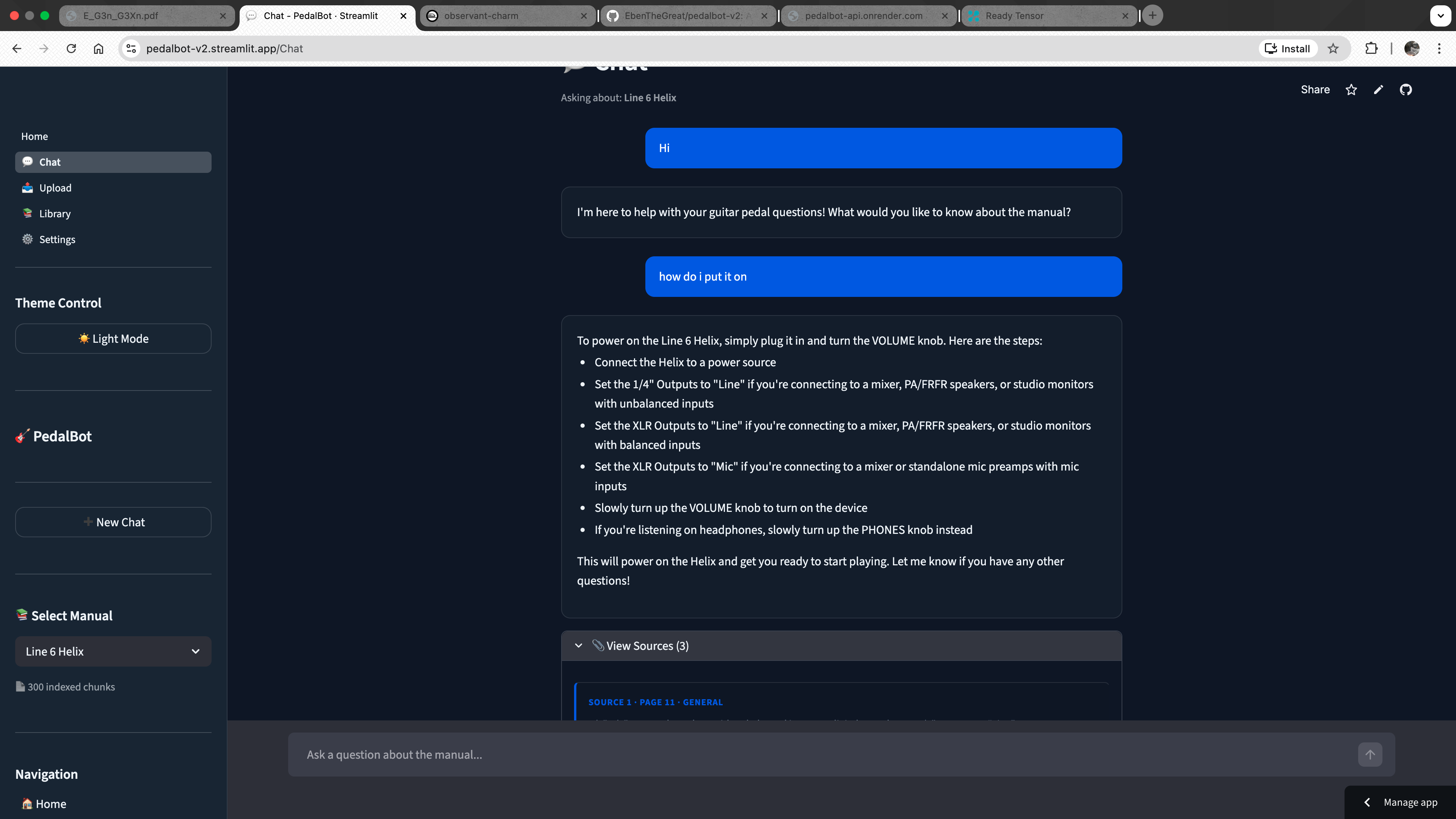

Screenshot

Summary

PedalBot v2 is a production-grade agentic AI application that evolved from a single-pipeline RAG tool into a multi-agent system capable of reasoning about the right course of action before acting. By combining LangGraph orchestration, intent-aware routing, parallel agent execution, hallucination detection, and a robust asynchronous ingestion pipeline, it delivers accurate, grounded answers to guitarists' questions that draw on both manuals and market data, with full source citations and real-time pricing in a single conversational exchange.