This project demonstrates how fundamental neural network models, such as linear regression, logistic regression, and multilayer perceptrons (MLPs), can be built from scratch using PyTorch. It focuses on learning foundational concepts in deep learning by implementing and experimenting with each component manually, using PyTorch's tensor operations rather than its high-level model-building abstractions. The project uses synthetic data for regression and the MNIST dataset for classification, emphasizing model design, training dynamics, and performance evaluation.

Neural networks are at the core of modern machine learning, powering tasks from image recognition to language understanding. While libraries like TensorFlow and PyTorch offer powerful abstractions, building models from scratch provides deeper insight into core mechanics such as forward propagation, loss computation, and optimization.
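To make the three mechanics named above concrete, here is a minimal sketch of a single training step for a linear model with mean squared error. It assumes a tiny random dataset (5 examples, 3 features) chosen only for illustration. The sketch checks that PyTorch's autograd produces the same gradients as the hand-derived formulas $\partial L/\partial w = \frac{2}{n} X^\top(\hat{y} - y)$ and $\partial L/\partial b = \frac{2}{n}\sum(\hat{y} - y)$, then applies one gradient-descent update with an assumed learning rate of 0.05.

```python
import torch

torch.manual_seed(0)

# Tiny illustrative problem: 5 examples with 3 features each (assumed sizes)
X = torch.randn(5, 3)
y = torch.randn(5)
w = torch.randn(3, requires_grad=True)
b = torch.zeros(1, requires_grad=True)

# 1. Forward propagation: linear model predictions
y_hat = X @ w + b

# 2. Loss computation: mean squared error
loss = ((y_hat - y) ** 2).mean()

# Backpropagation: autograd populates w.grad and b.grad
loss.backward()
grad_w_auto, grad_b_auto = w.grad.clone(), b.grad.clone()

# The same gradients derived by hand:
#   dL/dw = (2/n) * X^T (y_hat - y),  dL/db = (2/n) * sum(y_hat - y)
with torch.no_grad():
    residual = y_hat - y
    n = X.shape[0]
    grad_w_manual = 2.0 / n * (X.T @ residual)
    grad_b_manual = (2.0 / n * residual.sum()).reshape(1)

print("gradients match:",
      torch.allclose(grad_w_auto, grad_w_manual, atol=1e-5)
      and torch.allclose(grad_b_auto, grad_b_manual, atol=1e-5))

# 3. Optimization: one plain gradient-descent step (learning rate is an assumption)
lr = 0.05
with torch.no_grad():
    w -= lr * w.grad
    b -= lr * b.grad
    w.grad.zero_()
    b.grad.zero_()
    new_loss = ((X @ w + b - y) ** 2).mean()

print(f"loss before: {loss.item():.4f}, after one step: {new_loss.item():.4f}")
```

Writing the gradient out by hand once, and comparing it with autograd, is a cheap way to confirm what `loss.backward()` is actually computing before relying on it in larger models.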

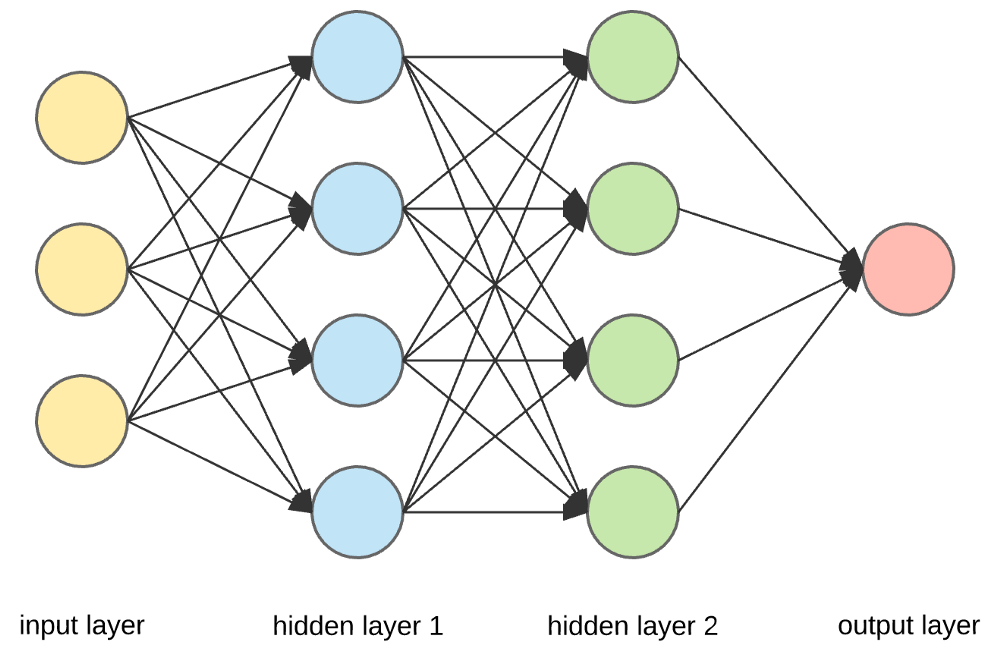

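In this notebook, we reimplement classic models (linear regression, logistic regression, and multilayer perceptrons) using PyTorch's tensor operations, targeting two datasets:

- Synthetic data for linear regression
- MNIST handwritten digits for classification with logistic regression and MLPs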

This step-by-step approach aims to reinforce practical understanding of how neural networks work under the hood.

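The project is divided into three key sections:

1. Linear regression on synthetic data
2. Logistic regression on MNIST
3. Multilayer perceptron (MLP) on MNIST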

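For linear regression, the synthetic data is generated as

$$y = Xw + b + \epsilon$$

where $X$ is the input data, $w$ is the weight vector, $b$ is the bias, and $\epsilon$ is random noise.

For each model, training includes:

- Forward propagation
- Loss computation
- Optimization (parameter updates)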
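The data-generation formula $y = Xw + b + \epsilon$ and a from-scratch training loop for linear regression can be sketched as follows. This is a minimal sketch under stated assumptions, not the project's own code: the true parameters (`true_w = [2.0, -3.4]`, `true_b = 4.2`), the noise level, the learning rate, batch size, and epoch count are illustrative choices, and helper names such as `synthetic_data`, `data_iter`, and `sgd` are hypothetical.

```python
import torch

torch.manual_seed(42)

# Illustrative "true" parameters (assumed values, used only to generate data)
true_w = torch.tensor([2.0, -3.4])
true_b = 4.2


def synthetic_data(w, b, num_examples, noise_std=0.01):
    """Generate y = Xw + b + eps, with Gaussian noise eps."""
    X = torch.randn(num_examples, len(w))
    y = X @ w + b + noise_std * torch.randn(num_examples)
    return X, y


def data_iter(batch_size, X, y):
    """Yield shuffled minibatches of (X, y)."""
    indices = torch.randperm(len(X))
    for i in range(0, len(X), batch_size):
        batch = indices[i:i + batch_size]
        yield X[batch], y[batch]


def linreg(X, w, b):
    return X @ w + b  # forward pass of the linear model


def squared_loss(y_hat, y):
    return ((y_hat - y) ** 2 / 2).mean()  # halved mean squared error


def sgd(params, lr):
    with torch.no_grad():  # update parameters outside the autograd graph
        for p in params:
            p -= lr * p.grad
            p.grad.zero_()


X, y = synthetic_data(true_w, true_b, 1000)
w = (0.01 * torch.randn(2)).requires_grad_()  # small random initial weights
b = torch.zeros(1, requires_grad=True)

lr, num_epochs, batch_size = 0.03, 3, 10
for epoch in range(num_epochs):
    for X_batch, y_batch in data_iter(batch_size, X, y):
        loss = squared_loss(linreg(X_batch, w, b), y_batch)
        loss.backward()  # gradients of the minibatch loss
        sgd([w, b], lr)
    with torch.no_grad():
        train_loss = squared_loss(linreg(X, w, b), y)
    print(f"epoch {epoch + 1}, loss {train_loss.item():.6f}")

print("error in w:", (true_w - w.detach()).tolist())
print("error in b:", true_b - b.item())
```

Because the data is generated from known parameters, comparing the learned `w` and `b` against `true_w` and `true_b` is a direct check that the training loop works, which is what "converged to true parameters" means for this experiment.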

| Model | Dataset | Result | Notes |
|---|---|---|---|
| Linear Regression | Synthetic | Low MSE | Converged to the true parameters |
| Logistic Regression | MNIST | ~85% accuracy | Single-layer baseline |
| MLP (2-layer) | MNIST | ~95%+ accuracy | Substantially higher accuracy than logistic regression |
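The two classifiers in the table differ only in their forward pass, which a from-scratch sketch makes explicit. Since loading MNIST needs extra tooling (for example torchvision) and a download, this sketch assumes a synthetic stand-in instead: 10 Gaussian clusters in 784 dimensions, the size of a flattened 28×28 MNIST image. The hidden size of 256, the initialization scales (including He-style scaling for the ReLU layer), learning rate, batch size, and epoch count are all assumptions, and the helper names are hypothetical. The accuracies it prints come from this easy synthetic data and are not comparable to the MNIST results in the table.

```python
import torch

torch.manual_seed(0)

NUM_INPUTS, NUM_CLASSES, NUM_HIDDEN = 784, 10, 256  # 784 = 28 * 28; hidden size assumed


def cross_entropy(logits, labels):
    # Softmax + negative log-likelihood, via log-sum-exp for numerical stability
    log_probs = logits - torch.logsumexp(logits, dim=1, keepdim=True)
    return -log_probs[torch.arange(len(labels)), labels].mean()


def init_logistic():
    W = (0.01 * torch.randn(NUM_INPUTS, NUM_CLASSES)).requires_grad_()
    b = torch.zeros(NUM_CLASSES, requires_grad=True)
    return [W, b]


def logistic_forward(X, params):
    W, b = params
    return X @ W + b  # a single linear layer producing class logits


def init_mlp():
    # He-style scaling for the ReLU layer (an assumption, not from the project)
    W1 = (torch.randn(NUM_INPUTS, NUM_HIDDEN) * (2.0 / NUM_INPUTS) ** 0.5).requires_grad_()
    b1 = torch.zeros(NUM_HIDDEN, requires_grad=True)
    W2 = (0.01 * torch.randn(NUM_HIDDEN, NUM_CLASSES)).requires_grad_()
    b2 = torch.zeros(NUM_CLASSES, requires_grad=True)
    return [W1, b1, W2, b2]


def mlp_forward(X, params):
    W1, b1, W2, b2 = params
    H = torch.clamp(X @ W1 + b1, min=0)  # hidden layer with a hand-written ReLU
    return H @ W2 + b2


def train(forward, params, X, y, lr=0.1, epochs=10, batch_size=64):
    for _ in range(epochs):
        perm = torch.randperm(len(X))
        for i in range(0, len(X), batch_size):
            idx = perm[i:i + batch_size]
            loss = cross_entropy(forward(X[idx], params), y[idx])
            loss.backward()
            with torch.no_grad():  # manual minibatch SGD step
                for p in params:
                    p -= lr * p.grad
                    p.grad.zero_()


def accuracy(forward, params, X, y):
    with torch.no_grad():
        return (forward(X, params).argmax(dim=1) == y).float().mean().item()


# Synthetic stand-in for MNIST (NOT real digits): one Gaussian cluster per class
centers = torch.randn(NUM_CLASSES, NUM_INPUTS)


def make_split(n):
    labels = torch.randint(0, NUM_CLASSES, (n,))
    return 0.25 * (centers[labels] + torch.randn(n, NUM_INPUTS)), labels


X_train, y_train = make_split(3000)
X_test, y_test = make_split(1000)

logistic_params = init_logistic()
train(logistic_forward, logistic_params, X_train, y_train)
mlp_params = init_mlp()
train(mlp_forward, mlp_params, X_train, y_train)

logistic_acc = accuracy(logistic_forward, logistic_params, X_test, y_test)
mlp_acc = accuracy(mlp_forward, mlp_params, X_test, y_test)
print(f"logistic regression test accuracy: {logistic_acc:.3f}")
print(f"2-layer MLP test accuracy: {mlp_acc:.3f}")
```

The only structural difference between the two models is the hidden ReLU layer in `mlp_forward`; the loss and the training loop are shared. To run the same code on real MNIST, `make_split` could be replaced with flattened, [0, 1]-scaled MNIST image tensors and their integer labels.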

This project highlights the value of building neural networks from first principles. By implementing linear models, logistic classifiers, and MLPs manually, we gained insight into architecture, loss functions, and optimization techniques.

Key takeaways:

This project serves as a learning bridge from theory to practice and is ideal for anyone aiming to strengthen their deep learning foundations.