This project, created for the AI Alignment Course - AI Safety Fundamentals powered by BlueDot Impact, uses GPT-2 and TransformerLens to explore key concepts in the mechanistic interpretability of transformers.

I would like to express my gratitude to the AI Safety Fundamentals team, the facilitators, and all the participants in my cohorts, whose discussions gave me the opportunity to develop new ideas. I am pleased to be a part of this team.

This repository contains two projects aimed at enhancing the mechanistic interpretability of transformer-based models, specifically focusing on GPT-2. The projects provide insights into two critical aspects of transformer behavior: Induction Head Detection and QK Circuit Analysis. By understanding these mechanisms, we aim to make transformer models more transparent, interpretable, and aligned with human values.

GPT-2, developed by OpenAI and based on the Transformer architecture, was trained on a large dataset of internet text. It is a large language model (LLM) trained to predict the next token in a sequence.

TransformerLens is a library for interpreting and analyzing the inner workings of Transformer-based models like GPT-2. It allows researchers to investigate specific layers, attention heads, and other internal components of the model to better understand how it processes information and makes decisions. It provides access to activation caches, query-key (QK) circuits, and induction heads, and makes it easier to visualize and interpret the model's behavior.

This project uses the two together, applying TransformerLens to GPT-2.

Induction heads are specialized attention heads within transformer models that help maintain and repeat sequences during in-context learning. This project focuses on identifying these heads and visualizing their behavior in repetitive sequences.

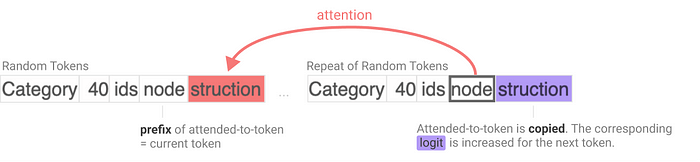

The image shows how an induction head attends to repeated patterns in a sequence. When a sequence of tokens is repeated, the induction head detects the repetition and attends to the token that followed the earlier occurrence of the current token. This raises the logit, and therefore the predicted probability, of that token as the next token. The mechanism helps the model recall and continue sequences during in-context learning.
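The attention behavior described above can be turned into a simple per-head score. The following is a minimal sketch, not the repository's `induction_head_detection.py`: the names `induction_score` and `score_gpt2_heads` are hypothetical, and it assumes the input is a token block repeated twice (optionally preceded by a start token), so that the last `block_len` positions are the second copy. The helper is plain Python; the GPT-2 driver imports TransformerLens lazily and is only a suggestion of how the helper might be applied.

```python
def induction_score(pattern, block_len):
    """Mean attention from each query in the second copy of the block
    to the token that followed its earlier occurrence.

    pattern: square attention matrix (list of rows), pattern[query][key].
    block_len: length of the repeated block (assumed, not read from the data).
    """
    seq_len = len(pattern)
    if not 0 < block_len < seq_len:
        raise ValueError("block_len must be between 1 and seq_len - 1")
    # The last block_len positions are the repeat. Query q repeats the token
    # at q - block_len, so the induction target is q - block_len + 1.
    queries = range(seq_len - block_len, seq_len)
    weights = [pattern[q][q - block_len + 1] for q in queries]
    return sum(weights) / len(weights)


def score_gpt2_heads(block_len=20, seed=0):
    """Hypothetical driver: score every GPT-2 head on a repeated random block.

    Requires transformer-lens and torch; imported here so the helper above
    stays usable without them.
    """
    import torch
    from transformer_lens import HookedTransformer

    torch.manual_seed(seed)
    model = HookedTransformer.from_pretrained("gpt2")
    block = torch.randint(1000, 10000, (1, block_len))
    bos = torch.tensor([[model.tokenizer.bos_token_id]])
    tokens = torch.cat([bos, block, block], dim=1).to(model.cfg.device)
    _, cache = model.run_with_cache(tokens)

    scores = {}
    for layer in range(model.cfg.n_layers):
        layer_pattern = cache["pattern", layer][0]  # [head, query, key]
        for head in range(model.cfg.n_heads):
            scores[(layer, head)] = induction_score(
                layer_pattern[head].tolist(), block_len
            )
    return scores


# Toy check on hand-built 6x6 patterns (block of 3 tokens repeated twice).
block_len = 3
perfect = [[0.0] * 6 for _ in range(6)]
for q in range(6):
    target = q - block_len + 1 if q >= block_len else 0
    perfect[q][target] = 1.0
# Causal uniform attention: query q spreads weight evenly over keys 0..q.
uniform = [[1.0 / (q + 1) if k <= q else 0.0 for k in range(6)] for q in range(6)]

perfect_score = induction_score(perfect, block_len)
uniform_score = induction_score(uniform, block_len)
```

On the toy patterns, the idealized induction pattern scores much higher than uniform causal attention. Calling `score_gpt2_heads()` downloads the GPT-2 weights on first use; under the assumptions above, heads whose score is close to 1 would be the candidates worth plotting.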

pip install transformer-lens circuitsvis matplotlib seaborn

Execute the Script:

Run the induction_head_detection.py script to detect induction heads and visualize their attention for the provided sample sequences.

Modify Input Sequences:

Change the sequences variable in the script to analyze your own text sequences for induction head activity.

The script generates attention heatmaps showing induction head activity for each sequence, providing a visual representation of how the model captures and retains context.

QK (Query-Key) circuits are fundamental to how transformers allocate attention among tokens in an input sequence. This project focuses on analyzing and visualizing QK interactions to understand how transformers prioritize information.

QK circuits determine how strongly each token attends to the other tokens according to their relevance. Attention can be viewed as moving information from the "key" token to the "query" token, which changes the logits for the next-token prediction (the logit effect). This mechanism shows how attention is directed to specific words, and therefore how the model processes and predicts the following tokens in a sequence.
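To make the query-key interaction concrete, here is a minimal sketch of how one attention head could turn token vectors into an attention pattern. It uses plain Python lists and toy weights rather than GPT-2's real matrices, and it leaves out layer normalization, biases, and positional information. All names (`qk_attention`, `qk_circuit`, `x`, `w_q`, `w_k`) are illustrative assumptions, not identifiers from `qk_circuit_analysis.py`.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    """Swap rows and columns."""
    return [list(col) for col in zip(*m)]


def causal_softmax(scores):
    """Row-wise softmax where query i may only attend to keys 0..i."""
    pattern = []
    for i, row in enumerate(scores):
        visible = row[: i + 1]
        top = max(visible)  # subtract the max for numerical stability
        exps = [math.exp(s - top) for s in visible]
        total = sum(exps)
        pattern.append([e / total for e in exps] + [0.0] * (len(row) - i - 1))
    return pattern


def qk_scores(x, w_q, w_k):
    """Unscaled query-key dot products for token vectors x (seq x d_model)."""
    queries = matmul(x, w_q)  # seq x d_head
    keys = matmul(x, w_k)     # seq x d_head
    return matmul(queries, transpose(keys))


def qk_attention(x, w_q, w_k):
    """Attention pattern for one head: scale by sqrt(d_head), mask, softmax."""
    d_head = len(w_q[0])
    scaled = [[s / math.sqrt(d_head) for s in row] for row in qk_scores(x, w_q, w_k)]
    return causal_softmax(scaled)


def qk_circuit(w_q, w_k):
    """The d_model x d_model bilinear form W_Q W_K^T."""
    return matmul(w_q, transpose(w_k))


# Toy example: 3 tokens, d_model = 2, d_head = 1.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
w_q = [[1.0], [0.0]]  # queries read the first feature
w_k = [[0.0], [2.0]]  # keys expose the second feature

pattern = qk_attention(x, w_q, w_k)
circuit = qk_circuit(w_q, w_k)
# The same scores, computed through the combined QK matrix: x (W_Q W_K^T) x^T.
scores_via_circuit = matmul(matmul(x, circuit), transpose(x))
```

In this toy setup the third token's query attends mostly to the second and third tokens, because their keys carry the feature the query is looking for. Computing the scores through the single matrix `circuit` gives the same result as the separate query and key projections, which is why the pair of matrices can be analyzed as one circuit.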

pip install transformer-lens circuitsvis matplotlib seaborn

Run the qk_circuit_analysis.py script to extract and visualize QK interactions for the provided sample sequences.

The script generates QK interaction heatmaps for each sequence, highlighting how attention is distributed among tokens based on the model's Query and Key matrices.

Clone the repository and install the necessary dependencies:

git clone <repository-link>
pip install -r requirements.txt

Navigate to the project directory (induction_heads or qk_circuits).

Run the corresponding script: induction_head_detection.py for Induction Heads, or qk_circuit_analysis.py for QK Circuits.

Contributions are welcome! Please open an issue or submit a pull request if you have suggestions or improvements.

For any questions or inquiries, please contact [ayucekizrak@gmail.com].