Abstract

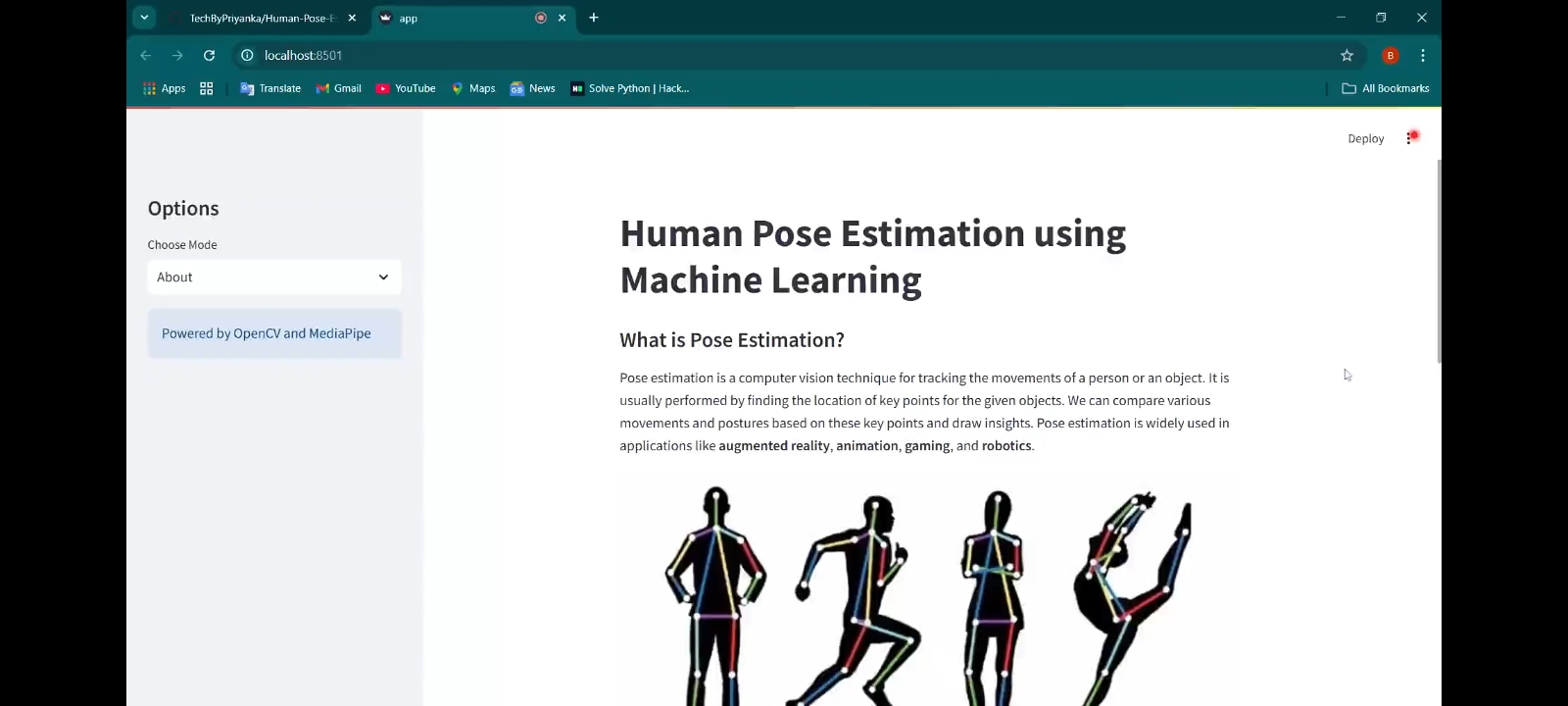

Human pose estimation is a computer vision technique that tracks human movement by identifying key points such as the shoulders, elbows, and knees. This technology addresses the challenge of accurately detecting human poses for applications such as fitness tracking, healthcare monitoring, gaming, and augmented reality.

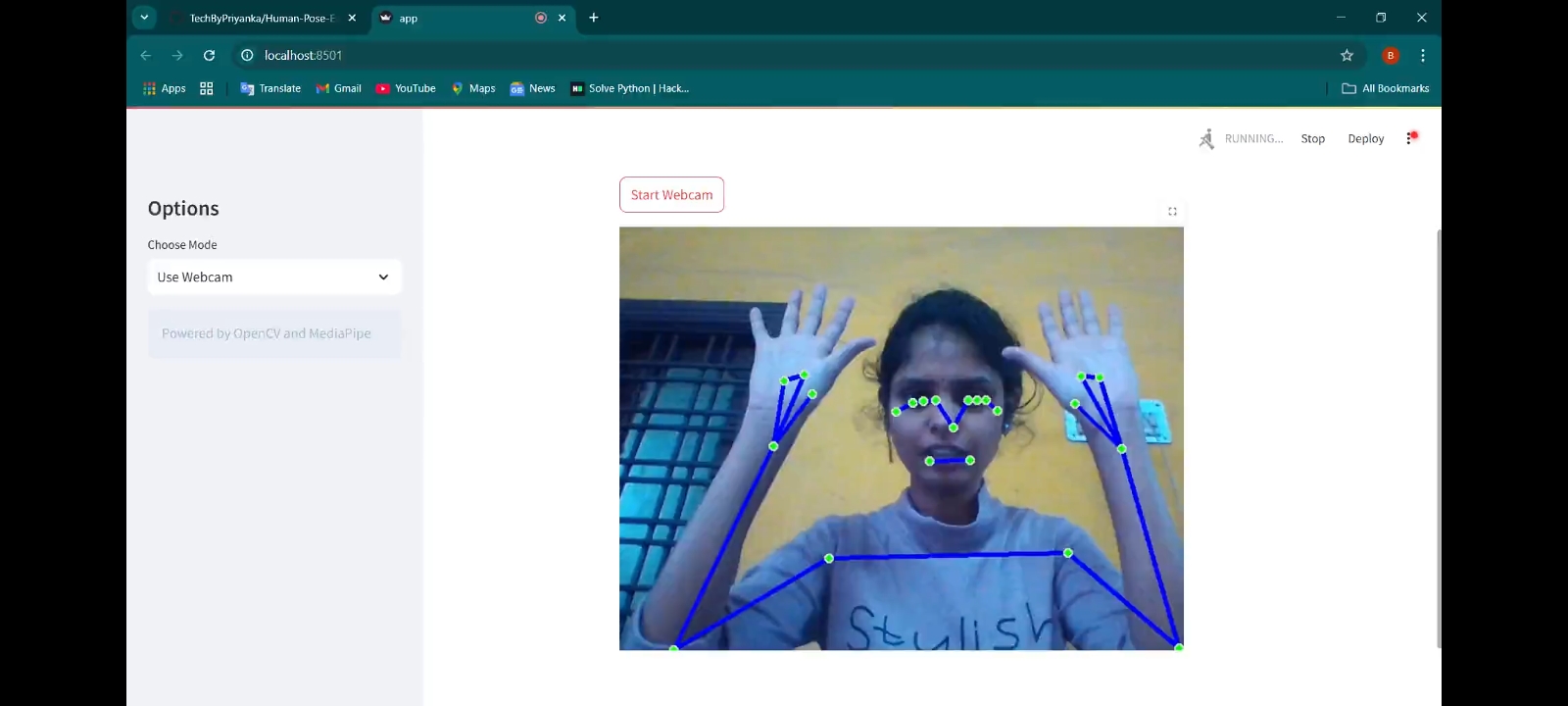

Existing systems often face limitations in accessibility, usability, or computational efficiency. This project aims to develop a simple and efficient pose estimation tool using MediaPipe and OpenCV.

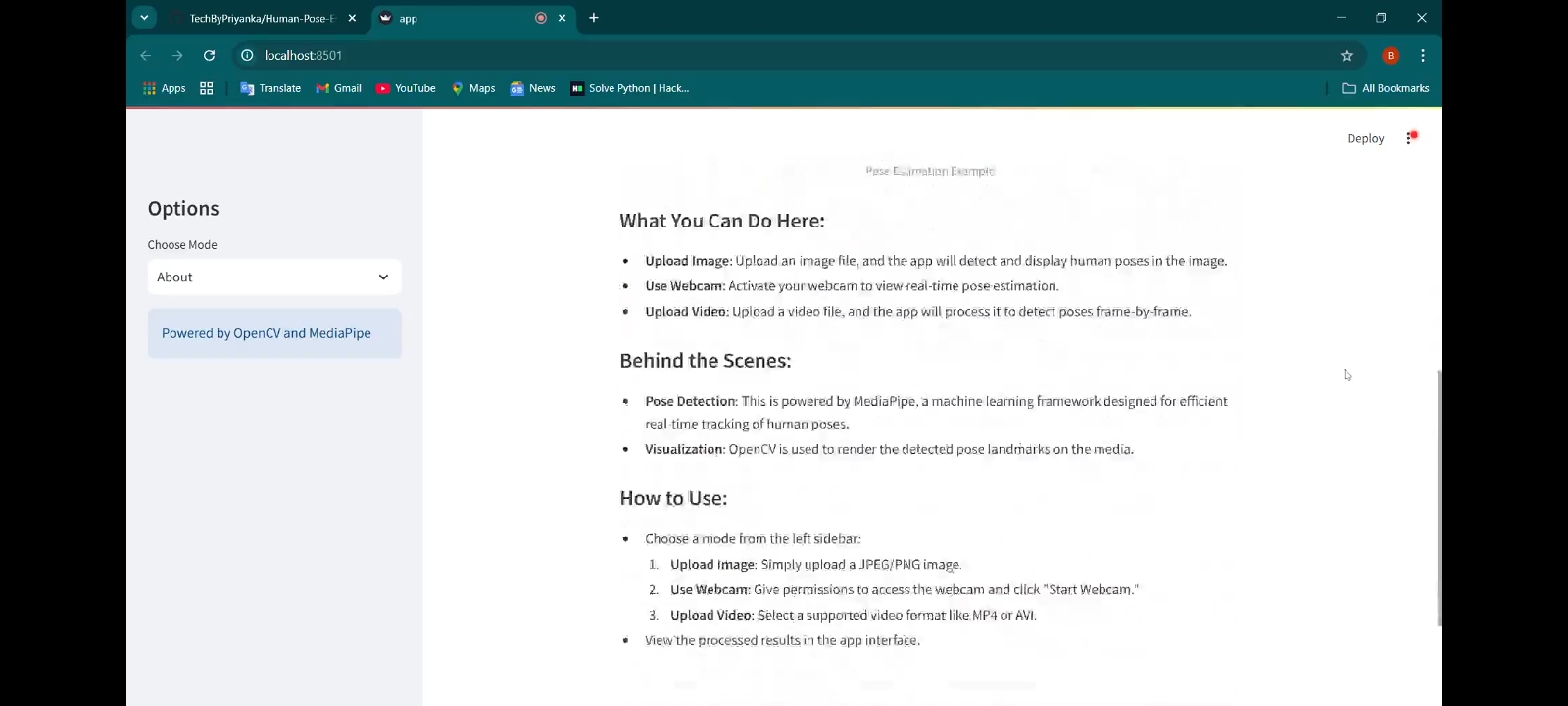

The objectives are to detect poses in static images, analyze video inputs frame by frame, and perform real-time pose estimation using a webcam. In addition, a user-friendly interface built with Streamlit allows seamless media uploads and live webcam usage. The methodology uses MediaPipe for fast and precise pose detection, which identifies key body landmarks, and OpenCV for rendering and visualizing the results clearly.

The tool successfully combines these technologies to deliver an interactive and lightweight solution that addresses real-world challenges. Key results demonstrate accurate pose detection across various input modes and a responsive interface that ensures ease of use.

In conclusion, this project bridges the gap between advanced computer vision techniques and practical applications, providing a strong foundation for further development. Future enhancements could include multi-person detection, activity recognition, and optimization for mobile use. The tool showcases how pose estimation can be applied effectively in everyday scenarios.

Methodology

1. Input Layer: Image/Video/Live Webcam Feed

The system accepts input in one of three forms:

・ A static image uploaded by the user.

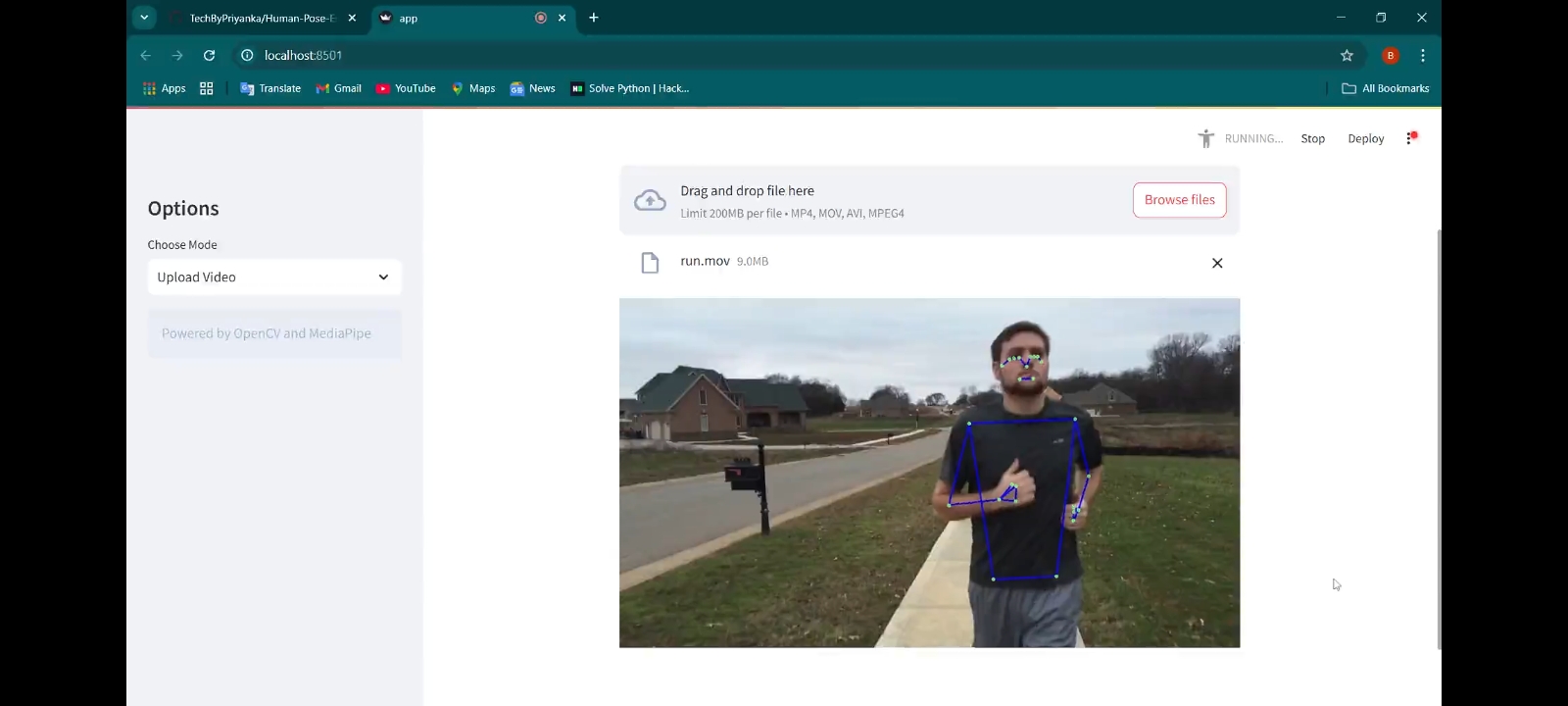

・ A pre-recorded video.

・ A real-time video stream from a webcam.
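The three input modes can share one processing loop if every source is turned into a stream of frames. The sketch below is a minimal illustration, assuming OpenCV (`cv2`) is used to read media, that a webcam is identified by an integer device index, and that the extension lists are illustrative only; `input_mode` and `frames` are hypothetical helper names, not the project's code.

```python
from pathlib import Path
from typing import Union

# Illustrative extension lists (assumption); extend as needed.
IMAGE_EXTS = {".jpg", ".jpeg", ".png", ".bmp"}
VIDEO_EXTS = {".mp4", ".avi", ".mov", ".mkv"}

Source = Union[int, str, Path]


def input_mode(source: Source) -> str:
    """Classify a source as 'webcam' (integer device index), 'image' or 'video' (by extension)."""
    if isinstance(source, int):
        return "webcam"
    ext = Path(source).suffix.lower()
    if ext in IMAGE_EXTS:
        return "image"
    if ext in VIDEO_EXTS:
        return "video"
    raise ValueError(f"unsupported input: {source!r}")


def frames(source: Source):
    """Yield BGR frames (NumPy arrays) from an image, a video file, or a webcam."""
    import cv2  # imported here so input_mode() works without OpenCV installed

    mode = input_mode(source)
    if mode == "image":
        image = cv2.imread(str(source))
        if image is None:
            raise FileNotFoundError(source)
        yield image  # a static image is treated as a one-frame stream
        return

    # VideoCapture accepts either a device index (webcam) or a file path (video).
    cap = cv2.VideoCapture(source if mode == "webcam" else str(source))
    try:
        while cap.isOpened():
            ok, frame = cap.read()
            if not ok:  # end of file, or the camera stopped delivering frames
                break
            yield frame
    finally:
        cap.release()
```

For example, `for frame in frames(0): ...` would read from the default webcam, while `frames("squat.mp4")` would step through a video file frame by frame; the downstream pose code then never needs to know which mode is active.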

2. Preprocessing Stage

・ Resize and normalize each image or video frame before detection.

・ Remove noise and enhance important features.

・ Convert the input into the format the model expects (for example, OpenCV loads images in BGR channel order, whereas MediaPipe expects RGB).
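A minimal sketch of these preprocessing steps, assuming frames arrive from OpenCV as BGR `uint8` arrays. The 640-pixel cap, the 5x5 Gaussian kernel, and all helper names are illustrative assumptions rather than the project's settings. MediaPipe's `Pose.process` takes an RGB `uint8` image and performs its own internal scaling, so the float normalization below only applies to models that expect normalized tensors.

```python
import numpy as np


def fit_within(width: int, height: int, max_side: int = 640) -> tuple:
    """Scale (width, height) so the longer side is at most max_side, keeping the aspect ratio."""
    scale = min(1.0, max_side / max(width, height))  # never upscale
    return max(1, round(width * scale)), max(1, round(height * scale))


def resize_frame(frame: np.ndarray, max_side: int = 640) -> np.ndarray:
    """Downscale a frame with OpenCV if it is larger than max_side."""
    import cv2

    h, w = frame.shape[:2]
    new_w, new_h = fit_within(w, h, max_side)
    if (new_w, new_h) == (w, h):
        return frame
    # cv2.resize takes the target size as (width, height); INTER_AREA suits shrinking.
    return cv2.resize(frame, (new_w, new_h), interpolation=cv2.INTER_AREA)


def denoise(frame: np.ndarray) -> np.ndarray:
    """Light Gaussian blur to suppress sensor noise (kernel size is an assumption)."""
    import cv2

    return cv2.GaussianBlur(frame, (5, 5), 0)


def to_rgb(frame_bgr: np.ndarray) -> np.ndarray:
    """Reverse the channel axis: OpenCV stores BGR, MediaPipe expects RGB uint8."""
    return np.ascontiguousarray(frame_bgr[..., ::-1])


def normalize(frame_rgb: np.ndarray) -> np.ndarray:
    """Scale uint8 pixels to float32 in [0, 1], for models that expect normalized input."""
    return frame_rgb.astype(np.float32) / 255.0
```

`to_rgb` is equivalent to `cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)`; the NumPy form is used here only so the conversion can be checked without OpenCV.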

3. Pose Estimation Model Processing

・ Detect key body joints (head, shoulders, elbows, wrists, hips, knees, ankles).

・ Use a deep learning pose model such as OpenPose, MediaPipe Pose, or PoseNet; this project uses MediaPipe Pose.

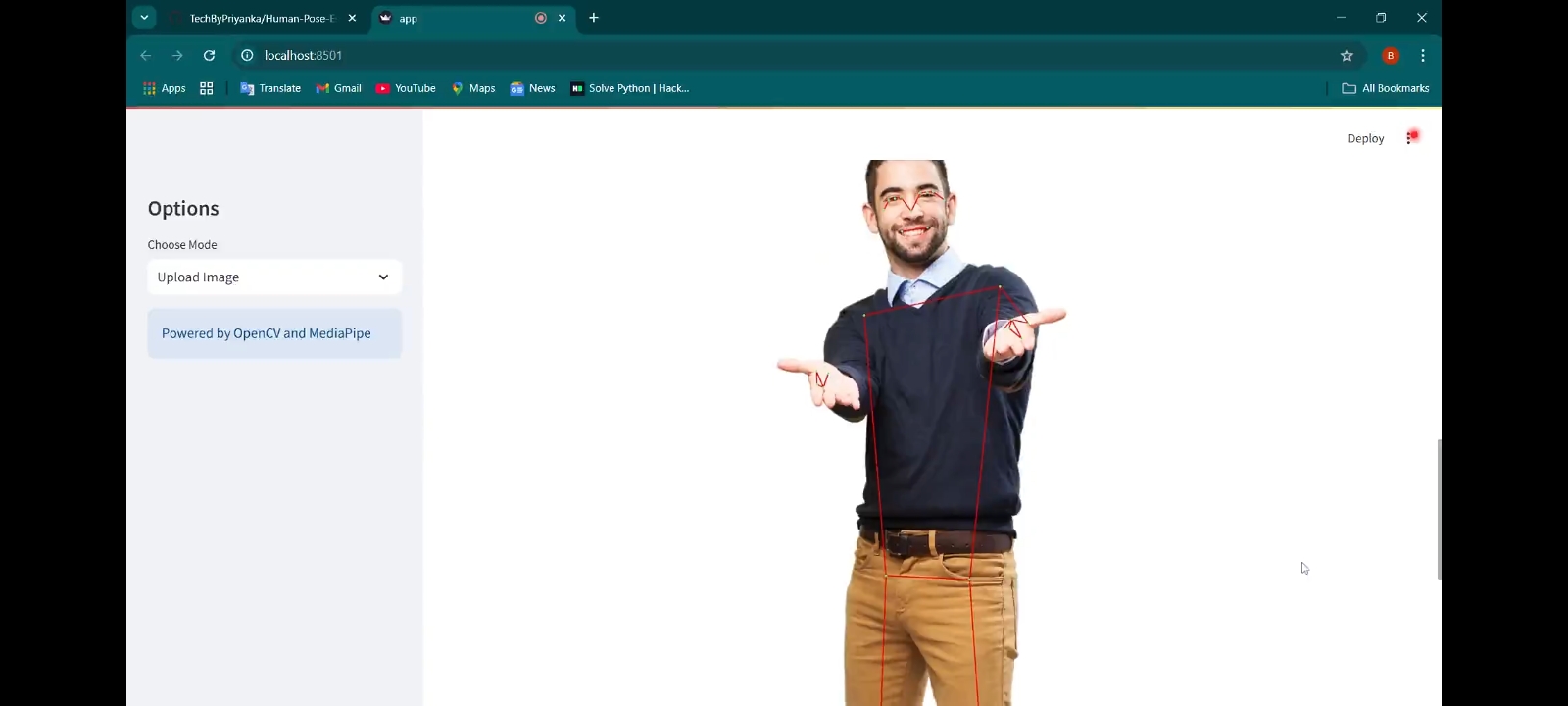
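The detection step with MediaPipe Pose might look roughly like the sketch below. It assumes the legacy Solutions API (`mp.solutions.pose.Pose`); newer MediaPipe releases favor the Tasks API (`PoseLandmarker`), so the exact calls may differ by version. The joint subset, the 0.5 thresholds, and the helper names `to_pixels` and `detect_key_joints` are illustrative assumptions. OpenCV and MediaPipe are imported inside the function so the coordinate helper can be used on its own.

```python
from typing import Dict, Tuple

# MediaPipe Pose outputs 33 landmarks; these indices cover the joints listed above.
KEY_JOINTS: Dict[str, int] = {
    "nose": 0,  # MediaPipe has no single "head" point; the nose stands in for it here
    "left_shoulder": 11, "right_shoulder": 12,
    "left_elbow": 13, "right_elbow": 14,
    "left_wrist": 15, "right_wrist": 16,
    "left_hip": 23, "right_hip": 24,
    "left_knee": 25, "right_knee": 26,
    "left_ankle": 27, "right_ankle": 28,
}

Point = Tuple[int, int]


def to_pixels(norm_xy: Dict[str, Tuple[float, float]], width: int, height: int) -> Dict[str, Point]:
    """MediaPipe reports x and y normalized to the image size; convert them to pixels."""
    return {name: (int(round(x * width)), int(round(y * height))) for name, (x, y) in norm_xy.items()}


def detect_key_joints(image_path: str, min_visibility: float = 0.5) -> Dict[str, Point]:
    """Run MediaPipe Pose on one image and return pixel positions of visible key joints."""
    import cv2
    import mediapipe as mp

    image = cv2.imread(image_path)
    if image is None:
        raise FileNotFoundError(image_path)

    # static_image_mode=True treats each call independently (no tracking between frames).
    with mp.solutions.pose.Pose(static_image_mode=True, min_detection_confidence=0.5) as pose:
        results = pose.process(cv2.cvtColor(image, cv2.COLOR_BGR2RGB))

    if results.pose_landmarks is None:  # no person found
        return {}

    landmarks = results.pose_landmarks.landmark
    visible = {
        name: (landmarks[i].x, landmarks[i].y)
        for name, i in KEY_JOINTS.items()
        if landmarks[i].visibility >= min_visibility
    }
    height, width = image.shape[:2]
    return to_pixels(visible, width, height)
```

For video or webcam input, the same `Pose` object would typically be created once with `static_image_mode=False` and reused across frames, which lets MediaPipe track the person between frames instead of re-detecting from scratch.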

4. Skeleton Formation and Visualization

・ Connect detected key points with colored lines and markers (e.g., green lines for limbs, red dots for joints).

・ The system overlays these skeletal structures onto the original input.

・ Generate a visual output showing detected poses.
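The overlay can be drawn directly with OpenCV's `cv2.line` and `cv2.circle`. The sketch below assumes joints are given as a name-to-pixel dictionary; the `LIMBS` list is a hypothetical subset of MediaPipe's `POSE_CONNECTIONS`, and the green/red scheme mirrors the example above. MediaPipe also provides `mp.solutions.drawing_utils.draw_landmarks` for this; drawing manually simply keeps the color choices explicit.

```python
from typing import Dict, List, Tuple

Point = Tuple[int, int]

# Illustrative limb list over named joints (a subset of MediaPipe's POSE_CONNECTIONS).
LIMBS: List[Tuple[str, str]] = [
    ("left_shoulder", "right_shoulder"),
    ("left_shoulder", "left_elbow"), ("left_elbow", "left_wrist"),
    ("right_shoulder", "right_elbow"), ("right_elbow", "right_wrist"),
    ("left_shoulder", "left_hip"), ("right_shoulder", "right_hip"),
    ("left_hip", "right_hip"),
    ("left_hip", "left_knee"), ("left_knee", "left_ankle"),
    ("right_hip", "right_knee"), ("right_knee", "right_ankle"),
]

GREEN = (0, 255, 0)  # OpenCV colors are (B, G, R)
RED = (0, 0, 255)


def limb_segments(joints: Dict[str, Point], limbs=LIMBS) -> List[Tuple[Point, Point]]:
    """Keep only limbs whose two endpoints were both detected."""
    return [(joints[a], joints[b]) for a, b in limbs if a in joints and b in joints]


def draw_skeleton(image, joints: Dict[str, Point]):
    """Return a copy of `image` with green limb lines and red joint dots overlaid."""
    import cv2

    canvas = image.copy()  # leave the original frame untouched
    for p1, p2 in limb_segments(joints):
        cv2.line(canvas, p1, p2, GREEN, 2)
    for point in joints.values():
        cv2.circle(canvas, point, 4, RED, -1)  # thickness -1 draws a filled circle
    return canvas
```

Skipping limbs with a missing endpoint avoids drawing lines to stale or guessed positions when part of the body is occluded.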

5. Result Analysis and Applications

・ Users can analyze posture and movement and track key physical attributes.

・ Applications include fitness tracking, sports analytics, motion recognition, and augmented reality (AR).
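As one hedged illustration of tracking physical attributes for fitness use, a joint angle can be computed from three keypoints and fed into a simple repetition counter. This analysis is not described in the project itself; `joint_angle`, `count_reps`, and the 90/160 degree thresholds are hypothetical and would need tuning for a real exercise.

```python
import math
from typing import Iterable, Tuple

Point = Tuple[float, float]


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Angle in degrees at joint b between segments b->a and b->c (e.g. hip-knee-ankle)."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1, n2 = math.hypot(*v1), math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        raise ValueError("joint coincides with a neighbouring point")
    cos_theta = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    # Clamp to [-1, 1] so floating-point error cannot push acos out of its domain.
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_theta))))


def count_reps(angles: Iterable[float], down: float = 90.0, up: float = 160.0) -> int:
    """Count repetitions: a rep is a dip below `down` followed by a return above `up`."""
    reps, lowered = 0, False
    for angle in angles:
        if angle < down:
            lowered = True
        elif angle > up and lowered:
            reps += 1
            lowered = False
    return reps
```

Using two thresholds instead of one adds hysteresis, so small jitter in the detected keypoints around a single cut-off does not register as extra repetitions.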

Result