Abstract

This project presents a robotic hand that mimics human finger movements in real time using computer vision. A Python program uses OpenCV and MediaPipe to detect hand landmarks and compute finger positions and angles from video frames. These values are sent over a serial connection to an Arduino Uno that drives five MG90S servos, so that the robotic hand imitates the user's hand gestures. Two modes of operation are demonstrated: Biomimic (continuous angular tracking) and LinearMimic (binary open/closed gestures).

Introduction

Robotic prosthetics and remote manipulators benefit from intuitive control mechanisms. Integrating computer vision with robotic control provides natural and responsive human-machine interaction. This project demonstrates how finger tracking with a simple webcam and an affordable microcontroller can drive a robotic system. The presented biomimetic hand combines Python-based gesture recognition with Arduino-driven servos to replicate human hand motions.

Methods and Implementation

Hardware Setup

- Arduino Uno
- MG90S Servos
- 5–6 V External DC Power Supply
- Webcam (or built-in camera)

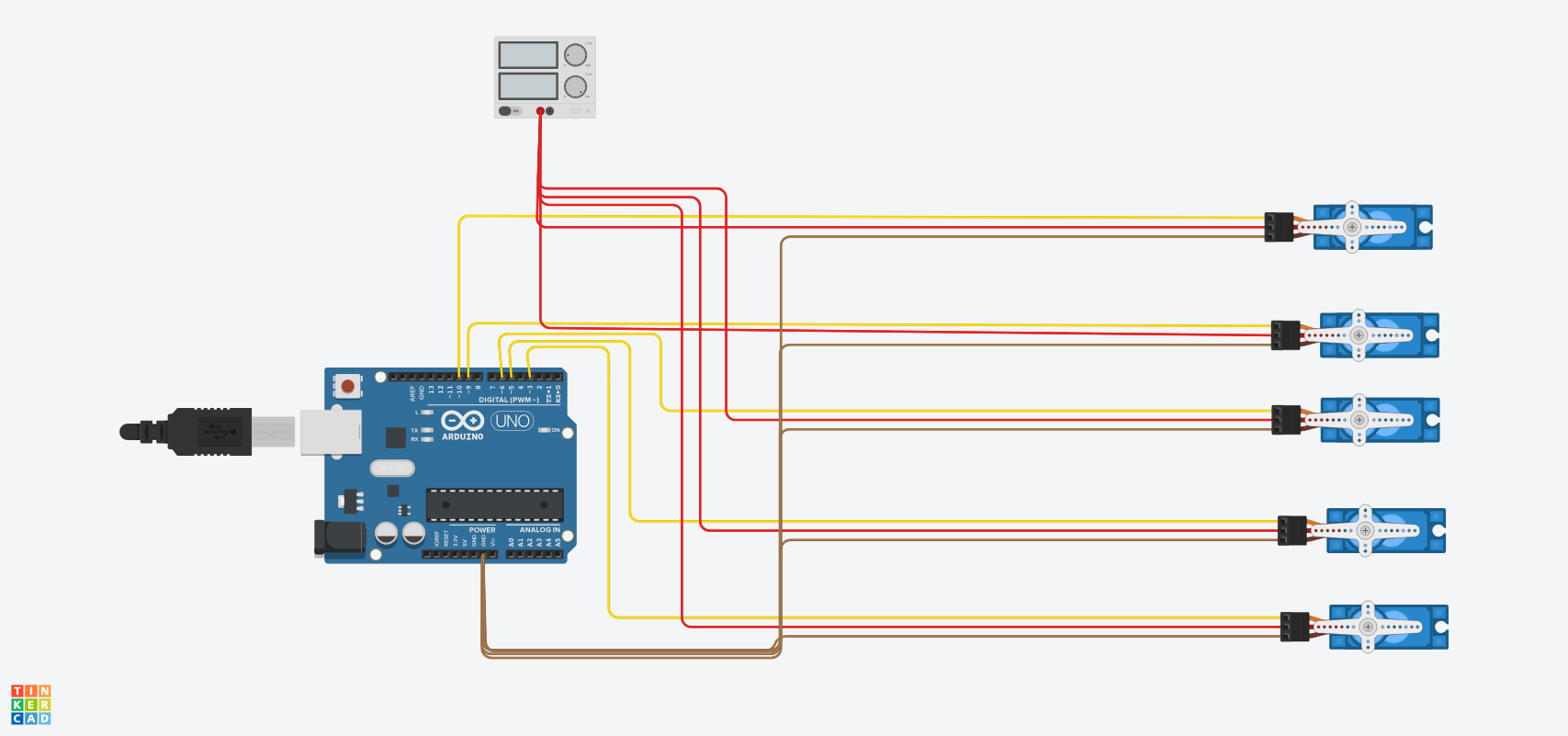

Circuit Diagram

- Power lines of servos connected to an external 5–6 V DC supply.
- Ground lines of servos and Arduino Uno connected together to common ground.
- Servo signal lines connected individually to Arduino PWM pins (3, 5, 6, 9, 10).

Software Implementation

Arduino Implementation

The Arduino receives servo position data from Python in real time via a serial connection. It processes the incoming angles (ranging from 0° to 180°) and immediately actuates the corresponding servo motors, so the hand follows the detected gestures without buffering. Two modes, Biomimic and LinearMimic, allow for nuanced motion control or simple binary gestures, respectively.
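The message handling on the receiving side can be modeled on the host for testing. The sketch below is a Python reference model, not the Arduino firmware itself. It assumes each message is one comma-separated line of five integer angles (the format described under Python Computer Vision), and the name `parse_angle_line` is hypothetical. Malformed lines are rejected and values are clamped to the 0° to 180° range.

```python
from typing import List, Optional

NUM_FINGERS = 5  # one servo per finger, thumb to pinky


def parse_angle_line(line: str) -> Optional[List[int]]:
    """Parse one serial line such as '0,90,180,45,10' into five servo angles.

    Returns None for malformed input so the caller can keep the previous pose.
    """
    parts = line.strip().split(",")
    if len(parts) != NUM_FINGERS:
        return None
    try:
        angles = [int(part) for part in parts]
    except ValueError:
        return None
    # Clamp every value to the range a servo can be commanded to.
    return [max(0, min(180, angle)) for angle in angles]
```

Returning `None` instead of raising is a design choice for this sketch: a dropped or partial line then leaves the servos where they were rather than interrupting the control loop.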

Python Computer Vision

The project's core functionality relies on Python scripts that use OpenCV for video frame handling and Google's MediaPipe for hand tracking. MediaPipe is a machine learning framework for real-time perception pipelines; its hand-tracking solution reports the positions of 21 key points (landmarks) on each detected hand.

In each video frame captured by OpenCV, MediaPipe identifies landmarks that correspond to specific points on the hand, such as the wrist, knuckles, finger joints, and fingertips. Each landmark has x and y coordinates normalized to the frame width and height, plus a z value giving depth relative to the wrist. This application focuses on the fingertip landmarks (indices 4, 8, 12, 16, and 20) and one lower joint per finger (indices 3, 6, 10, 14, and 18). In MediaPipe's numbering these lower joints are the thumb IP joint and the PIP joints of the other four fingers; this report refers to them collectively as DIP joints.
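As an illustration of how these indices might be used, the following sketch pairs each fingertip with its reference joint. The index values come from the text; the names `FINGER_NAMES`, `TIP_IDS`, `JOINT_IDS`, and `tip_joint_pairs` are hypothetical, and the stand-in landmarks are only there so the example runs without a camera.

```python
from types import SimpleNamespace

# Landmark indices as listed in the text (MediaPipe hand model numbering).
FINGER_NAMES = ("thumb", "index", "middle", "ring", "pinky")
TIP_IDS = (4, 8, 12, 16, 20)
JOINT_IDS = (3, 6, 10, 14, 18)


def tip_joint_pairs(landmarks):
    """Return {finger_name: (tip_landmark, joint_landmark)} for one hand."""
    if len(landmarks) != 21:
        raise ValueError("expected 21 hand landmarks")
    return {
        name: (landmarks[tip], landmarks[joint])
        for name, tip, joint in zip(FINGER_NAMES, TIP_IDS, JOINT_IDS)
    }


# Stand-in landmarks for illustration; real ones would come from MediaPipe.
fake_hand = [SimpleNamespace(x=0.5, y=i / 21, z=0.0) for i in range(21)]
pairs = tip_joint_pairs(fake_hand)
```

Any sequence of 21 objects with `x`, `y`, and `z` attributes works here, which keeps the pairing logic independent of the detection library.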

The Python script processes the relative positions of these landmarks to quantify the gesture of each finger. It calculates the vertical distance between each fingertip and its respective DIP joint to determine finger extension. Depending on the mode, this calculation either results in continuous angular positioning (Biomimic mode) or binary open/closed detection (LinearMimic mode). After determining the appropriate angles for each finger, these numerical values are serialized into a comma-separated string and transmitted to the Arduino over a serial connection.

Internal Mechanism of CV Algorithm

The computer vision algorithm consists of several integrated steps. First, video frames are captured continuously from the camera using OpenCV’s video capture interface, typically at 30 frames per second. Each frame is then flipped horizontally so that the image is mirrored, which matches how users expect to see their own movements.

Each flipped frame is converted from BGR to RGB, since OpenCV delivers frames in BGR order while MediaPipe’s hand detection model expects RGB input. MediaPipe applies a neural-network-based model to each RGB frame and identifies the position and orientation of the hand landmarks within milliseconds. The x and y coordinates of the detected landmarks are normalized to the range 0 to 1 relative to the frame width and height, which makes positional relationships easy to calculate.
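The capture, flip, convert, and detect steps could be sketched as follows. This is a minimal example under stated assumptions, not the project's actual script: it assumes the `opencv-python` and `mediapipe` packages and MediaPipe's `mp.solutions.hands` interface, tracks a single hand, and uses the hypothetical names `to_points` and `iter_hand_points`.

```python
def to_points(hand_landmarks):
    """Convert one MediaPipe hand result into a list of (x, y, z) tuples."""
    return [(lm.x, lm.y, lm.z) for lm in hand_landmarks.landmark]


def iter_hand_points(camera_index=0):
    """Yield 21 (x, y, z) tuples for each frame in which a hand is detected."""
    # Imported lazily so to_points() can be used without a camera stack.
    import cv2
    import mediapipe as mp

    capture = cv2.VideoCapture(camera_index)
    try:
        # Assumption: one hand, default confidence thresholds.
        with mp.solutions.hands.Hands(max_num_hands=1) as hands:
            while capture.isOpened():
                ok, frame_bgr = capture.read()
                if not ok:
                    break
                mirrored = cv2.flip(frame_bgr, 1)  # 1 = mirror left/right
                frame_rgb = cv2.cvtColor(mirrored, cv2.COLOR_BGR2RGB)
                results = hands.process(frame_rgb)
                if results.multi_hand_landmarks:
                    yield to_points(results.multi_hand_landmarks[0])
    finally:
        capture.release()  # free the camera even if the consumer stops early
```

Writing the loop as a generator keeps camera handling separate from the angle computation, so the caller can simply iterate over detected hands.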

For finger movement detection, the algorithm computes the vertical difference between each fingertip and its respective DIP joint. In Biomimic mode, this displacement is divided by a calibration factor (determined experimentally to be about 0.2) and mapped to a servo angle between 0° and 180°. Read as a signed quantity (fingertip y minus joint y, with image y increasing downward), smaller displacements represent a more open finger and give angles close to 0°, while larger displacements indicate a closed or bent finger and give angles closer to 180°.
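One way such a mapping might look is sketched below. The exact formula used in the project is not given, so this is an assumption: the displacement is taken as fingertip y minus joint y (image y grows downward), divided by the 0.2 calibration factor, clamped to the range 0 to 1, and scaled to degrees. The name `biomimic_angle` and the `scale` parameter are hypothetical.

```python
def biomimic_angle(tip_y: float, joint_y: float, scale: float = 0.2) -> int:
    """Map signed vertical displacement to a servo angle in [0, 180].

    Assumption: displacement = tip_y - joint_y in normalized image
    coordinates, where y grows downward. An extended finger (tip above
    the joint) gives a negative value and therefore 0 degrees.
    """
    displacement = tip_y - joint_y
    # Normalize by the calibration factor and clamp to [0, 1].
    fraction = max(0.0, min(1.0, displacement / scale))
    return round(fraction * 180)
```

Clamping before scaling guarantees that the value sent to the servo never leaves its valid range, whatever the camera geometry; the calibration factor would likely need retuning for a different camera distance.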

Conversely, LinearMimic mode replaces the continuous mapping with a fixed threshold. If the fingertip lies above the DIP joint by more than a predetermined threshold (e.g., 0.02 normalized units), the finger is considered open (servo angle 0°). Otherwise, it is considered closed (servo angle 180°). This mode simplifies the per-finger computation and provides quick, binary gesture recognition suitable for simplified controls or interactions.
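The threshold rule can be written in a few lines. The sketch below assumes normalized image coordinates in which y grows downward, so "above" means a smaller y value; the function name `linear_mimic_angle` is hypothetical.

```python
def linear_mimic_angle(tip_y: float, joint_y: float, threshold: float = 0.02) -> int:
    """Return 0 (open) or 180 (closed) for one finger."""
    # Image y grows downward, so a fingertip above the joint has a smaller y.
    height_above_joint = joint_y - tip_y
    return 0 if height_above_joint > threshold else 180
```

For example, a fingertip at y = 0.40 with its joint at y = 0.50 is well above the threshold and counts as open, while a fingertip at or below the joint counts as closed.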

After these computations, the angles for all five fingers are packaged into a formatted string message. This message is then transmitted via a serial connection to the Arduino, which directly translates these commands into physical servo rotations.
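A possible shape for this packaging step is shown below. The comma-separated layout follows the description in the text; the trailing newline as a message terminator, the ASCII encoding, and the names `format_message` and `send_angles` are assumptions of this sketch. An in-memory buffer stands in for the serial port so the example runs without hardware.

```python
import io


def format_message(angles) -> bytes:
    """Encode five finger angles as one comma-separated, newline-terminated line."""
    if len(angles) != 5:
        raise ValueError("expected one angle per finger (5 values)")
    clamped = [max(0, min(180, int(angle))) for angle in angles]
    return (",".join(str(angle) for angle in clamped) + "\n").encode("ascii")


def send_angles(port, angles) -> None:
    """Write one message to any object with a write(bytes) method."""
    port.write(format_message(angles))


# Demonstration with an in-memory buffer standing in for the serial port.
buffer = io.BytesIO()
send_angles(buffer, [0, 90, 180, 45, 10])
```

With real hardware, `port` could be a pyserial `serial.Serial` object opened on the Arduino's port; the port name and baud rate depend on the setup and must match the firmware. Opening the port typically resets an Uno, so a short pause before the first message may be needed.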

Results and Discussion

Testing demonstrated robust and responsive tracking performance. Biomimic mode provided precise and fluid replication of finger movements, ideal for sophisticated robotic control scenarios or assistive technologies requiring detailed gesture interpretation. LinearMimic mode proved highly effective for basic interactions, rapidly responding to open/closed gestures.

However, some limitations were noted, primarily slight delays (approximately 100 ms latency), largely influenced by webcam processing speed and system resource constraints. Optimal performance required clear lighting conditions and steady camera placement.

Conclusion and Future Directions

The developed robotic hand successfully integrates computer vision with microcontroller-driven servos, effectively replicating human gestures. Future improvements could include optimization of video processing pipelines, use of faster detection models, integration of multiple sensors to enhance reliability, and wireless serial communication for enhanced user freedom.

References

MediaPipe Framework

OpenCV Documentation

Arduino Servo Library Documentation

Appendix: Project Files

The Arduino and Python scripts are available on the project GitHub repository.