This publication presents an agentic AI system designed to automate insurance policy cancellation workflows using a multi-agent architecture. The system coordinates specialized agents responsible for intent recognition, policy validation, eligibility assessment, and execution, enabling efficient and scalable decision-making.

Built with a modular and extensible design, the solution combines rule-based validation with large language model (LLM)-driven reasoning to handle complex, real-world scenarios. The architecture improves transparency, maintainability, and adaptability compared to monolithic automation approaches.

This work demonstrates how multi-agent systems can enhance operational efficiency, reduce manual intervention, and provide a scalable foundation for enterprise AI automation in the insurance domain.

The manual processing of insurance policy cancellations is often slow, error-prone, and resource-intensive, requiring careful verification of policy details, eligibility checks, and calculation of refunds. To address these challenges, this project introduces a Multi-Agent Insurance Policy Cancellation System, which automates the end-to-end workflow while maintaining reliability, traceability, and compliance with business rules.

Purpose

The primary purpose of this system is to streamline and automate the cancellation process for insurance policies. By leveraging multiple specialized AI agents, the system reduces human effort, minimizes errors, and ensures consistent application of business rules throughout the workflow.

Objectives

The system is designed to achieve the following objectives:

Overall, the system uses large language models (LLMs), tool integrations, validation guardrails, a test strategy, and workflow orchestration to process user requests safely and produce structured cancellation outcomes. Using mock data stored in CSV files, multiple specialized agents collaborate to validate policy details, evaluate cancellation eligibility against predefined business rules, calculate refundable amounts, log refund records, and generate a professional cancellation notice in PDF format. A Streamlit UI makes the system interactive and easier to use.

This system is an AI-driven, multi-agent workflow engine that processes insurance cancellation requests in a structured, validated, and secure manner.

Problem Statement

Traditional insurance cancellation involves substantial manual effort, including manual data verification, and carries a high risk of inconsistent validation. This agentic AI system addresses these problems by providing automated, structured intake of cancellation requests; intelligent validation and policy verification; risk and compliance checks; and safe, auditable decision workflows.

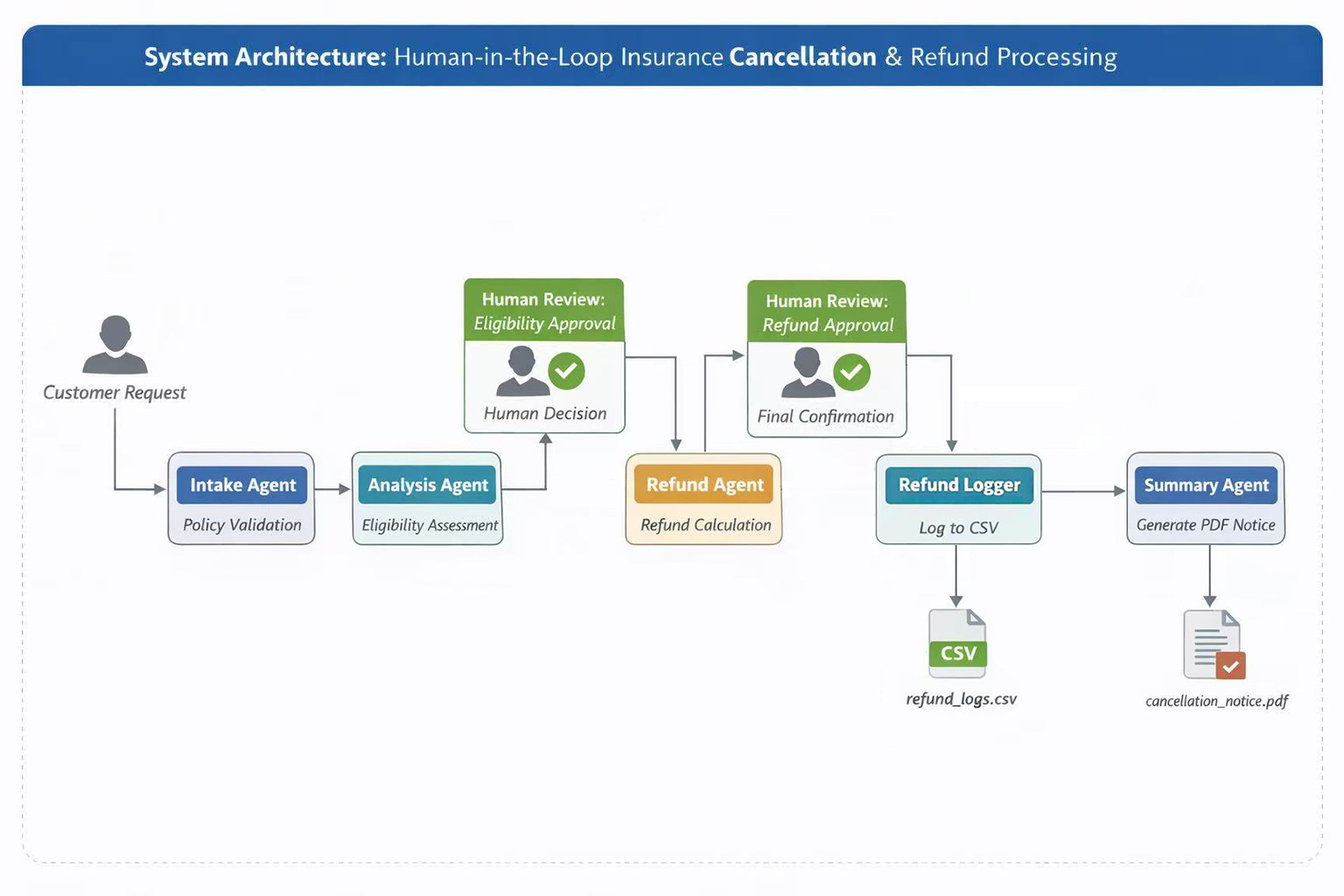

Architecture Diagram

Core Components

This system uses a multi-agent, tool-augmented LLM architecture designed to safely automate structured insurance cancellation workflows.

Rather than relying on a single monolithic prompt, the system decomposes the workflow into role-specialised agents, deterministic routing logic, tool-augmented reasoning, and guardrail-enforced structured outputs.

Whether a policy cancellation can proceed is determined by the following rules:

Only policies that satisfy all rules are eligible for cancellation and refund processing.

This project uses mock data. All customer and policy data is stored in a CSV file named insurance_policies.csv, available at the link below:

https://github.com/jingozuo/AAIDC_Project3_JZ/blob/main/data/insurance_policies.csv

Data Lookup Tool

This custom data lookup tool verifies that a policy exists by querying the mock CSV dataset.
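A lookup of this kind can be sketched with Python's standard csv module. This is a minimal illustration rather than the project's actual tool: the function name `lookup_policy`, the case-insensitive matching, and the sample rows are assumptions; only the column names follow the policy schema used in this publication.

```python
import csv
import io
from typing import Optional


def lookup_policy(csv_file, policy_number: str) -> Optional[dict]:
    """Return the first row whose policy_number matches, or None if absent.

    Matching ignores surrounding whitespace and letter case (an assumption).
    """
    wanted = policy_number.strip().upper()
    for row in csv.DictReader(csv_file):
        if row.get("policy_number", "").strip().upper() == wanted:
            return row
    return None


# Invented in-memory rows standing in for insurance_policies.csv.
SAMPLE_CSV = (
    "policy_number,first_name,last_name,policy_status\n"
    "POL1001,Ada,Lovelace,active\n"
    "POL1002,Alan,Turing,expired\n"
)

found = lookup_policy(io.StringIO(SAMPLE_CSV), " pol1001 ")
missing = lookup_policy(io.StringIO(SAMPLE_CSV), "POL9999")
```

Returning `None` for an unknown policy number lets the calling agent decide how to re-prompt the customer instead of raising an exception.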

Check Cancellation Eligibility Tool

This tool evaluates whether a policy is eligible for cancellation based on the policy's status, payment, and dates.

Refund Calculator Tool

Once a policy is confirmed as eligible for a refund, this tool computes the refund amount from the policy dates and payment only.

Refund Log Tool

This tool appends one new refund record to the output CSV file as evidence.
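Appending a single record to a CSV log might look like the sketch below. The column names in `LOG_FIELDS`, the file name, and the function name `append_refund_record` are hypothetical; the project's actual log schema is not reproduced here.

```python
import csv
import os
import tempfile
from datetime import datetime, timezone

# Hypothetical columns; the real refund log may record different fields.
LOG_FIELDS = ["policy_number", "refund_amount", "logged_at"]


def append_refund_record(path: str, policy_number: str, refund_amount: float) -> None:
    """Append one refund row, writing the header only for a new or empty file."""
    write_header = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, "a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=LOG_FIELDS)
        if write_header:
            writer.writeheader()
        writer.writerow({
            "policy_number": policy_number,
            "refund_amount": f"{refund_amount:.2f}",
            "logged_at": datetime.now(timezone.utc).isoformat(),
        })


# Demonstration in a temporary directory so nothing is left on disk.
with tempfile.TemporaryDirectory() as tmp:
    log_path = os.path.join(tmp, "refund_log.csv")
    append_refund_record(log_path, "POL1001", 250.0)
    append_refund_record(log_path, "POL1002", 99.5)
    with open(log_path, newline="", encoding="utf-8") as f:
        rows = list(csv.DictReader(f))
```

Opening the file in append mode keeps earlier records untouched, which suits a log that serves as evidence.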

Notice Generator Tool

The last step for processing insurance cancellation is to generate a PDF notice with all relevant details for the user.

{ "policy_number": "string", "first_name": "string", "last_name": "string", "start_date": "date", "end_date": "date", "policy_status": "string", "payment_amount": "string", "is_payment_made": "boolean" }

If the provided policy number is found in the mock dataset, the agent retrieves the policy details and asks the customer to confirm that they are correct. If the policy number cannot be found, the agent asks the customer to enter the correct policy number.

Analysis Agent

The Analysis Agent determines whether the policy is eligible for cancellation. It uses the Check Cancellation Eligibility Tool to check whether the policy is active, whether the payment has been made, and whether the current date is before the policy end date.
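The three checks described above can be expressed as a small pure function. The sketch below is illustrative only: the function name `check_eligibility`, the status value "active", and the wording of the rejection reasons are assumptions; the field names follow the policy schema.

```python
from datetime import date


def check_eligibility(policy: dict, today: date) -> tuple[bool, list[str]]:
    """Apply the three eligibility checks and collect a reason for each failure."""
    reasons = []
    if policy["policy_status"].lower() != "active":  # assumed status value
        reasons.append("policy is not active")
    if not policy["is_payment_made"]:
        reasons.append("payment has not been made")
    if today >= policy["end_date"]:
        reasons.append("policy end date has passed")
    return (not reasons, reasons)


active_policy = {
    "policy_status": "active",
    "is_payment_made": True,
    "end_date": date(2026, 1, 1),
}
unpaid_expired_policy = {
    "policy_status": "active",
    "is_payment_made": False,
    "end_date": date(2025, 1, 1),
}

eligible_result = check_eligibility(active_policy, today=date(2025, 6, 1))
rejected_result = check_eligibility(unpaid_expired_policy, today=date(2025, 6, 1))
```

Returning the list of failed checks alongside the boolean gives the human reviewer at the next checkpoint a concrete explanation of the decision.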

Refund Agent

The Refund Agent automatically computes the refund amount for the policy using the Refund Calculator Tool. Refunds are calculated using a pro-rata approach based on the remaining policy duration and the total payment amount.
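A pro-rata calculation can be sketched as the payment multiplied by the fraction of the policy term that remains. The version below assumes day-level granularity, half-up rounding to two decimals, and a hypothetical function name `pro_rata_refund`; the project's tool may differ on each of these points.

```python
from datetime import date
from decimal import Decimal, ROUND_HALF_UP


def pro_rata_refund(start_date: date, end_date: date, cancel_date: date,
                    payment_amount: Decimal) -> Decimal:
    """Refund = payment * remaining_days / total_days, rounded to cents."""
    total_days = (end_date - start_date).days
    if total_days <= 0:
        raise ValueError("end_date must be after start_date")
    # Clamp so that cancelling before the start or after the end stays in range.
    remaining_days = (end_date - max(cancel_date, start_date)).days
    remaining_days = max(0, min(remaining_days, total_days))
    refund = payment_amount * Decimal(remaining_days) / Decimal(total_days)
    return refund.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# A 10-day policy cancelled with 5 days left refunds half of the payment.
refund = pro_rata_refund(date(2025, 1, 1), date(2025, 1, 11),
                         date(2025, 1, 6), Decimal("100.00"))

# Cancelling after the end date leaves nothing to refund.
late_refund = pro_rata_refund(date(2025, 1, 1), date(2025, 1, 11),
                              date(2025, 2, 1), Decimal("100.00"))
```

Using `Decimal` rather than `float` avoids binary rounding surprises in monetary amounts.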

Logger Agent

The Logger Agent persists approved refund decisions to a CSV file stored in the output directory. This agent uses the Refund Log Tool, a custom CSV logging tool, and runs only after a human has approved the refund. Logged data includes:

The system also includes human-in-the-loop interactions as key decision boundaries within the flow to enhance safety, accountability, and trust. There are two human-in-the-loop checkpoints in the workflow.

Human Review – Eligibility (HITL)

This checkpoint follows the Analysis Agent. A human reviewer examines the eligibility decision and explicitly approves or rejects the policy cancellation. If the reviewer approves, the workflow proceeds to the Refund Agent; otherwise, the workflow terminates safely.

Human Review – Refund (HITL)

A human review checkpoint follows the Refund Agent, ensuring that financial outcomes are verified before any permanent record is created. The workflow proceeds to the Logger Agent only when the refund is approved; otherwise, it terminates safely.
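The two checkpoints amount to a deterministic routing rule: approval advances the workflow, rejection ends it. A minimal sketch follows; the node names in `APPROVAL_ROUTES` and the function `route_after_review` are hypothetical labels, not identifiers from the project's graph.

```python
# Hypothetical node names: (next node on approval, next node on rejection).
APPROVAL_ROUTES = {
    "eligibility_review": ("refund", "end"),
    "refund_review": ("logger", "end"),
}


def route_after_review(checkpoint: str, approved: bool) -> str:
    """Pick the next node from a fixed table; no LLM is involved in routing."""
    on_approve, on_reject = APPROVAL_ROUTES[checkpoint]
    return on_approve if approved else on_reject


after_eligibility_ok = route_after_review("eligibility_review", approved=True)
after_refund_rejected = route_after_review("refund_review", approved=False)
```

Keeping the routing in a plain lookup table makes every possible transition visible at a glance and easy to cover with unit tests.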

DeepEval is used in this project to evaluate the insurance cancellation workflow. The evaluation covers five dimensions:

The system implements a multi-layered safety architecture combining:

The system enforces strict behavioral constraints directly inside prompt_config.yaml.

It is from "intake_assistant_prompt".

Behavioral Restrictions:

Security Benefits:

It is from "summary_assistant_prompt".

Explicit Constraints:

Security Benefits:

The system implements input validation and sanitization in guardrails_safety.py.

LLM-generated summaries are validated using validate_notice_output().

Validations performed:

The system is designed to handle errors safely and continue running when possible, while also logging problems so they can be traced later.

The system uses a retry mechanism for temporary failures. If certain operations fail (such as tool calls or calls to the AI model), the system automatically retries up to 3 times. The retry logic is used for:

All guardrail events are logged to the file guardrails_compliance.jsonl.

Logged events include:

Each log entry contains structured information such as:

Example:

{"timestamp": "2026-03-01T22:23:31.894994+00:00", "event_type": "output_validation", "stage": "summary", "message": "Notice text validated", "validated": true}
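An entry with the same fields as the example above could be produced as sketched here. The function name `write_guardrail_event` is an assumption, and the sketch writes to an in-memory buffer rather than to guardrails_compliance.jsonl.

```python
import io
import json
from datetime import datetime, timezone


def write_guardrail_event(stream, event_type: str, stage: str,
                          message: str, validated: bool) -> dict:
    """Write one JSON object per line (JSONL) with a UTC ISO-8601 timestamp."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event_type": event_type,
        "stage": stage,
        "message": message,
        "validated": validated,
    }
    stream.write(json.dumps(entry) + "\n")
    return entry


# In-memory buffer standing in for the log file.
buffer = io.StringIO()
write_guardrail_event(buffer, "output_validation", "summary",
                      "Notice text validated", True)
parsed = json.loads(buffer.getvalue().splitlines()[0])
```

Because each line is a complete JSON object, the log can be appended to safely and parsed line by line during an audit.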

Security Benefits:

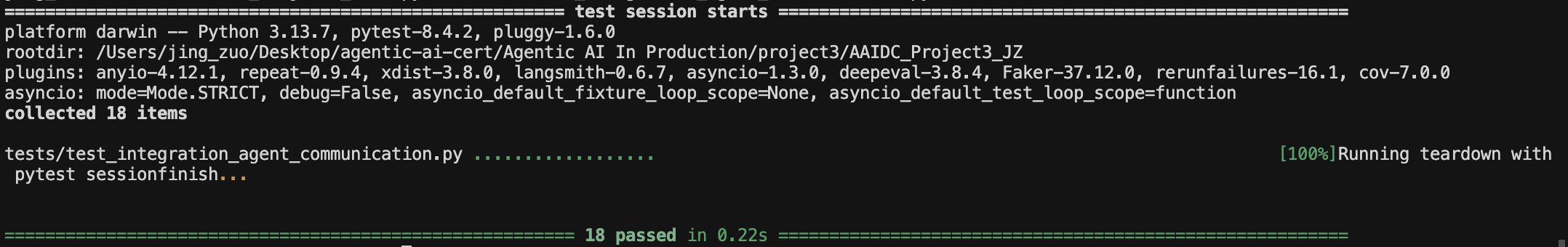

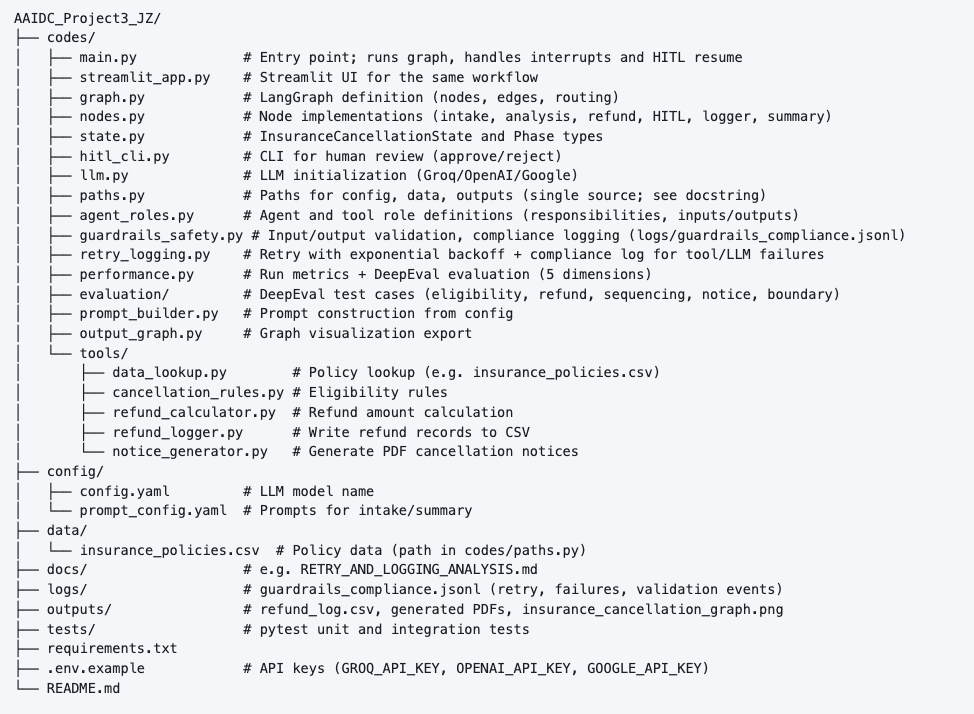

The system implements a structured, production-grade testing strategy using pytest, organized into:

The test suite is modular and maps directly to system components.

conftest.py run_tests.py test_e2e_system_flows.py test_health.py test_integration_agent_communication.py test_nodes.py test_prompt_builder.py test_retry_logging.py test_tools_cancellation_rules.py test_tools_data_lookup.py test_tools_notice_generator.py test_tools_refund_calculator.py test_tools_refund_logger.py test_utils.py

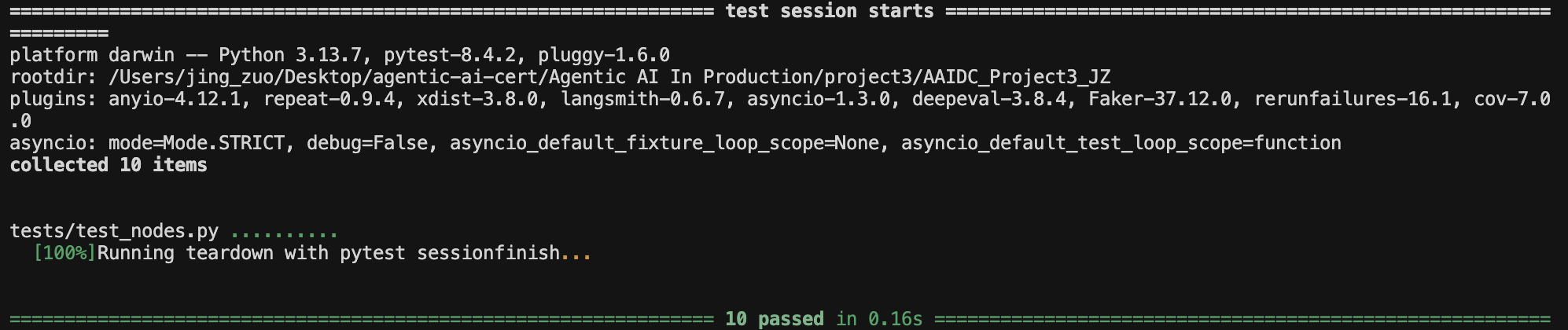

Agent Node Test

This test validates each agent node's function: execution logic, output schema compliance, proper state transitions, and failure handling inside nodes. It ensures that each agent produces structured output and that invalid inputs are rejected.

Result:

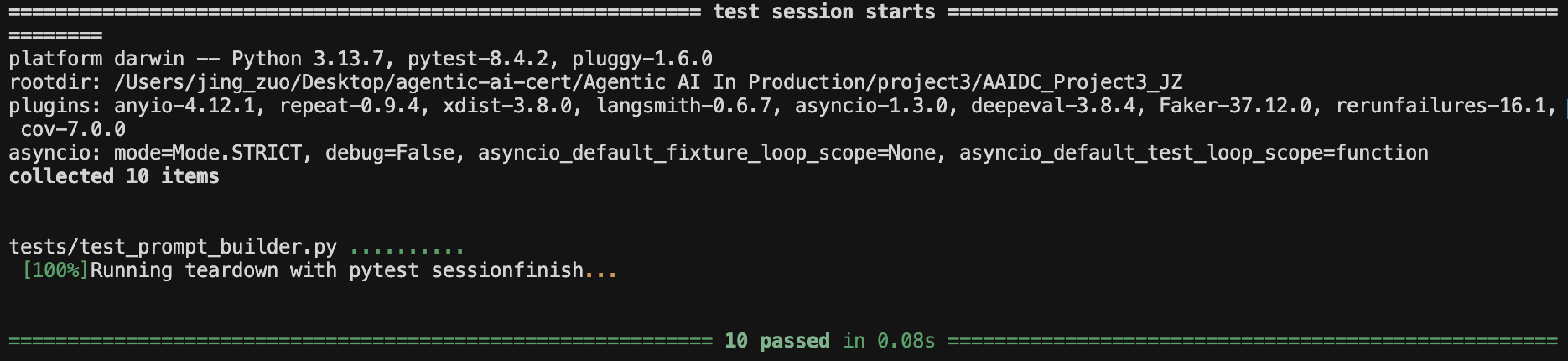

Prompt Construction Test

The test validates whether prompt templates are correctly formatted, whether required system instructions are included, and whether JSON schema constraints are injected. The purpose is to prevent prompt drift and regression when modifying templates.

Result:

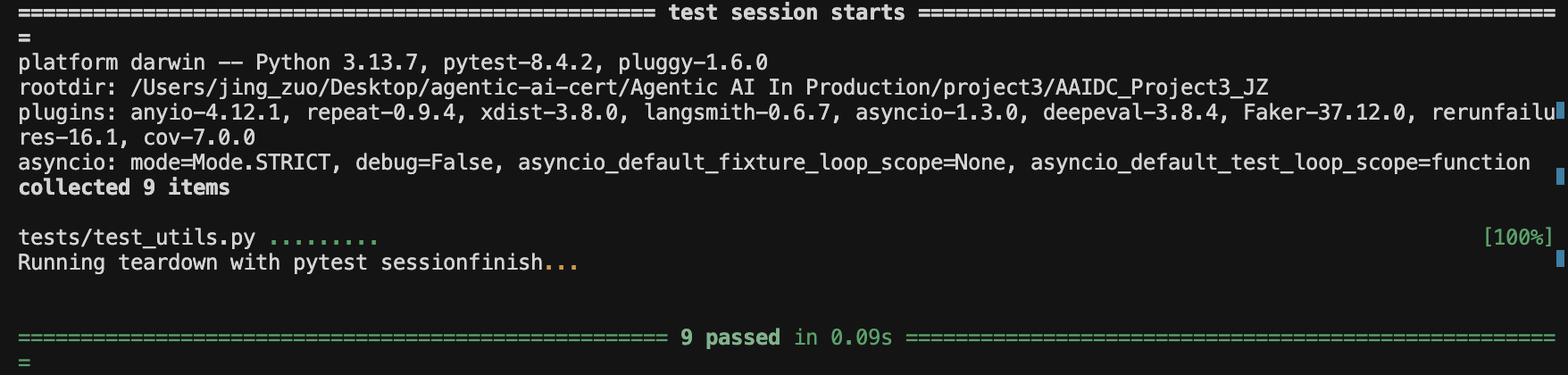

Tool-Level Unit Tests

Each custom tool is tested independently:

3.1. test_tools_data_lookup.py

3.2. test_tools_cancellation_rules.py

3.3. test_tools_refund_calculator.py

3.4. test_tools_refund_logger.py

3.5. test_tools_notice_generator.py

This test validates orchestrator routing logic, agent-to-agent handoffs, tool invocation sequencing, state-passing consistency, the multi-agent chain, and HITL resume handoffs. It ensures that agents communicate correctly, that no schema corruption occurs across steps, and that routing is deterministic.

Result:

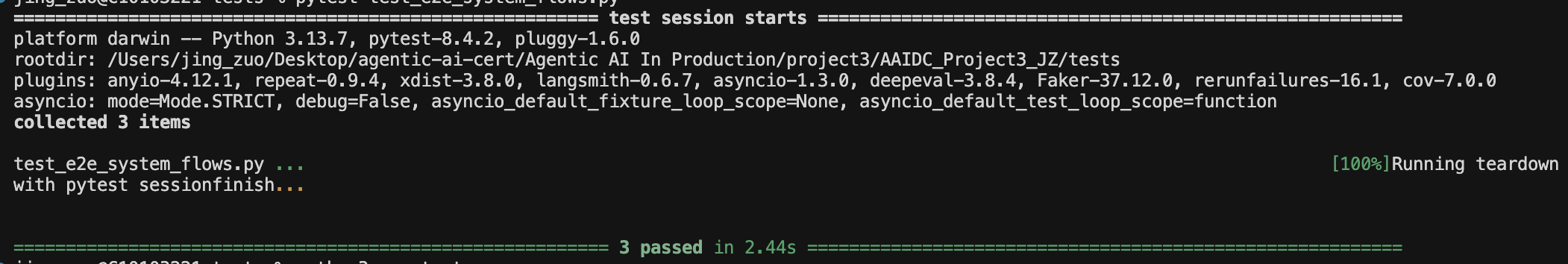

The end-to-end test runs the full insurance cancellation graph (intake → analysis → HITL → refund → HITL → logger → summary) with mocked user input, HITL decisions, and LLM. It verifies that the entire flow completes and produces the expected outputs (refund log, PDF notice).

Result:

git clone https://github.com/jingozuo/AAIDC_Project3_JZ.git

python -m venv venv

source venv/bin/activate

pip install -r requirements.txt

cp .env.example .env

llm_model: llama-3.3-70b-versatile

python codes/main.py

Or from the codes directory:

cd codes && python main.py

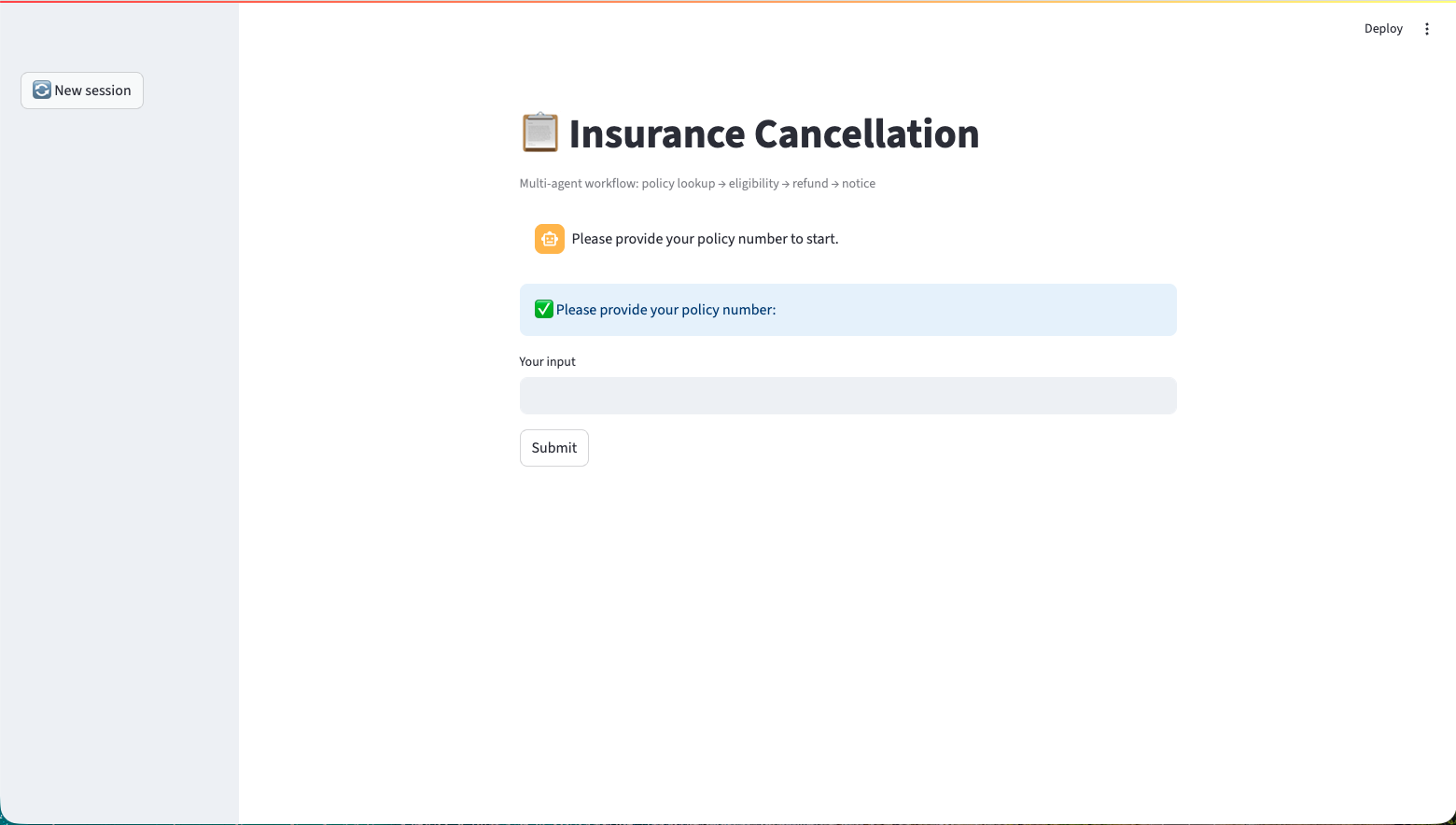

streamlit run codes/streamlit_app.py

pytest tests/test_e2e_system_flows.py

Run a single test, e.g. test_tools_data_lookup.py

pytest tests/test_tools_data_lookup.py

The system uses Streamlit to provide an interactive conversational workflow. The UI follows a step-based stateful flow, where the session state determines the next prompt and available user actions.
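A step-based flow of this kind can be modelled as a small state machine. The sketch below is plain Python so that it runs without Streamlit; in a Streamlit app the `state` dictionary would typically live in `st.session_state`. The step names, prompts, and actions are hypothetical and do not reproduce the project's actual UI.

```python
# Hypothetical steps, prompts, and actions; the real app's flow may differ.
FLOW = ["enter_policy_number", "confirm_details",
        "review_eligibility", "review_refund", "done"]

PROMPTS = {
    "enter_policy_number": "Please enter your policy number.",
    "confirm_details": "Are these policy details correct?",
    "review_eligibility": "Approve the eligibility decision?",
    "review_refund": "Approve the calculated refund?",
    "done": "The workflow has finished.",
}

ACTIONS = {
    "enter_policy_number": ["submit"],
    "confirm_details": ["yes", "no"],
    "review_eligibility": ["approve", "reject"],
    "review_refund": ["approve", "reject"],
    "done": [],
}


def advance(state: dict, action: str) -> dict:
    """Return a new state whose step depends only on the current step and action."""
    step = state["step"]
    if action not in ACTIONS[step]:
        raise ValueError(f"action {action!r} is not available at step {step!r}")
    if action == "reject":
        return {**state, "step": "done"}
    if action == "no":
        return {**state, "step": "enter_policy_number"}
    return {**state, "step": FLOW[FLOW.index(step) + 1]}


state = {"step": "enter_policy_number"}
for action in ["submit", "yes", "approve"]:
    state = advance(state, action)
rejected = advance(state, "reject")
```

The current step alone selects the prompt (`PROMPTS[state["step"]]`) and the buttons to render (`ACTIONS[state["step"]]`), which is what keeps the conversation predictable across reruns.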

The interface includes:

A demonstration video is attached to illustrate the full interaction flow.

The workflow proceeds as follows:

The system implements a centralized retry, timeout, and logging mechanism to ensure production-grade resilience and operational traceability.

Operations that depend on external services—such as tool calls and LLM invocations—are executed through the call_with_retry() wrapper. This mechanism provides:

This design improves system reliability while ensuring all operational events are recorded for monitoring and debugging.

If an operation fails, the system waits based on the backoff rule and retries. If all retry attempts fail, the retry is marked as exhausted, and fallback handling is triggered.
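The wait-and-retry behaviour described above can be illustrated with a short exponential-backoff loop. This is not the project's call_with_retry() implementation, whose signature is not shown here: the name `retry_with_backoff`, its parameters, the `RetryExhausted` exception, and the interpretation of "3 times" as three total attempts are all assumptions.

```python
import time


class RetryExhausted(Exception):
    """Raised when every attempt has failed (hypothetical exception type)."""


def retry_with_backoff(operation, max_attempts: int = 3,
                       base_delay: float = 0.5, sleep=time.sleep):
    """Call operation(); on failure wait base_delay * 2**(attempt - 1) and retry."""
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except Exception as exc:  # broad on purpose: any failure triggers a retry
            last_error = exc
            if attempt < max_attempts:
                sleep(base_delay * 2 ** (attempt - 1))
    raise RetryExhausted(f"failed after {max_attempts} attempts") from last_error


# Demonstration: an operation that fails twice, then succeeds.
calls = {"n": 0}


def flaky():
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("temporary failure")
    return "ok"


delays = []  # record the waits instead of actually sleeping
result = retry_with_backoff(flaky, sleep=delays.append)
```

Injecting the `sleep` function keeps tests fast, and raising a dedicated exception once attempts run out gives the caller a single place to trigger fallback handling.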

2.1. LLM Request Timeout:

The LLM initialization function (get_llm in codes/llm.py) supports an optional request_timeout parameter. This value limits how long the system waits for an LLM response before terminating the request.

The timeout can be configured in: config/config.yaml

Both the main application and the Streamlit interface read this value and pass it to the LLM client.

2.2. Retry Attempt Timeout:

The retry wrapper (call_with_retry) also supports a per-attempt timeout.

Each operation attempt runs in a separate thread. If execution exceeds the specified timeout:
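Running an attempt in a separate thread with a deadline can be sketched with the standard concurrent.futures module. The helper name `run_with_timeout` is hypothetical, and this is only one possible way to implement a per-attempt timeout, not necessarily the one used inside call_with_retry.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout


def run_with_timeout(operation, timeout_seconds: float):
    """Run operation() in a worker thread and stop waiting after timeout_seconds.

    Python cannot forcibly stop a thread, so a timed-out operation keeps
    running in the background; only the caller stops waiting for it.
    """
    executor = ThreadPoolExecutor(max_workers=1)
    future = executor.submit(operation)
    try:
        return future.result(timeout=timeout_seconds)
    except FutureTimeout:
        raise TimeoutError(f"attempt exceeded {timeout_seconds}s") from None
    finally:
        executor.shutdown(wait=False)  # do not block on a still-running worker


fast_result = run_with_timeout(lambda: "done", timeout_seconds=1.0)

try:
    run_with_timeout(lambda: time.sleep(0.3), timeout_seconds=0.05)
    timed_out = False
except TimeoutError:
    timed_out = True
```

A timeout raised this way looks like any other failure to a surrounding retry loop, so the same backoff and exhaustion handling can apply.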

3.2. Retry Exhausted - If all attempts fail:

3.3. Safe Fallback Mechanism

If the system encounters conditions such as:

The system returns a predefined safe message:

"Your insurance cancellation has been processed. Please retain this notice for your records."

This prevents the system from crashing and ensures that no partial or corrupted LLM output is exposed.
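The fallback pattern can be sketched as a wrapper that returns the predefined message whenever generation fails or the output does not validate. The function name `notice_or_fallback` and the two trigger conditions used here (an exception during generation, or a failed validation) are assumptions for illustration.

```python
SAFE_MESSAGE = ("Your insurance cancellation has been processed. "
                "Please retain this notice for your records.")


def notice_or_fallback(generate, is_valid) -> str:
    """Return generated text only if generation succeeds and validation passes."""
    try:
        text = generate()
    except Exception:
        return SAFE_MESSAGE  # never surface a stack trace or partial output
    return text if is_valid(text) else SAFE_MESSAGE


def failing_generator() -> str:
    raise RuntimeError("LLM unavailable")


def non_empty(text: str) -> bool:
    return bool(text.strip())


good = notice_or_fallback(lambda: "Policy POL1001 has been cancelled.", non_empty)
empty = notice_or_fallback(lambda: "   ", non_empty)
crashed = notice_or_fallback(failing_generator, non_empty)
```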

Events captured include:

This centralized logging design enables:

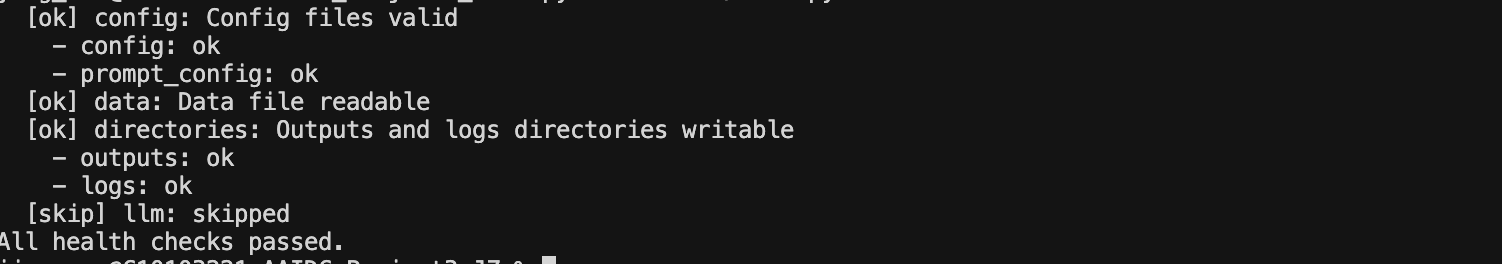

Health Check includes:

5.1. Configuration files:

5.2. Data file:

5.3. Directories:

Run the health check:

python codes/main.py --health

The output looks like:

These checks verify your configuration, data files, folders, and optional AI model connectivity.
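A file-and-directory check of this kind might be sketched as follows. The function name `run_health_check` and the exact set of paths checked are assumptions, and the sketch omits the optional AI model connectivity check.

```python
import tempfile
from pathlib import Path


def run_health_check(root: Path, files: list, dirs: list) -> dict:
    """Map each relative path to True if it exists with the expected type."""
    results = {}
    for rel in files:
        results[rel] = (root / rel).is_file()
    for rel in dirs:
        results[rel] = (root / rel).is_dir()
    return results


# Demonstration against a temporary project layout with some pieces missing.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "config").mkdir()
    (root / "config" / "config.yaml").write_text("llm_model: example\n")
    (root / "data").mkdir()
    report = run_health_check(
        root,
        files=["config/config.yaml", "data/insurance_policies.csv"],
        dirs=["data", "output"],
    )

all_healthy = all(report.values())
```

Reporting every path rather than stopping at the first failure lets a user fix all missing pieces in one pass.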

The troubleshooting guide helps find and fix common problems when running the insurance cancellation system, whether using the command line, the Streamlit app, or the tests.

Common problems and their fixes are listed below:

Overall, check your config files, data files, and API keys first, then look at the logs for retry or fallback events. The system will try to recover automatically when possible.

The Multi-Agent Insurance Cancellation System is a production-ready AI workflow designed to automate policy cancellation in a safe, structured, and auditable manner. The system combines modular multi-agent orchestration, strict JSON schema enforcement, Guardrails-based validation, and centralized retry and logging mechanisms to ensure reliability and compliance.

By enforcing deterministic routing, input sanitization, output filtering, and exponential backoff retry logic, the system minimizes hallucination risk, prevents malformed outputs, and gracefully handles failures without disrupting the user experience. All critical events — including validation checks, retry attempts, and fallback executions — are captured in structured compliance logs to support traceability and operational monitoring.

Comprehensive unit, integration, and end-to-end testing further ensures workflow integrity and resilience under real-world conditions.

Overall, the system demonstrates a robust approach to building secure, maintainable, and enterprise-ready agentic AI applications, prioritizing safety, reliability, and transparency over uncontrolled autonomy.